Introduction

AI workloads are pushing rack densities from the low teens of kilowatts to many tens of kilowatts per rack, and that shift forces a new set of cooling decisions. For design engineers planning new campuses, the question isn’t whether liquid will arrive, but how to combine liquid and air in ways that scale predictably, fit local climates, and keep operational risk low. This guide compares liquid cooling approaches with incremental air‑side optimizations, then shows how to use density bands and decision criteria to choose a safe, standards‑aligned path.

Across the industry, optimized air cooling with full containment can often serve 20–40 kW per rack in production; beyond that, most sites turn to liquid augmentation to control chip hot spots and reduce room load. Recent operator surveys and vendor primers echo this pattern, while standards communities such as ASHRAE TC 9.9 and OCP’s Advanced Cooling projects continue to publish guidance on liquid integration and resiliency. For context, 2024 reporting put average rack density in the low teens of kilowatts even as high‑density pockets expanded, a sign that air‑only designs are plateauing while AI demand grows.

Key takeaways

For 40–80 kW per rack with mixed training and inference, a D2C‑first hybrid block is the most adaptable backbone; RDHx remains a practical bridge in brownfields and a complement for non‑D2C rows.

Treat cooling as an architectural choice at block level, not a one‑off row upgrade: standardize manifolds, CDUs, and controls so blocks can repeat.

Climate decides a lot: arid sites often favor closed‑loop liquid plus dry coolers (lower WUE), while temperate/cold sites can target higher return temperatures for heat reuse via heat pumps.

Start with clear density bands and workload mix, then evaluate retrofit constraints and greenfield opportunities. Keep leak detection, coolant quality, and CDU redundancy front and center.

Density and limits

Air-side thresholds today

With modern hot/cold aisle containment and tight airflow management, many facilities sustain roughly 20–40 kW per rack. Exceptional designs may approach ~50 kW for specific rows, but sustained operation above ~40 kW typically requires liquid assistance to avoid thermal throttling and room‑level recirculation. Industry summaries and vendor engineering notes converge on this range.

According to a 2024 industry summary, average rack density was still near the low teens while high‑density clusters expanded; see the Data Center Knowledge report on density trends: “Data Center Rack Density Has Doubled—and It’s Still Not Enough” (2024).

For practical containment methods that extend air cooling’s useful range, consult Vertiv’s containment strategies explainer (2025) and SubZero Engineering’s hot‑aisle containment overview (2026).

RDHx as a bridge technology

Rear door heat exchangers (RDHx) mount directly to racks and remove a large fraction of server exhaust heat at the source, reducing room load. Passive doors suit moderate loads; active doors with fans and chilled‑water loops commonly support roughly 20 kW to several tens of kilowatts per rack, with some product lines rated higher under specific conditions. RDHx shines in retrofits because it preserves server form factors and minimizes server‑side changes. It also pairs well with improved containment and targeted airflow fixes.

For indicative capacities and retrofit context, see vendor and operator materials such as Vertiv’s RDHx data sheets/specs (accessed 2026) and Schneider Electric’s retrofit notes citing active door use cases: “Upgrade Legacy Data Centers for AI Workloads with RDHx” (2025).

Liquid and immersion ranges

Direct‑to‑chip (D2C) cold plates remove heat at the processor and other hot components, typically supporting 50–120+ kW per rack today, with warm‑water supply/return temperatures that can be tuned for heat‑reuse strategies. Immersion systems (single‑phase and two‑phase) cover the very high end—commonly 100–200+ kW per rack or tank—while introducing different operational models. Because vendor maxima vary, use these as planning bands, then confirm with project‑specific thermal modeling and manufacturer data.
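As a rough illustration of how a planning band translates into loop sizing, the sketch below applies the standard sensible-heat relation (heat = mass flow × specific heat × temperature rise) to estimate coolant flow for one rack. The water property values are textbook approximations, and the 80 kW rack, 75% cold-plate capture fraction, and 10 K rise in the usage line are hypothetical inputs, not figures from any vendor.

```python
# Sketch: water-side flow needed to carry a rack's heat at a given temperature rise.
# Property values are approximations for plain water; glycol mixes will differ.

WATER_CP_KJ_PER_KG_K = 4.18     # specific heat of water, approximate
WATER_DENSITY_KG_PER_L = 0.99   # density of warm water, approximate


def coolant_flow_lpm(heat_kw: float, delta_t_k: float) -> float:
    """Return litres per minute needed to absorb heat_kw with a delta_t_k rise."""
    if heat_kw < 0 or delta_t_k <= 0:
        raise ValueError("heat must be non-negative and delta-T must be positive")
    mass_flow_kg_s = heat_kw / (WATER_CP_KJ_PER_KG_K * delta_t_k)
    return mass_flow_kg_s / WATER_DENSITY_KG_PER_L * 60.0


if __name__ == "__main__":
    # Hypothetical case: 80 kW rack, 75% of heat captured by cold plates, 10 K rise.
    print(f"{coolant_flow_lpm(80 * 0.75, 10.0):.1f} L/min")
```

Halving the temperature rise doubles the required flow, which is one reason supply/return targets and manifold sizing need to be settled together.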

For D2C envelopes and CDU roles, see nVent’s direct‑to‑chip overview (2025) and Vertiv’s liquid cooling primers on advanced D2C options: “Pumped Two‑Phase Direct‑to‑Chip Cooling” (2025).

For immersion capacity bands, vendor pages provide indicative ranges: Submer SmartPod EXO and EVO families (accessed 2026), GRC AI and case references (accessed 2026), and LiquidStack two‑phase immersion (accessed 2026).

Hybrid cooling paths

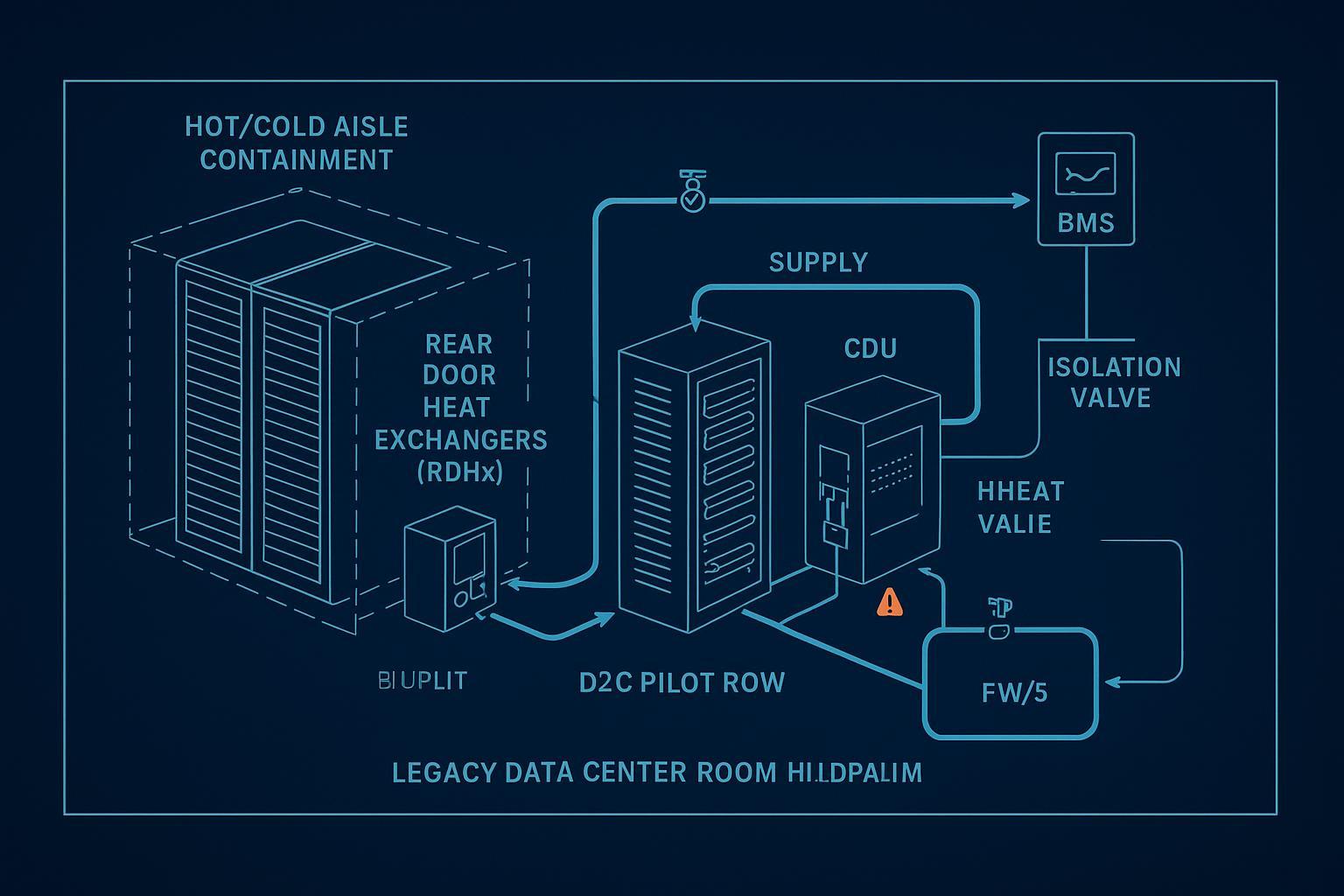

Brownfield increments that work

Begin with airflow hygiene: seal leakage paths, balance tiles, and enforce full containment.

Add RDHx to legacy rows to offload room heat without changing server internals. Verify floor loading and door clearances; plan piping routes and maintenance access.

Pilot D2C rows where AI training or sustained high‑power inference clusters sit. Use a rack‑ or row‑level CDU to separate facility water from the technology cooling system, instrument manifolds for pressure/flow/temperature, and integrate alarms into BMS/DCIM.

These increments raise effective density while building the organizational muscle for liquid operations—SOPs for quick disconnect handling, coolant quality checks, and failure drills. Think of it like learning to drive a manual transmission: you’ll stall a few times in practice, so design the operations runway (training, spares, and isolation valves) before you hit highway speeds.

Greenfield blocks for AI/HPC

For new campuses, design D2C‑first blocks as repeatable units:

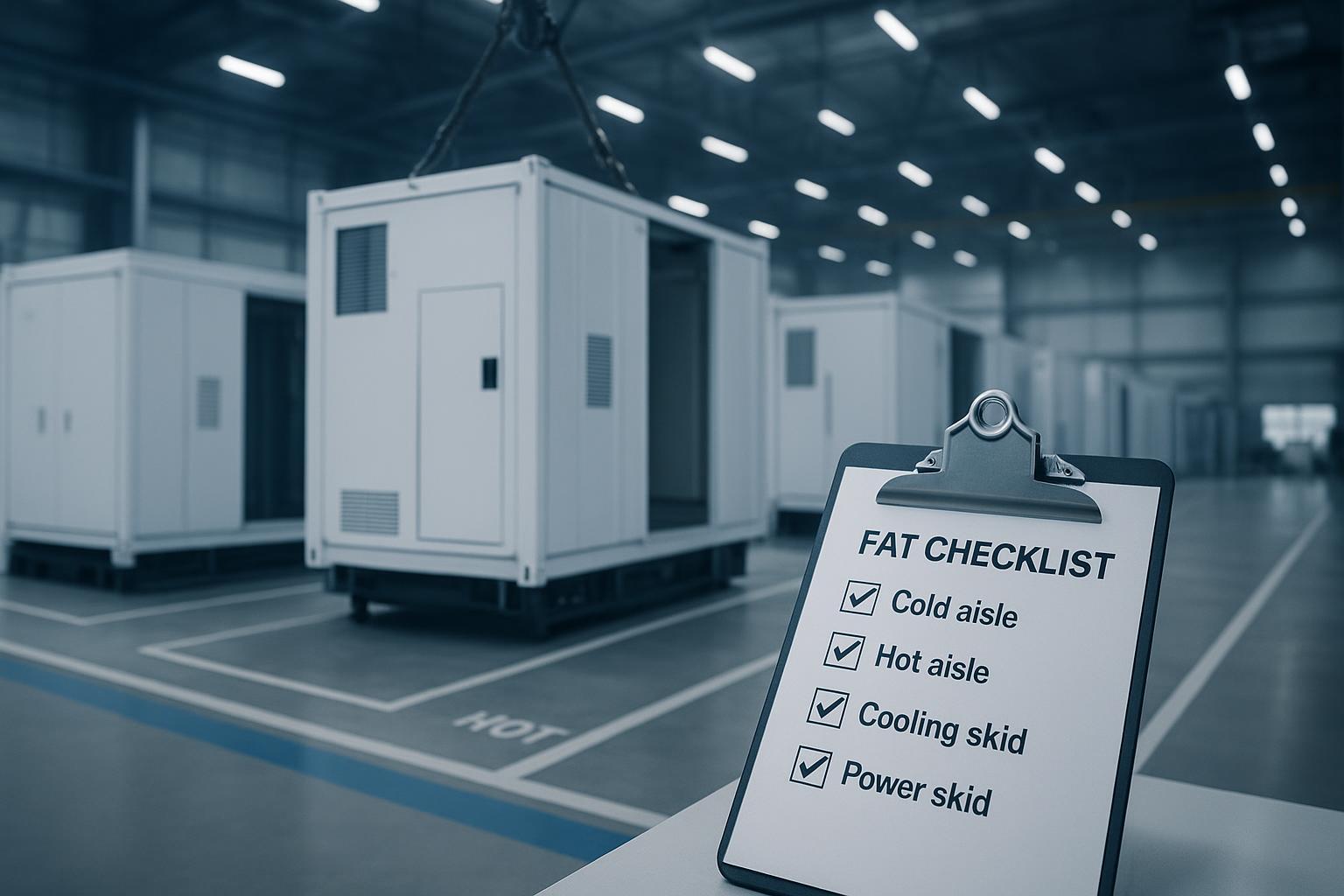

Standardize the manifold architecture (supply/return headers, isolation valves, flow balancing) and CDU sizing with N+1 pumps.

Target warm‑water operation where climate allows (e.g., 30–40 °C supply, higher returns) to improve heat‑reuse feasibility and economization hours.

Reserve RDHx for non‑D2C rows or transitional fleets, so the room remains balanced while the backbone is liquid‑first.

Ensure electrical and controls raceways, leak detection cabling, and service clearances are embedded in the block template.
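The N+1 pump sizing in the block template can be sketched as a simple count: enough duty pumps to meet design flow, plus one spare. The function name and the flow figures in the usage line are hypothetical; real CDU selection also depends on pump curves and loop pressure drop, which this ignores.

```python
import math


def pumps_required(design_flow_lpm: float, pump_rated_lpm: float, spares: int = 1) -> int:
    """Return total pumps for an N+spares arrangement (N duty pumps plus spares)."""
    if design_flow_lpm <= 0 or pump_rated_lpm <= 0 or spares < 0:
        raise ValueError("flows must be positive and spares non-negative")
    duty_pumps = math.ceil(design_flow_lpm / pump_rated_lpm)
    return duty_pumps + spares


if __name__ == "__main__":
    # Hypothetical block: 900 L/min design flow, pumps rated at 300 L/min each.
    print(pumps_required(900, 300))
```

Keeping this calculation in the block template means every repeated block inherits the same redundancy rule instead of being sized ad hoc.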

Operational risks and controls

Leaks and compatibility: choose materials validated for the temperature/pressure envelope; deploy conductivity or optical leak sensors at low points and under manifolds; implement automatic isolation valves on alarm.

Coolant quality: define chemistry and filtration; monitor pH and conductivity; maintain fine filters per vendor guidance.

Redundancy and monitoring: N+1 CDU pumps, sensorized manifolds, pressure/flow interlocks, and staged shutdowns; build playbooks for power or pump failures.

Human factors: dripless quick disconnects, ESD safety, fluid handling SOPs, and change‑control windows.
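One way to make these controls concrete is to write the alarm response as an explicit decision rule. The sketch below is a hypothetical policy, not a standard or a vendor's control logic: the `LoopStatus` fields, the action names, and the priority order (leak first, then loss of flow, then loss of redundancy) are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NORMAL = "normal"
    ALERT = "alert"                      # notify BMS/DCIM, keep running
    ISOLATE = "isolate"                  # close automatic isolation valves
    STAGED_SHUTDOWN = "staged_shutdown"  # shed IT load in stages


@dataclass(frozen=True)
class LoopStatus:
    leak_detected: bool
    pumps_running: int
    duty_pumps_needed: int   # pumps needed to hold design flow (the "N" in N+1)
    flow_lpm: float
    min_flow_lpm: float      # hypothetical interlock threshold


def decide(status: LoopStatus) -> Action:
    """Pick the response for one secondary loop under the assumed policy."""
    if status.leak_detected:
        return Action.ISOLATE
    if status.pumps_running < status.duty_pumps_needed or status.flow_lpm < status.min_flow_lpm:
        return Action.STAGED_SHUTDOWN
    if status.pumps_running == status.duty_pumps_needed:
        return Action.ALERT  # still cooling, but the spare pump is gone
    return Action.NORMAL


if __name__ == "__main__":
    print(decide(LoopStatus(False, 2, 2, 850.0, 600.0)))
```

Writing the rule down this way also gives the failure drills something testable: each playbook scenario becomes an input with an expected action.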

Decision framework

By density bands and workload mix

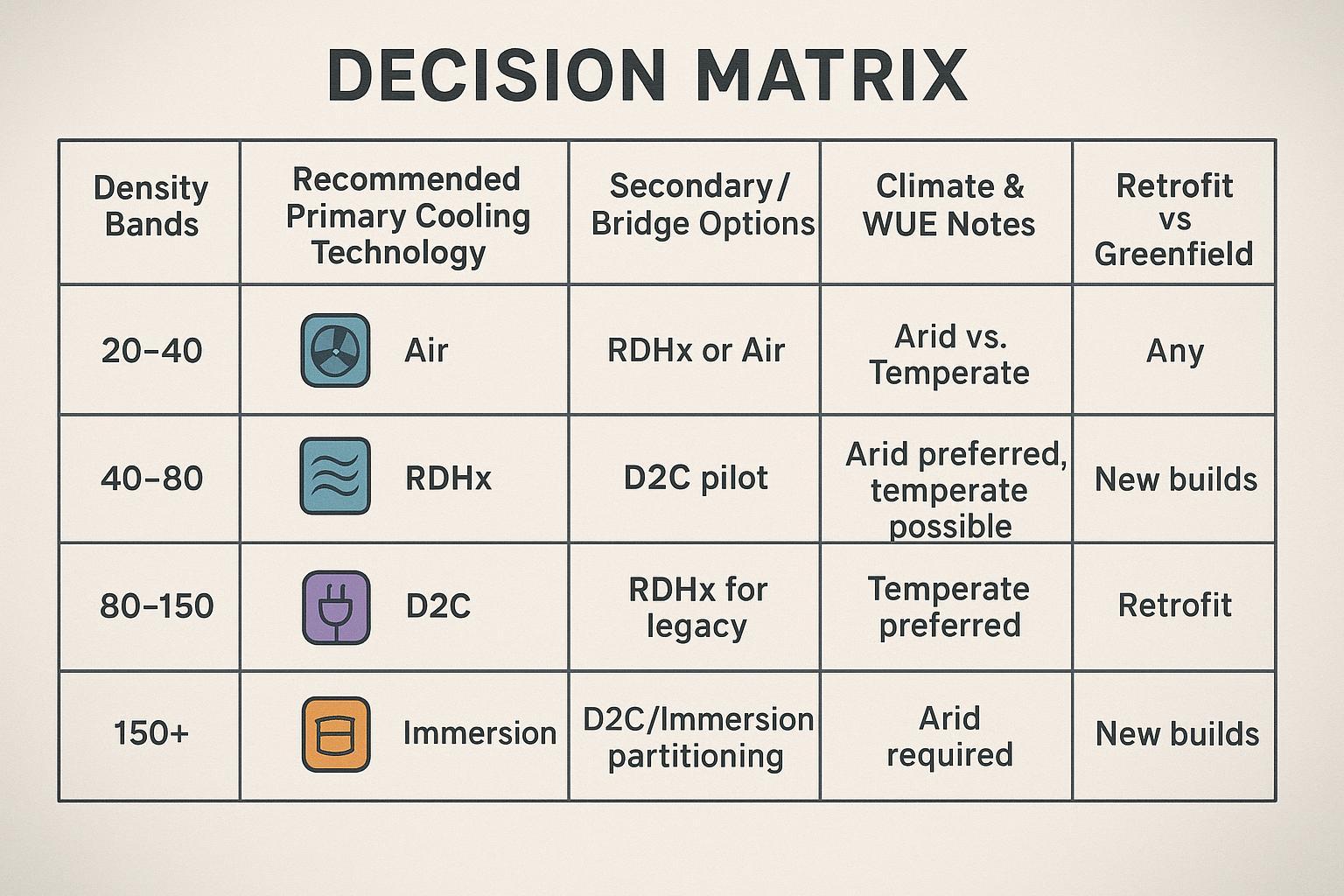

When planning AI data center cooling for mixed training/inference, treat 40–80 kW per rack as the inflection band where D2C becomes the primary path and RDHx plays a supporting role for legacy or adjacent rows. Use the following narrative bands as starting points, then validate with CFD and vendor data:

20–40 kW/rack: Optimized air plus containment; RDHx augmentation where hotspots or future growth are expected.

40–80 kW/rack: D2C‑first hybrid blocks with standardized manifolds and CDUs; RDHx captures residual heat on non‑D2C rows.

80–150 kW/rack: D2C primary with warm‑water loops; RDHx applies only to legacy; plan higher return temperatures for heat reuse.

150 kW+/rack: Immersion or specialized D2C; re‑evaluate layout, service model, and safety/training.
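The bands above can be captured as a small lookup for use in early capacity planning. This is a minimal sketch: the treatment of boundary values (40 kW falls into the 40–80 band, and so on) and the label for densities below 20 kW are assumptions, since the bands as written share their endpoints and start at 20 kW.

```python
def cooling_band(kw_per_rack: float) -> str:
    """Map a planned rack density (kW) to the starting-point band listed above."""
    if kw_per_rack < 0:
        raise ValueError("rack density cannot be negative")
    if kw_per_rack < 20:
        # Assumption: the listed bands start at 20 kW, so this label is ours.
        return "below listed bands: conventional air cooling (assumed)"
    if kw_per_rack < 40:
        return "optimized air plus containment; RDHx where hotspots or growth are expected"
    if kw_per_rack < 80:
        return "D2C-first hybrid block; RDHx on non-D2C rows"
    if kw_per_rack < 150:
        return "D2C primary with warm-water loops; RDHx for legacy only"
    return "immersion or specialized D2C; re-evaluate layout and service model"


if __name__ == "__main__":
    for density in (25, 60, 120, 180):
        print(density, "kW/rack ->", cooling_band(density))
```

The output is only a starting point; CFD and vendor data still decide the final design.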

Rhetorical check: if a pilot row hits 55–60 kW/rack and fans are near max, do you want to squeeze out the last few kilowatts with airflow tweaks, or anchor a block‑level D2C manifold you can repeat across the hall? The latter usually scales more cleanly.

Climate, economization, and WUE

Arid regions: Prioritize closed‑loop liquid with dry coolers to reduce water use (lower WUE). Evaporative economizers may be constrained by water tariffs and scarcity; see WUE primers for trade‑offs such as the Data Center Knowledge guide to WUE (2025).

Temperate/cold regions: Favor warm‑water D2C that drives higher return temperatures and extends free‑cooling hours; pair with heat pumps for district heating where viable. Public examples describe warm‑water outlets being boosted by heat pumps to network temperatures; see AWS Tallaght’s description in “District Heating Using Data Centers” (2024).

Remember that total water footprint also depends on the electricity mix; closed‑loop liquid often reduces onsite water draw, but grid‑embedded water varies by region.
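For comparing sites, WUE is conventionally computed as annual site water use in litres divided by annual IT equipment energy in kWh. The sketch below applies that ratio; the two sites and their figures are hypothetical placeholders, not measurements.

```python
def wue_l_per_kwh(annual_water_litres: float, annual_it_energy_kwh: float) -> float:
    """Return site WUE in litres per kWh of IT energy."""
    if annual_water_litres < 0 or annual_it_energy_kwh <= 0:
        raise ValueError("water must be non-negative and IT energy positive")
    return annual_water_litres / annual_it_energy_kwh


if __name__ == "__main__":
    # Hypothetical 1 MW IT load running all year (8,760 h).
    it_energy_kwh = 1_000 * 8_760
    print("evaporative site:", round(wue_l_per_kwh(15_000_000, it_energy_kwh), 2))
    print("dry-cooler site: ", round(wue_l_per_kwh(500_000, it_energy_kwh), 2))
```

This covers onsite water only; the grid-embedded water mentioned above needs a separate estimate based on the regional electricity mix.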

Retrofit versus greenfield choices

Brownfield triggers: persistent hotspots above 30–35 kW/rack after airflow fixes; capacity caps in CRAH/CRAC systems; rising water costs for evaporative systems.

Greenfield triggers: blocks targeting ≥40 kW/rack average; long‑term AI/HPC roadmap; desire for heat‑reuse infrastructure and for chillers compatible with the lower‑GWP refrigerant requirements expected from 2027 onward. For evolving liquid resiliency guidance and interface definitions, track ASHRAE Journal discussions on liquid cooling (2024–2026) and the OCP Advanced Cooling project hub (accessed 2026).

Economics and sustainability

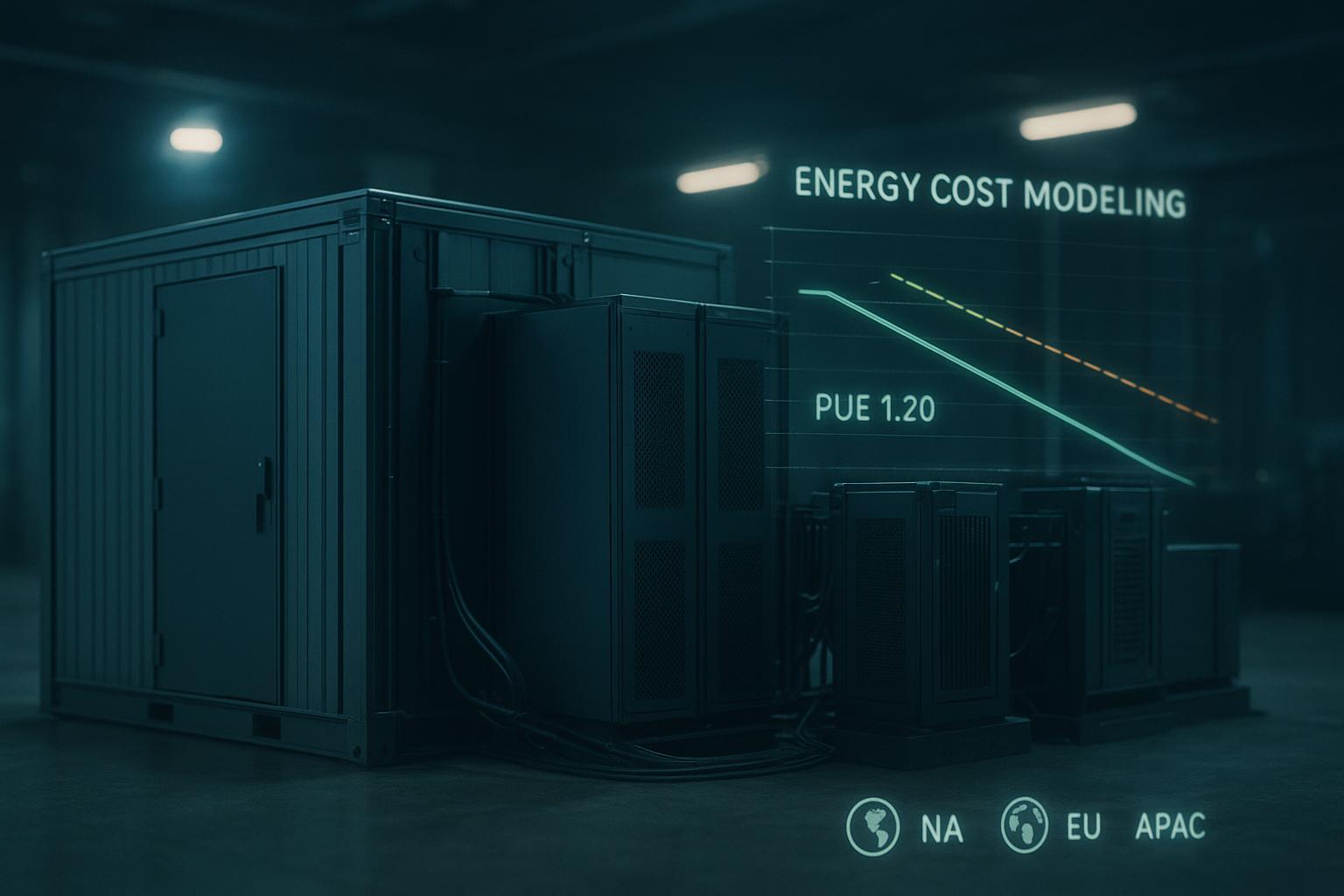

TCO ranges and payback levers

Total cost of ownership hinges on a few site‑specific levers: electricity price, water tariffs, climate‑driven economization hours, and density uplift that defers new builds. RDHx can lower room cooling energy quickly in brownfields; D2C reduces fan energy and throttling risk while enabling higher supply temperatures that improve chiller and dry‑cooler efficiency. Use scenario models rather than universal claims, and reflect site‑specific labor and maintenance shifts (e.g., filter changes vs coolant sampling and QD inspections). For methodology background, see vendor primers on D2C and independent articles summarizing RDHx energy effects such as Vertiv’s D2C primer (2024) and DCD’s RDHx myth‑busting opinion (2026).

A worked example approach: take a 10‑rack pilot block at 60 kW/rack average (mixed training/inference). Compare (a) RDHx on all 10 legacy racks, vs (b) five D2C racks plus RDHx on the other five. Model cooling energy, water draw, and space savings from density uplift over a three‑year horizon. The result will vary by climate and tariffs, but this structure exposes the key levers: liquid’s higher capital intensity offset by operating savings and capacity headroom.
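The comparison can be set up as a small scenario model. The sketch below covers only the cooling-energy term over three years; water draw and space savings would need their own inputs. Every coefficient in `Assumptions` (cold-plate capture fraction and the cooling energy overhead per unit of heat on each path) is a hypothetical placeholder to be replaced with site and vendor data, so the outputs illustrate the structure of the comparison, not a result.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8760


@dataclass(frozen=True)
class Assumptions:
    # All values are placeholders, not measured or vendor figures.
    d2c_capture: float = 0.75       # share of a D2C rack's heat taken by cold plates
    liquid_overhead: float = 0.05   # cooling kW per kW of heat on the warm-water path
    rdhx_overhead: float = 0.15     # cooling kW per kW of heat on the RDHx/air path


@dataclass(frozen=True)
class Scenario:
    name: str
    d2c_racks: int
    rdhx_racks: int
    kw_per_rack: float = 60.0


def cooling_energy_mwh(s: Scenario, a: Assumptions = Assumptions(), years: int = 3) -> float:
    """Estimate cooling energy (MWh) over the horizon under the stated assumptions."""
    d2c_heat_kw = s.d2c_racks * s.kw_per_rack
    liquid_heat_kw = d2c_heat_kw * a.d2c_capture
    # Residual heat from D2C racks is assumed to join the RDHx/air path.
    air_heat_kw = (d2c_heat_kw - liquid_heat_kw) + s.rdhx_racks * s.kw_per_rack
    cooling_kw = liquid_heat_kw * a.liquid_overhead + air_heat_kw * a.rdhx_overhead
    return cooling_kw * HOURS_PER_YEAR * years / 1000.0


if __name__ == "__main__":
    for scenario in (Scenario("(a) RDHx on all 10", 0, 10),
                     Scenario("(b) 5 D2C + 5 RDHx", 5, 5)):
        print(f"{scenario.name}: {cooling_energy_mwh(scenario):.0f} MWh over 3 years")
```

Sweeping the overhead coefficients across plausible ranges for a given climate is more informative than any single run, since the ranking of the two options depends on them.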

Heat reuse and ESG impacts

Liquid loops allow higher return temperatures (often 40–60 °C at the CDU), which improves the feasibility of district heating tie‑ins with heat pumps. Public examples from 2024–2026 show warm‑water data centers delivering ~23–30 °C to an energy center, then boosting to 70–85 °C for networks; see All Things Distributed on AWS Tallaght (2024) and Verne Global’s account of waste‑heat reuse (2024). Where feasible, heat reuse can cut scope 2 emissions and create local benefits; the business case depends on proximity, load factor, and policy incentives.

Supply chain and standards readiness

From 2027 onward, refrigerant regulations in the EU and US push chiller selections toward lower‑GWP blends, which may alter plant configurations and safety code requirements; see Vertiv’s low‑GWP refrigerants white paper (2025) and Danfoss’ AIM Act overview (2023). Standards communities—ASHRAE TC 9.9 and OCP’s Advanced Cooling—continue to mature guidance on liquid resiliency, coolant quality, and interface definitions; track updates via ASHRAE Journal and the OCP specs hub.

Neutral brand example (non‑promotional): In a 40–80 kW/rack module, a D2C‑first block can be procured as standardized manifolds and a row‑level CDU, with RDHx applied to adjacent legacy rows to capture residual heat. A provider such as Coolnet supports modular liquid and hybrid retrofit configurations—cold‑plate solutions for D2C, RDHx options, CDUs, and modular rows—so design teams can align procurement with the D2C‑first architecture while phasing brownfield upgrades. This is a topology example, not a performance claim; verify capacities and controls against project requirements and applicable standards.

Conclusion

For 2026–2030 planning, treat 40–80 kW per rack as the design center for mixed AI loads. Use D2C‑first blocks as your backbone, retain RDHx where it accelerates brownfield relief, and plan for warm‑water operation that supports both efficiency and heat‑reuse options. Near‑term actions include: finish airflow hygiene, pilot a small D2C block with robust SOPs and leak detection, and update the plant roadmap for low‑GWP refrigerants and dry‑cooler compatibility. As standards and supply chains mature, expect clearer interoperable interfaces for manifolds, CDUs, and monitoring—making hybrid paths more repeatable across campuses.