Introduction

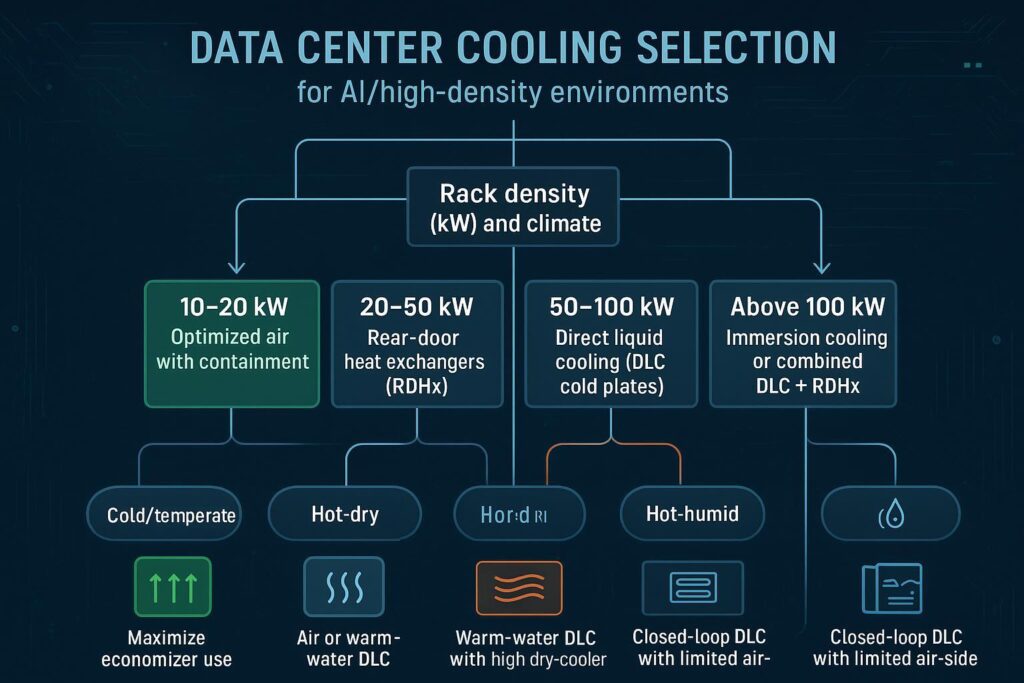

AI and accelerated computing change the thermal equation. GPU-rich racks concentrate heat, raise local heat flux, and push past the limits of legacy room-based air systems. The result is a selection problem—not just “which cooler,” but which method aligns with rack density, climate, facility constraints, and operational risk. This guide frames AI data center cooling as a decision workflow you can take from concept to schematic design.

This guide gives architects and planning consultants a defensible way to choose among optimized air with containment, rear-door heat exchangers (RDHx), direct liquid cooling (DLC/cold plates), and immersion. You’ll get density bands, climate-aware pathways, retrofit versus greenfield patterns, KPIs and sustainability targets, and an implementation roadmap you can take into design development.

Who should read: data center design institutes/consultants (primary), with useful context for MEP/operations, IT/AI platform owners, and procurement/finance. Expected outcome: a shortlist of viable AI data center cooling options per hall, plus the technical parameters to model in your 8760‑hour energy simulations and schematic design.

Key takeaways

Start with rack kW and heat flux; then filter by climate-driven economizer potential and facility constraints.

Optimized air with strong containment can serve ~10–20 kW/rack in suitable climates; beyond that, capture heat at the rack (RDHx) or the chip (DLC).

RDHx is the most common retrofit bridge for ~20–50 kW/rack; DLC becomes primary for ~50–100+ kW/rack; immersion fits extreme densities or special environments.

Warmer water setpoints in DLC (often ~35–45 °C supply with ~8–12 °C ΔT) expand dry-cooler hours and heat-reuse viability, improving sustainability.

Use standards and hourly modeling: align inlet temps with ASHRAE TC 9.9 classes and evaluate economizer hours per ASHRAE 90.4 methodology.

Selection framework

Define density and heat flux

Begin with the rack’s sustained thermal load and the component-level heat flux. For mainstream air-cooled IT, ASHRAE TC 9.9 recommends 18–27 °C (64.4–80.6 °F) rack inlet temperatures; allowable envelopes vary by class. The U.S. Department of Energy’s 2024 guide reiterates that operating toward the warmer end of the recommended range, when safe for equipment, can increase economizer hours and overall efficiency. For definitions and context, see the U.S. DOE’s Best Practices Guide for Energy‑Efficient Data Center Design (2024) and ASHRAE’s 2024 Data Center Resources hub announcement.

For density bands, practical thresholds many teams use today are:

~10–20 kW/rack: optimized air with full containment and rigorous airflow management, strongest in cooler climates.

~20–50 kW/rack: RDHx captures exhaust heat at the source and eases retrofit complexity.

~50–100+ kW/rack: DLC (cold plates) becomes primary, often paired with warm-water secondary loops.

>100 kW/rack: immersion (single- or two-phase) or advanced DLC hybrids.

These bands align with vendor- and practitioner-reported ranges, including 2024–2026 reporting on RDHx capacities and the rise of direct-to-chip DLC in AI racks, where warm-water operation supports higher efficiency. For method ranges and practice insights, see the ColdLogik RDHx knowledge hub (2024), the Supermicro RDHx explainer (2025), and Lenovo’s Neptune cooling standards reference (2024).
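The bands above can be expressed as a small lookup for first-pass screening. This is a minimal sketch, not a sizing tool: the function name `shortlist_method` is hypothetical, and the hard cut-offs at 20, 50, and 100 kW are an assumption that turns the guide's approximate ("~") ranges into exact boundaries. Climate and facility constraints still need to be applied afterwards.

```python
def shortlist_method(rack_kw: float) -> str:
    """Map a sustained rack load (kW) to a provisional cooling method.

    Thresholds mirror the approximate density bands in this guide;
    treating them as exact boundaries is a simplifying assumption.
    """
    if rack_kw <= 0:
        raise ValueError("rack_kw must be positive")
    if rack_kw <= 20:
        return "optimized air + containment"
    if rack_kw <= 50:
        return "RDHx"
    if rack_kw <= 100:
        return "DLC (cold plates)"
    return "immersion or advanced DLC hybrid"


if __name__ == "__main__":
    # Illustrative rack loads only, not measured data.
    for kw in (12, 35, 70, 130):
        print(f"{kw} kW/rack -> {shortlist_method(kw)}")
```

Racks that sit near a boundary (for example 48–52 kW) are better treated as candidates for both adjacent methods until the climate and facility checks are done.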

Map climate and economization

Cooling choice depends on how many hours you can operate with minimal compression. ASHRAE 90.4 requires 8760‑hour weather modeling to evaluate economizer impacts; that same discipline should guide selection. In cold/temperate climates, air-side economization and optimized air systems can carry ≤20 kW/rack much of the year. In hot–dry regions, DLC with warm-water loops (e.g., ~35–45 °C supply) pairs well with dry coolers to maximize economizer hours. In hot–humid climates, closed-loop DLC with dew-point control is often preferable because humidity constrains air-side operation. See ASHRAE 90.4-2022 Addendum g (approved 2024) and the DOE 2024 Best Practices guide for modeling rationale and setpoint strategies.
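To make the economizer comparison concrete, the sketch below counts the hours in which a dry cooler alone could meet a given facility-water supply setpoint. It assumes a fixed approach temperature between ambient dry bulb and supply water (the 8 K value is a placeholder, not a vendor figure), and the short temperature list is illustrative only. A real study would pass all 8760 hourly dry-bulb values from the site's weather file.

```python
from typing import Iterable


def dry_cooler_hours(dry_bulb_c: Iterable[float],
                     supply_c: float,
                     approach_k: float) -> int:
    """Count hours where ambient dry bulb is low enough for dry cooling.

    An hour qualifies when dry_bulb <= supply setpoint - approach.
    A constant approach is a simplification; real dry coolers vary
    with load and fan speed.
    """
    if approach_k < 0:
        raise ValueError("approach_k must be non-negative")
    limit_c = supply_c - approach_k
    return sum(1 for t in dry_bulb_c if t <= limit_c)


if __name__ == "__main__":
    # Illustrative hourly dry-bulb samples in degC (NOT real weather data).
    sample_hours = [5.0, 12.0, 18.0, 24.0, 29.0, 33.0, 36.0, 41.0]
    for supply in (30.0, 40.0):
        hours = dry_cooler_hours(sample_hours, supply, approach_k=8.0)
        print(f"supply {supply:.0f} degC -> {hours} of {len(sample_hours)} hours")
```

With these sample values, raising the supply setpoint from 30 °C to 40 °C moves the count from 3 to 5 qualifying hours, which is the mechanism behind the warm-water recommendation for hot–dry regions.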

Assess facility constraints and risk

Existing buildings dictate what is possible at reasonable cost and risk. Test for: structural loads and rear clearance (RDHx), water routing paths and plate heat-exchanger locations (DLC), power and UPS headroom for pumps/fans, and operations workflows (leak detection, water quality, serviceability). Your risk appetite (e.g., tolerance for liquid at the rack level) and staffing model will also influence selection. Here’s the deal: choosing AI data center cooling without an operations plan almost always backfires at commissioning—bake O&M constraints into the design from day one.

Cooling methods for AI data center cooling

Optimized air with containment

Optimized air remains effective where rack density is modest and climates provide air-side economizer hours. With full hot- or cold-aisle containment, balanced perforated tile layouts (if raised floor), and coil setpoints near the upper end of ASHRAE’s recommended inlet range, many facilities support ~10–20 kW/rack reliably. The DOE (2024) highlights that warmer supply temperatures extend economizer windows without violating equipment recommendations, provided controls maintain even inlet conditions and appropriate dew points. See: U.S. DOE Best Practices (2024) and this engineering overview of TC 9.9’s influence on design practice from Consulting‑Specifying Engineer (2025).

Transition triggers from air to at-rack solutions include persistent hotspots beyond airflow tuning, sustained racks >20 kW, or economizer hours that shrink due to climate—leading to higher fan/compressor energy. For planners comparing AI data center cooling options, this is the point at which RDHx or DLC typically enters the discussion.

Rear-door heat exchangers (RDHx)

RDHx removes heat at the rack exhaust using a water-cooled door (passive or active). It is a strong retrofit path for ~20–50 kW/rack because it captures heat early without fully re-plumbing servers. Reported capacities vary by design, but recent vendor and practitioner materials commonly cite tens of kW per rack, with active doors pushing higher in suitable conditions. For capacity commentary and deployment patterns, see: ColdLogik RDHx hub (2024) and Supermicro’s RDHx explainer (2025).

Direct liquid cooling (cold plates)

DLC transports heat from CPUs/GPUs to facility water via cold plates, manifolds, and a rack- or row-level coolant distribution unit (CDU). For AI racks in 2024–2026, DLC is frequently the primary choice at ~50–100+ kW/rack. Warm‑water operation (often ~35–45 °C supply, ΔT ≈ 8–12 °C depending on design) increases dry‑cooler hours and can raise return temperatures to enable heat‑reuse projects. Lenovo’s Neptune materials and server datasheets describe warm‑water envelopes and high liquid heat capture fractions, with hybrids (DLC + RDHx) approaching whole‑rack capture: see Lenovo Neptune standards (2024) and example platform datasheets such as ThinkSystem SC750 V4 (2025).
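The supply temperature and ΔT figures translate into coolant flow through the sensible-heat relation Q = ṁ · c_p · ΔT. The sketch below applies it for plain water. The property values (c_p ≈ 4.18 kJ/kg·K, density ≈ 0.992 kg/L near 40 °C) are assumptions, and glycol mixtures or vendor coolants need their own values. It also assumes the full stated load is captured by liquid, which real racks only approach.

```python
def coolant_flow_lpm(heat_kw: float,
                     delta_t_k: float,
                     cp_kj_per_kg_k: float = 4.18,
                     density_kg_per_l: float = 0.992) -> float:
    """Volumetric flow (L/min) needed to carry heat_kw at a given delta-T.

    Uses Q = m_dot * cp * delta_T. Defaults approximate water near 40 degC;
    they are assumptions, not vendor data.
    """
    if heat_kw < 0:
        raise ValueError("heat_kw must be non-negative")
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_s = heat_kw / (cp_kj_per_kg_k * delta_t_k)  # kW = kJ/s
    return mass_flow_kg_s / density_kg_per_l * 60.0


if __name__ == "__main__":
    # Hypothetical 80 kW liquid-captured load at the band's delta-T limits.
    for dt in (8.0, 10.0, 12.0):
        print(f"dT = {dt:.0f} K -> {coolant_flow_lpm(80.0, dt):.1f} L/min")
```

For an 80 kW load at ΔT = 10 K this gives roughly 116 L/min. Flow scales inversely with ΔT, so a tighter ΔT raises pump and pipe sizing.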

Immersion cooling (1‑ and 2‑phase)

Immersion submerges servers in a dielectric fluid (single‑phase or two‑phase). It suits extreme densities (>100–150+ kW/rack‑equivalent) or specialized environments. Trade‑offs include material compatibility, service workflows, and vendor ecosystem maturity. For neutral context on adoption considerations, see this analyst overview from RedChalk Partners (2025).

Retrofit vs greenfield

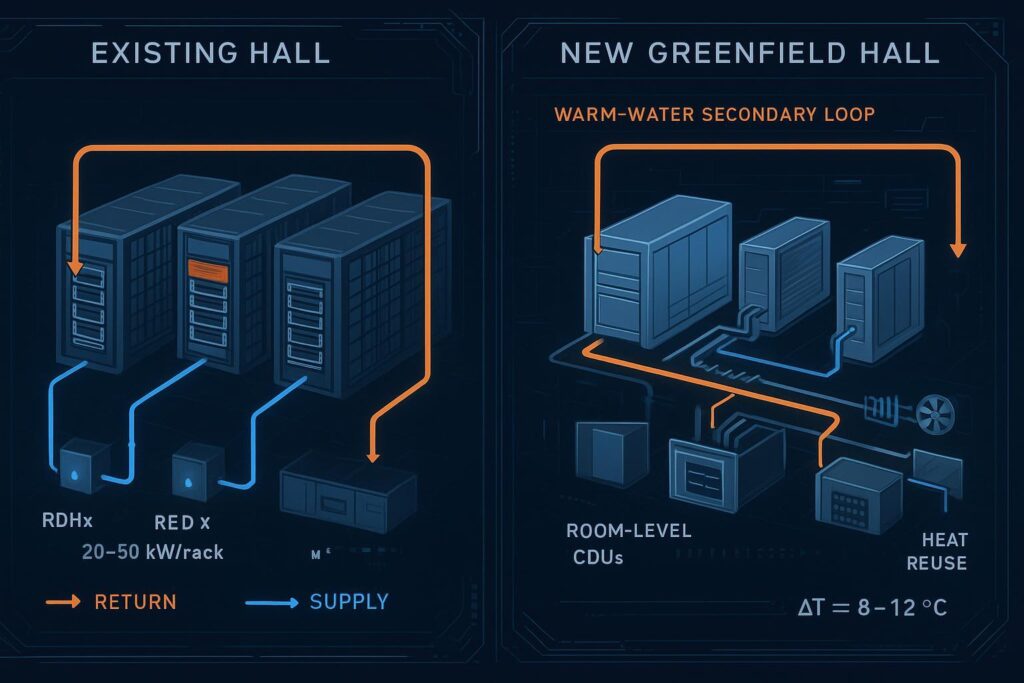

Phased retrofit paths (RDHx and hybrids)

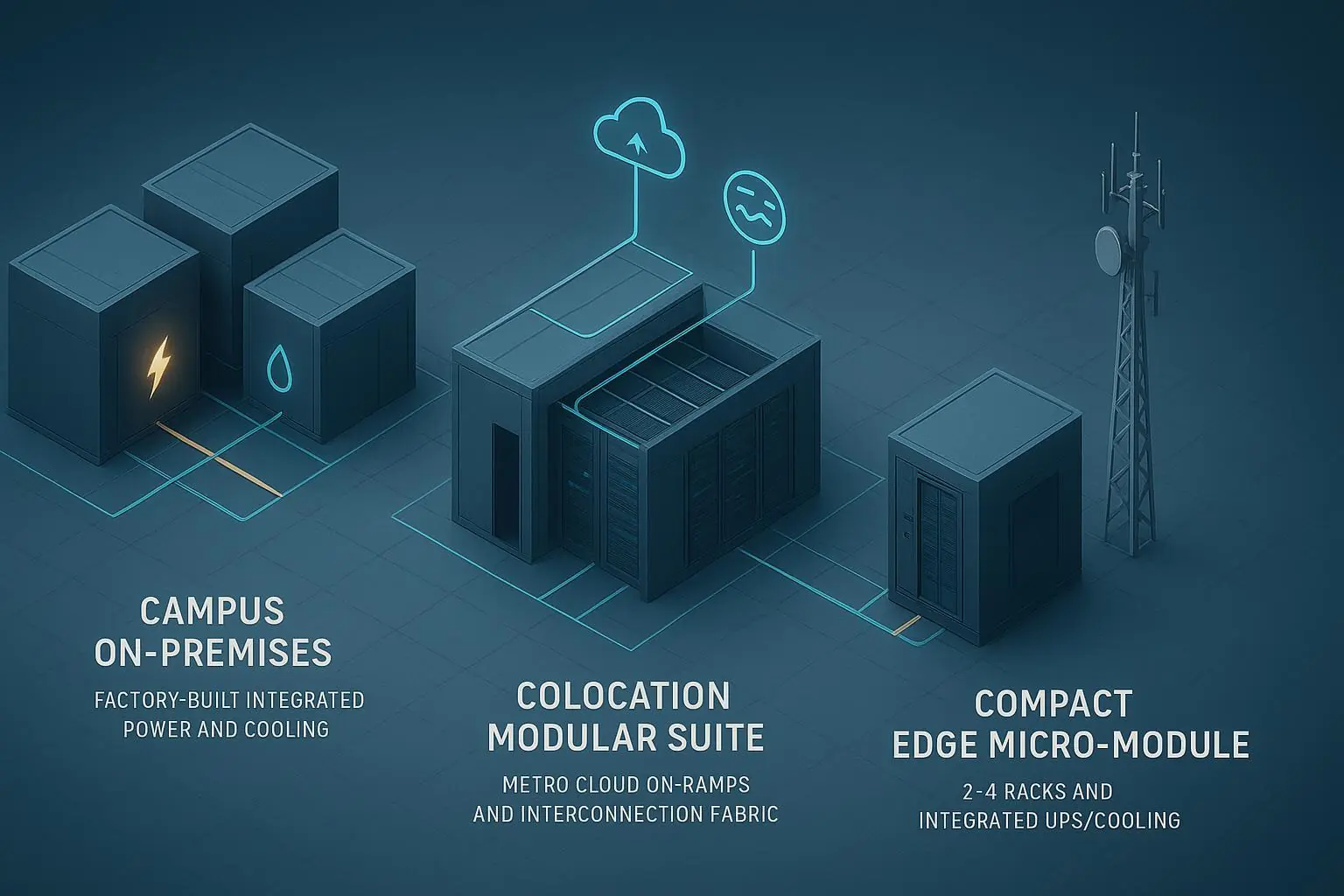

In brownfield halls with mixed densities, a common pattern is to retain optimized air for ≤15–20 kW/rack zones, deploy RDHx for 20–50 kW rows, and introduce selective DLC for AI racks crossing ~50 kW. Validate floor loading and rear clearances for doors, and plan water routing (e.g., row- or rack‑level CDUs) with isolation valves, dripless quick disconnects, and leak detection interlocks.

DLC‑first greenfield patterns

For new AI‑led halls targeting ~50–100+ kW/rack, DLC‑first with a warm‑water secondary loop is now standard. Typical elements include room or row CDUs, supply manifolds with flow balancing at each rack, elevated supply temperatures to maximize dry‑cooler hours, and a control layer that coordinates fan/pump energy with IT load. Position plate heat exchangers and filtration for easy maintenance and water quality management.

When immersion fits (modules/extreme density)

Immersion becomes attractive for very high density, uniform workloads, or when environmental constraints (e.g., dust, altitude, acoustics) favor sealed tanks. It also fits modular deployments or edge sites that benefit from rack/tank‑level isolation. Plan for fluid lifecycle, service ergonomics, and training.

Related reading on modular deployment patterns: see Coolnet’s MetaRow Modular Data Center overview.

KPIs and sustainability for AI data center cooling

Set targets: PUE, WUE, and thermal classes

Define KPI targets early and make method selection serve those targets. Many modern facilities report PUE near ~1.1–1.2 in suitable climates. DOE 2024 treats PUE as the primary efficiency KPI, with WUE expressing water impacts. Thermal classes (per ASHRAE TC 9.9) govern safe inlet conditions; design your controls to keep equipment within the recommended envelope while maximizing efficiency. For definitions and context, see U.S. DOE Best Practices (2024) and Consulting‑Specifying Engineer’s 2025 explainer.

| Method | Typical density band | PUE impact (directional) | WUE impact (directional) | Thermal class notes | Economizer/heat reuse notes |
|---|---|---|---|---|---|
| Optimized air + containment | ~10–20 kW/rack | Good in cold/mild climates; fan/compressor energy rises as density/climate tighten | Low water if dry economizers; can rise with evaporative/adiabatic | Keep inlets within ASHRAE recommended 18–27 °C | Air‑side economizer hours strong in cold/mild zones |
| RDHx | ~20–50 kW/rack | Improves capture efficiency vs room air; lowers fan energy in room | Typically low; depends on heat rejection choice | Maintains air‑cooled IT within envelope by removing exhaust heat | Works with dry coolers; hybridizable with DLC for hotspots |
| DLC (cold plates) | ~50–100+ kW/rack | Often best path to low PUE at high density via warm‑water loops | Very low with dry coolers; avoid towers to cut WUE | Liquid classes and adjacent air conditions apply; follow vendor specs | Warm water increases dry hours and heat‑reuse potential |
| Immersion | >100–150+ kW/rack‑equiv. | High capture; system PUE depends on pumps/heat rejection | Low if dry rejection; fluid lifecycle factors apply | Vendor‑specific envelopes; outside standard air classes | Suits modular blocks; high return temps aid reuse |

Energy–water trade‑offs by method

Air systems can be capital‑efficient but rely on fans and, in hot/humid climates, mechanical cooling. RDHx reduces room fan energy by capturing exhaust heat. DLC minimizes compressor hours and expands dry‑cooler viability, driving down both PUE and WUE in many locations. Immersion can achieve excellent thermal control but brings fluid management considerations. When evaluating AI data center cooling, document both energy and water implications in the basis‑of‑design.
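When documenting energy and water implications, it helps to compute both KPIs from the same annual totals. The sketch below uses the standard definitions: PUE is total facility energy divided by IT energy, and WUE is site water use in liters divided by IT energy in kWh. The annual figures are hypothetical placeholders, not results for any cooling method.

```python
def pue(total_facility_kwh: float, it_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT energy."""
    if it_kwh <= 0:
        raise ValueError("it_kwh must be positive")
    if total_facility_kwh < it_kwh:
        raise ValueError("total facility energy cannot be below IT energy")
    return total_facility_kwh / it_kwh


def wue(site_water_liters: float, it_kwh: float) -> float:
    """Water usage effectiveness in L/kWh: site water use / IT energy."""
    if it_kwh <= 0:
        raise ValueError("it_kwh must be positive")
    if site_water_liters < 0:
        raise ValueError("site_water_liters must be non-negative")
    return site_water_liters / it_kwh


if __name__ == "__main__":
    # Hypothetical annual totals for one candidate design.
    it_energy = 10_000_000.0      # kWh/year delivered to IT
    facility = 11_500_000.0       # kWh/year at the utility meter
    water = 2_000_000.0           # L/year of site water use
    print(f"PUE = {pue(facility, it_energy):.2f}")
    print(f"WUE = {wue(water, it_energy):.2f} L/kWh")
```

Running the same two functions on the 8760-hour model output for each shortlisted method gives a like-for-like comparison to record in the basis-of-design.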

Heat reuse and economizer hours

Raising supply and return temperatures increases both economizer hours and the feasibility of waste‑heat projects. Industry practice shows dry‑cooler operation is favored when facility supply is roughly in the high‑20s to low‑30s °C with adequate approach to ambient dry bulb; warm‑water DLC pushing returns into the 40s °C further supports heat pumps and district‑heating tie‑ins. See this discussion on hot‑ vs. cold‑water strategies from DatacenterDynamics (2024).

Implementation roadmap

MEP integration: water temps, CDUs, manifolds

For AI racks trending to DLC, specify a warm‑water secondary loop (e.g., ~35–45 °C supply, ΔT ≈ 8–12 °C subject to vendor envelopes). Select room or row CDUs with N+1 pump redundancy sized for full‑load flow and head, and include filtration, water treatment compatible with materials, and stainless plate heat exchangers. Balance manifolds per rack, provide dripless quick disconnects, and instrument branch ΔP and flow for each rack. Controls should coordinate IT load, pump speed, valve positions, and any air‑adjacent fans to minimize total kW.
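The N+1 pump requirement can be checked with a few lines of arithmetic once per-rack flows are known. This sketch sums the rack flows, applies a design margin, and returns the duty and installed pump counts. The 10% margin and the pump capacity used in the example are assumptions for illustration, and the check covers flow only: head still has to be verified against the pump curve at the design point.

```python
import math
from typing import Sequence, Tuple


def n_plus_one_pumps(rack_flows_lpm: Sequence[float],
                     pump_capacity_lpm: float,
                     margin: float = 0.10) -> Tuple[float, int, int]:
    """Return (design_flow_lpm, duty_pumps, installed_pumps) for N+1.

    design flow = sum of rack flows * (1 + margin); duty pumps cover the
    design flow; one extra identical pump provides the +1 redundancy.
    The margin default is an assumption, not a standard value.
    """
    if pump_capacity_lpm <= 0:
        raise ValueError("pump_capacity_lpm must be positive")
    if margin < 0:
        raise ValueError("margin must be non-negative")
    design_flow = sum(rack_flows_lpm) * (1.0 + margin)
    duty = max(1, math.ceil(design_flow / pump_capacity_lpm))
    return design_flow, duty, duty + 1


if __name__ == "__main__":
    # Hypothetical row: ten racks at 100 L/min each, 600 L/min pumps.
    flow, duty, installed = n_plus_one_pumps([100.0] * 10, 600.0)
    print(f"design flow {flow:.0f} L/min, duty {duty}, installed {installed}")
```

In this example the row needs about 1100 L/min, which two 600 L/min pumps can cover, so three pumps are installed.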

When illustrating how commercial components fit together, it’s reasonable to reference vendor ecosystems. For example, cold‑plate solutions from Coolnet can be used to connect rack‑level cold plates to a warm‑water CDU and manifolds in a closed secondary loop. Use this type of reference as a design pattern, then validate against your platform vendors’ exact hydraulic and temperature limits. If your deployment is modular, you may also compare patterns shown in Coolnet MetaRow Modular Data Center to inform space and integration planning.

Operations: monitoring, leak detection, maintenance

Instrument at the rack and branch levels: temperature (supply/return), pressure, and flow. Add moisture/leak sensors in trays and at quick disconnects; interlock to close valves and reduce pump speed on detection. Maintain water quality with periodic sampling and filter changes per hours of operation or ΔP. For RDHx, include door maintenance access and rear clearance checks; for immersion, define fluid cleanliness, material compatibility checks, and in‑tank service SOPs. Staff training should cover emergency isolation, draining, and refilling procedures. Think of it this way: fast, reliable isolation is your safety net—test it.
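The leak interlock described above can be written down as a pure state transition, which makes it easy to review and to test before it is implemented in a BMS or PLC. The sketch below is illustrative logic only: the `BranchState` fields and the 30% safe pump speed are hypothetical, and real interlocks must follow the CDU vendor's control sequence.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BranchState:
    """Snapshot of one coolant branch (hypothetical field names)."""
    leak_detected: bool
    valve_open: bool
    pump_speed_pct: float


def apply_leak_interlock(state: BranchState,
                         safe_pump_speed_pct: float = 30.0) -> BranchState:
    """Close the isolation valve and cap pump speed when a leak is detected.

    With no leak, the state is returned unchanged. The safe-speed default
    is an assumed placeholder, not a vendor setting.
    """
    if not state.leak_detected:
        return state
    return BranchState(
        leak_detected=True,
        valve_open=False,
        pump_speed_pct=min(state.pump_speed_pct, safe_pump_speed_pct),
    )


if __name__ == "__main__":
    normal = BranchState(leak_detected=False, valve_open=True, pump_speed_pct=80.0)
    leaking = BranchState(leak_detected=True, valve_open=True, pump_speed_pct=80.0)
    print(apply_leak_interlock(normal))
    print(apply_leak_interlock(leaking))
```

Keeping the rule as a function of state, with no I/O, means the same cases can be replayed during commissioning to confirm the installed system responds as designed.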

Example decision path for mixed‑density halls

Consider a 2 MW hall with a mix of 12–18 kW legacy racks and new AI rows at 35–70 kW:

Keep legacy rows on optimized air with strict containment and elevated inlet setpoints within ASHRAE recommendations to extend economizer hours.

For 35–50 kW AI rows, deploy RDHx tied to a row‑level CDU, ensuring rear clearance, isolation valves, and leak detection.

For 50–70 kW AI rows, implement DLC with a warm‑water secondary loop. Use room CDUs feeding manifolds per rack, with dripless QDs and branch flow balancing. If residual heat remains in the rack, pair DLC with active RDHx to capture near‑100% of the thermal load.

Run 8760‑hour models to compare dry‑cooler hours under your local TMY3 weather file. Document expected PUE and WUE ranges in the basis‑of‑design and set acceptance criteria for commissioning (e.g., branch flow accuracy, ΔT stability, and leak‑response interlocks). This step cements the AI data center cooling choice in measurable terms.
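Acceptance criteria are easier to enforce when they are written as explicit checks. The sketch below tests one branch against two of the criteria named above: flow accuracy relative to design and ΔT stability over a sampling window. The tolerances (±5% flow, 1 K standard deviation) and the sample readings are assumptions for illustration. Set the real values in the commissioning plan.

```python
from statistics import pstdev
from typing import Sequence


def branch_passes(design_flow_lpm: float,
                  measured_flow_lpm: float,
                  delta_t_samples_k: Sequence[float],
                  flow_tol: float = 0.05,
                  max_dt_stdev_k: float = 1.0) -> bool:
    """Check one coolant branch against flow and delta-T stability limits.

    flow_tol is the allowed fractional deviation from design flow;
    max_dt_stdev_k bounds the population standard deviation of delta-T.
    Both defaults are assumed placeholders.
    """
    if design_flow_lpm <= 0:
        raise ValueError("design_flow_lpm must be positive")
    if not delta_t_samples_k:
        raise ValueError("at least one delta-T sample is required")
    flow_error = abs(measured_flow_lpm - design_flow_lpm) / design_flow_lpm
    dt_spread = pstdev(delta_t_samples_k)
    return flow_error <= flow_tol and dt_spread <= max_dt_stdev_k


if __name__ == "__main__":
    # Hypothetical readings for two branches with a 115 L/min design flow.
    steady = [9.8, 10.1, 10.0, 10.3]
    print("branch A:", branch_passes(115.0, 112.0, steady))
    print("branch B:", branch_passes(115.0, 100.0, steady))
```

Branch A is within 5% of design flow with a stable ΔT and passes. Branch B is about 13% low on flow and fails, which would trigger rebalancing before handover.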

Conclusion

Selecting the right AI data center cooling approach is a matter of matching rack density and heat flux to climate‑appropriate methods, then integrating MEP systems and controls for safety and efficiency. For 10–20 kW/rack, optimized air with robust containment can remain effective—especially in colder climates. RDHx is a proven bridge for 20–50 kW/rack retrofits. From ~50–100+ kW/rack, DLC with warm‑water loops is the default path, while immersion serves extreme densities and special environments. Anchor your plan in ASHRAE/DOE guidance and 8760‑hour modeling, and you’ll reach a solution that’s efficient, maintainable, and future‑proof.

Immediate next steps and checklist:

Define rack‑level densities and heat‑flux profiles; assign provisional methods per band.

Model economizer hours and WUE/PUE impacts (8760, TMY3) for shortlisted methods.

Validate facility constraints: water routing, structural loads, electrical headroom, and O&M workflows.

Set KPI targets (PUE, WUE, inlet envelopes) and commissioning acceptance criteria.

Shortlist vendors and component ecosystems for CDUs, manifolds, and monitoring; align with server OEM envelopes.

For readers who want to study component integration patterns further, vendor resources such as Coolnet’s cold‑plate liquid cooling overview provide additional product‑level context you can map to your schematic design.

References and further reading (selected):

Definitions and best practices on inlet temps, economizers, and KPIs from the U.S. DOE Best Practices Guide (2024).

Current standards context: ASHRAE’s Data Center Resources hub announcement (2024).

Air temperature guidance context and how TC 9.9 shapes practice: Consulting‑Specifying Engineer (2025).

RDHx overview notes: ColdLogik knowledge hub (2024) and Supermicro RDHx explainer (2025).

Warm‑water DLC examples and envelopes: Lenovo Neptune standards (2024) and ThinkSystem SC750 V4 (2025).

Economizer/heat‑reuse discussion: DatacenterDynamics on hot vs. cold water (2024).