ASHRAE TC 9.9 is one of the most referenced sources for defining the environmental envelope that typical IT equipment is designed to tolerate. It helps you answer, in a defensible way: “What inlet temperatures and moisture limits are we designing and operating to?”

Two important boundaries upfront:

TC 9.9 describes envelopes and classes. It does not mandate a specific cooling technology.

Your compliance point is generally at the IT equipment (ITE) air inlet, not at an arbitrary point in the room. ASHRAE calls out ITE inlets as the common measurement point in its thermal guidelines (see the 2011 guidance PDF: ASHRAE TC 9.9 Thermal Guidelines for Data Processing Environments).


ASHRAE TC 9.9 server room temperature and humidity: the minimum you need to implement

If you do only one thing after reading this guide, make it this: pick an A-class assumption (A1–A4), then set inlet-based targets and alarms that keep you inside the recommended envelope most of the time and warn you early when you are drifting toward the allowable boundary.

Step 1: Know what “recommended” vs “allowable” really means

ASHRAE TC 9.9 defines two envelopes:

Recommended: where most operators try to run most of the time for reliability and operational stability.

Allowable: a wider boundary that varies by class (A1–A4). It is commonly used to understand tolerances for economizer hours and excursions, but it is not the same as a day-to-day operating target.

If you need a plain-English explanation for internal stakeholders, Upsite’s summary is a useful companion: Upsite’s “What is the difference between ASHRAE’s recommended and allowable environmental limits?” (2019).

Key Takeaway: Treat “allowable” as a boundary with conditions, not as permission to set your controls at the edge.

Step 2: Use the TC 9.9 tables to pick a class and targets (A1–A4)

ASHRAE’s Thermal Guidelines reference card is the easiest way to work from the same numbers across teams. For A1–A4 classes, the reference card shows the recommended envelope and the allowable envelopes by class. See: ASHRAE “Thermal Guidelines” reference card (2021, updated 2024). (Later sections refer back to “the ASHRAE reference card” without repeating the link.)

Quick summary: temperature envelopes

| Envelope / class | How to use it operationally | Temperature (dry-bulb) |
|---|---|---|
| Recommended (A1–A4) | A common day-to-day target band for many air-cooled IT environments | 18–27°C |
| Allowable A1 | Narrowest allowable band | 15–32°C |
| Allowable A2 | Broader allowable band | 10–35°C |
| Allowable A3 | Extended temperature class | 5–40°C |
| Allowable A4 | Widest allowable band | 5–45°C |

The numbers above are summarized from the ASHRAE reference card cited earlier. If you want a second framing from an operator-facing source, Envigilance provides a readable overview: “ASHRAE TC 9.9 data center thermal guide” (2026).
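To make the table concrete, here is a minimal sketch that classifies a single IT-inlet dry-bulb reading against the recommended band and the allowable band for a chosen class. It assumes the temperature values from the table above and deliberately ignores humidity, which the real envelopes also constrain; the function and constant names are illustrative, not from any ASHRAE tool.

```python
# Dry-bulb inlet envelopes in deg C, transcribed from the summary table above.
# Humidity limits are intentionally NOT modeled in this sketch.
RECOMMENDED_C = (18.0, 27.0)
ALLOWABLE_C = {
    "A1": (15.0, 32.0),
    "A2": (10.0, 35.0),
    "A3": (5.0, 40.0),
    "A4": (5.0, 45.0),
}


def classify_inlet_temp(temp_c: float, ashrae_class: str) -> str:
    """Classify one IT-inlet dry-bulb reading (temperature only)."""
    rec_low, rec_high = RECOMMENDED_C
    if rec_low <= temp_c <= rec_high:
        return "recommended"
    allow_low, allow_high = ALLOWABLE_C[ashrae_class]
    if allow_low <= temp_c <= allow_high:
        return "allowable"
    return "outside_class"


if __name__ == "__main__":
    for reading in (22.0, 30.5, 33.0):
        print(reading, classify_inlet_temp(reading, "A1"))
```

With class A1, 22.0°C falls in the recommended band, 30.5°C is allowable, and 33.0°C is outside the class; the same 33.0°C reading would still be allowable under A2, which is why the class assumption has to be written down before the alarms are.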

Quick summary: humidity envelopes (use dew point, not RH alone)

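TC 9.9 expresses humidity limits using dew point plus RH limits. That is deliberate: RH is relative to the current air temperature, so the same RH reading corresponds to more moisture (a higher dew point) in warmer air. RH alone can look “fine” while dew point, and with it condensation risk, is not.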
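If your sensors report temperature and RH rather than dew point directly, you can derive dew point in software. The sketch below uses the Magnus approximation with one commonly published coefficient pair (b = 17.62, c = 243.12 °C); that choice is an assumption on our part, and a direct dew-point sensor or your monitoring vendor's formula may differ slightly.

```python
import math

# Magnus-formula coefficients: a widely used approximation over roughly -45..60 deg C.
MAGNUS_B = 17.62
MAGNUS_C = 243.12


def dew_point_c(temp_c: float, rh_pct: float) -> float:
    """Approximate dew point (deg C) from dry-bulb temperature and relative humidity."""
    if not 0.0 < rh_pct <= 100.0:
        raise ValueError("relative humidity must be in (0, 100]")
    gamma = math.log(rh_pct / 100.0) + MAGNUS_B * temp_c / (MAGNUS_C + temp_c)
    return MAGNUS_C * gamma / (MAGNUS_B - gamma)


if __name__ == "__main__":
    # Same 50% RH at two air temperatures: the moisture content is not the same.
    for temp in (20.0, 27.0):
        print(f"{temp:.1f} C at 50% RH -> dew point {dew_point_c(temp, 50.0):.1f} C")
```

Both readings show "50% RH", yet the dew points come out at approximately 9.3°C and 15.7°C, which is the gap an RH-only alarm would never see.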

For the exact A1–A4 humidity boundaries, use the tables in the same ASHRAE reference card and (for additional operational context) the TC 9.9 power equipment paper: ASHRAE TC 9.9 Data Center Power Equipment Thermal Guidelines (revised 2016).

Pro Tip: In your monitoring and alarming, treat dew point as a first-class signal. Use RH as a secondary view for human readability.

Step 3: Translate classes into an operations policy (what you actually run)

Most teams end up with three layers:

Design assumption (which class you are willing to tolerate for worst-case events)

Normal operating targets (often centered around the recommended envelope)

Control deadband and alarm bands (to avoid constant hunting and alarm fatigue)

A pragmatic approach for many enterprise environments is:

Choose an environmental class based on equipment mix and risk tolerance (A1 or A2 is common for mixed fleets; A3/A4 usually requires more discipline around moisture, filtration, and vendor envelopes).

Run normal setpoints inside the recommended band most of the time.

Use the allowable band as a boundary for excursions, with clear escalation and time limits.
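One way to keep the three layers (design class, operating target, deadband and alarm bands) consistent is to derive them from a single policy object. In this sketch the 22°C setpoint, the ±1°C deadband, and the 2°C margin inside the allowable limit are hypothetical example values, not ASHRAE numbers; the only hard inputs are the temperature envelopes from the table above.

```python
from dataclasses import dataclass

RECOMMENDED_C = (18.0, 27.0)
ALLOWABLE_C = {"A1": (15.0, 32.0), "A2": (10.0, 35.0), "A3": (5.0, 40.0), "A4": (5.0, 45.0)}


@dataclass(frozen=True)
class ThermalPolicy:
    design_class: str                  # layer 1: worst-case class you accept
    setpoint_c: float                  # layer 2: normal operating target
    deadband_c: float                  # layer 3: control deadband (+/- around setpoint)
    warning_c: tuple[float, float]     # layer 3: warning band = recommended envelope
    critical_c: tuple[float, float]    # layer 3: critical band = allowable minus a margin


def build_policy(design_class: str, setpoint_c: float = 22.0,
                 deadband_c: float = 1.0, critical_margin_c: float = 2.0) -> ThermalPolicy:
    """Derive a site policy from a class; default numbers are hypothetical examples."""
    rec_low, rec_high = RECOMMENDED_C
    # The whole control band must sit inside the recommended envelope.
    if not (rec_low <= setpoint_c - deadband_c and setpoint_c + deadband_c <= rec_high):
        raise ValueError("setpoint +/- deadband falls outside the recommended envelope")
    allow_low, allow_high = ALLOWABLE_C[design_class]
    critical = (allow_low + critical_margin_c, allow_high - critical_margin_c)
    # Critical thresholds must stay outside the warning (recommended) band.
    if not (critical[0] < rec_low and critical[1] > rec_high):
        raise ValueError("critical margin is too large for this class")
    return ThermalPolicy(design_class, setpoint_c, deadband_c, RECOMMENDED_C, critical)


if __name__ == "__main__":
    print(build_policy("A2"))
```

For A2 with the defaults, the critical thresholds land at 12°C and 33°C. The validation checks are the point of the design: a setpoint that drifts toward the recommended edge, or a margin that squeezes the critical band inside the warning band, fails loudly instead of quietly producing an incoherent alarm policy.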

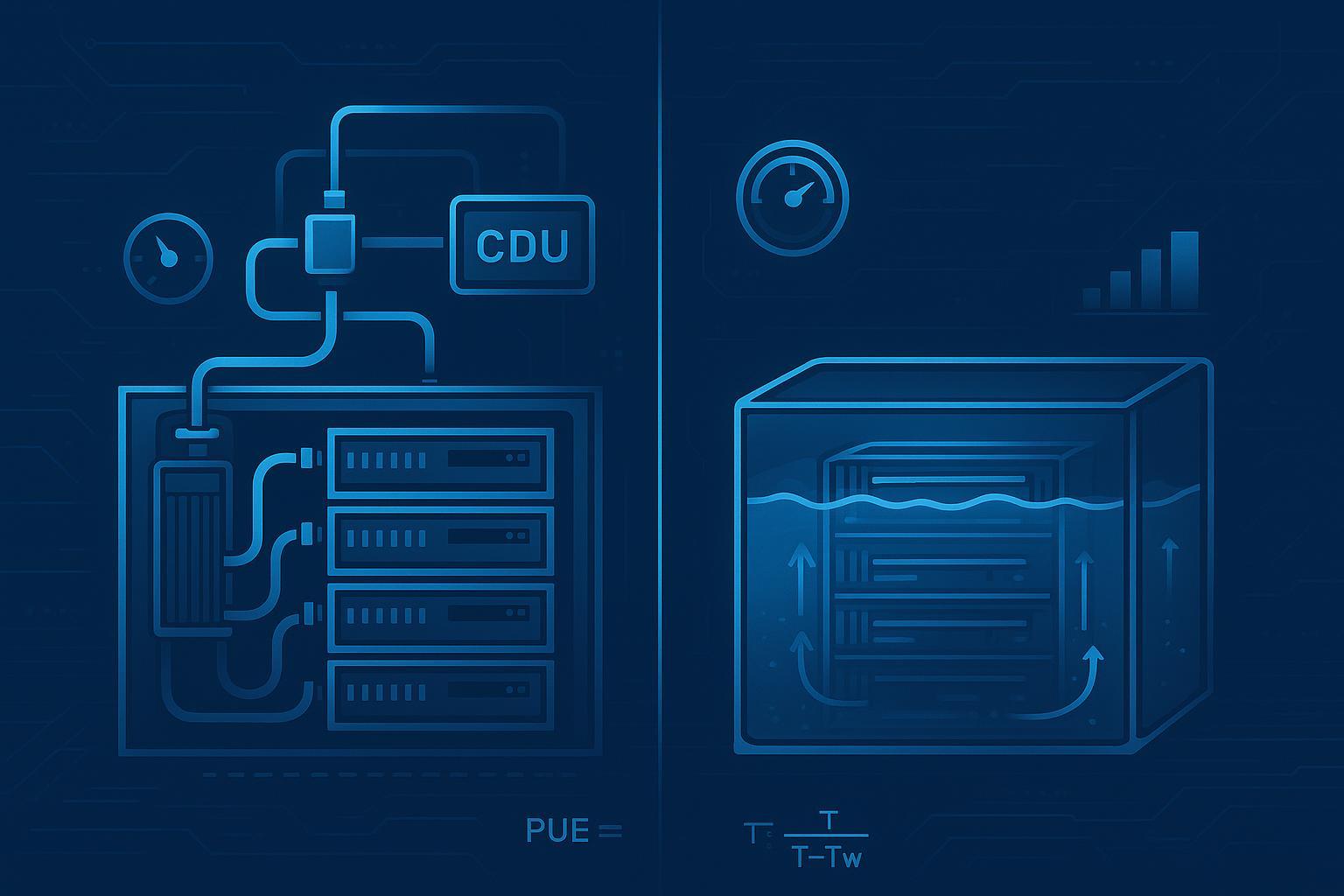

Do these ranges mandate “precision cooling” choices?

No. TC 9.9 does not tell you to buy a specific cooling product. It tells you what inlet conditions your environment should achieve.

Cooling choices depend on:

heat density and airflow management (containment, bypass air)

redundancy and controllability requirements

whether you plan to use economization

how tight your control requirements really are (and why)

If you want a neutral explanation of why room HVAC logic often fails in server rooms, this background article can help: Precision vs. comfort cooling for server rooms.

Step 4: Build a sensor plan that actually validates compliance

What to measure

At minimum:

Temperature (dry-bulb) at the IT inlet

Humidity with dew point calculation (from temp + RH sensor, or direct DP)

If you are using economization or have large seasonal swings, also track:

supply/return temperatures at the cooling units

outdoor air conditions (if applicable) so you can predict boundary risk

Where to place sensors (practical baseline)

You want enough resolution to catch the failure modes you actually see:

Rack inlets: top, middle, and bottom are a reasonable baseline to capture vertical stratification.

Hot spots: add sensors where airflow is disrupted (end-of-row, near doors, known bypass areas).

Containment boundaries: if using cold-aisle containment, monitor inside the cold aisle; treat the hot aisle as a separate zone.

Vendor DCIM guidance that references this rack-level monitoring approach is common (example: Sunbird on following ASHRAE TC 9.9 thermal guidelines). Use it as implementation inspiration, but anchor your policy to ASHRAE and your equipment envelopes.
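With top, middle, and bottom inlet sensors per rack, the value to compare against your envelope is usually the hottest of the three, and the top-to-bottom spread is a rough indicator of stratification. The sketch below assumes readings arrive as a simple dictionary; the rack IDs and temperatures are hypothetical.

```python
from typing import Dict, NamedTuple


class RackInletSummary(NamedTuple):
    worst_position: str   # which inlet sensor was hottest
    worst_temp_c: float   # the reading to compare against your envelope
    spread_c: float       # hottest minus coldest: a rough stratification indicator


def summarize_racks(readings: Dict[str, Dict[str, float]]) -> Dict[str, RackInletSummary]:
    """readings maps a rack ID to {sensor position: inlet temperature in deg C}."""
    summary = {}
    for rack_id, by_position in readings.items():
        worst_position = max(by_position, key=by_position.get)
        temps = by_position.values()
        summary[rack_id] = RackInletSummary(
            worst_position, by_position[worst_position], max(temps) - min(temps)
        )
    return summary


if __name__ == "__main__":
    # Hypothetical rack IDs and readings, for illustration only.
    readings = {
        "R01": {"top": 26.8, "middle": 23.1, "bottom": 21.0},
        "R02": {"top": 23.5, "middle": 22.9, "bottom": 22.4},
    }
    for rack_id, s in summarize_racks(readings).items():
        print(rack_id, s.worst_position, s.worst_temp_c, round(s.spread_c, 1))
```

In this example R01's bottom sensor reads a comfortable 21.0°C while its top inlet is at 26.8°C, close to the recommended upper limit, with a 5.8°C spread. A single mid-height or room sensor would miss exactly that.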

Step 5: Set alarms that reflect recommended vs allowable (and reduce noise)

ASHRAE provides the envelopes. Your site needs an alarm policy that turns those envelopes into actions.

A simple pattern that works in practice:

Warning alarms at or near the recommended boundaries (action: investigate, trend, adjust controls)

Critical alarms approaching the allowable boundaries (action: immediate response, load shedding where applicable, change control escalation)
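The two-tier pattern above can be sketched as a small evaluator with a persistence filter: a tier is raised only after a condition holds for several consecutive readings, so a one-minute spike does not page anyone. The default bands (recommended envelope for warning; a hypothetical 12/33°C critical band, i.e. A2 minus a 2°C margin) and the sample count are illustrative assumptions to replace with your own policy.

```python
class TwoTierAlarm:
    """Two-tier inlet alarm with a persistence (time-delay) filter."""

    def __init__(self, warning_c=(18.0, 27.0), critical_c=(12.0, 33.0), delay_samples=5):
        self.warning_c = warning_c        # recommended envelope
        self.critical_c = critical_c      # hypothetical: allowable limit minus a margin
        self.delay_samples = delay_samples
        self._warn_streak = 0
        self._crit_streak = 0

    def _raw_level(self, temp_c: float) -> int:
        if temp_c <= self.critical_c[0] or temp_c >= self.critical_c[1]:
            return 2
        if temp_c < self.warning_c[0] or temp_c > self.warning_c[1]:
            return 1
        return 0

    def update(self, temp_c: float) -> str:
        """Feed one reading; return 'ok', 'warning', or 'critical'."""
        level = self._raw_level(temp_c)
        # Count consecutive readings at or above each tier; any recovery resets the streak.
        self._warn_streak = self._warn_streak + 1 if level >= 1 else 0
        self._crit_streak = self._crit_streak + 1 if level >= 2 else 0
        if self._crit_streak >= self.delay_samples:
            return "critical"
        if self._warn_streak >= self.delay_samples:
            return "warning"
        return "ok"


if __name__ == "__main__":
    alarm = TwoTierAlarm(delay_samples=3)
    for reading in [28.0, 22.0, 28.0, 28.0, 28.0, 34.0, 34.0, 34.0]:
        print(reading, alarm.update(reading))
```

The isolated 28.0°C reading at the start never raises a warning because the next reading recovers; three consecutive out-of-band readings do. This sketch clears immediately on recovery; many sites also add a separate clear delay so alarms do not flap at the boundary.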

Example alarm bands (template)

Use the actual class tables from the ASHRAE reference card as the hard limits, then set internal bands that fit your risk tolerance.

| Signal | Warning concept | Critical concept | Notes |
|---|---|---|---|
| IT inlet dry-bulb temperature | Exiting the recommended band | Approaching the allowable limit for your class | Use time delays to avoid one-minute spikes. |
| Dew point | Trending toward condensation risk | Crossing your maximum DP policy | Dew point is often more stable than RH and easier to alarm on. |
| RH (secondary) | Approaching corrosion/ESD risk zones | Outside your policy bounds | RH can swing with temperature; avoid over-triggering. |
| Rate-of-change | Rapid rise even if still “in range” | Sustained fast change | The TC 9.9 ecosystem discusses change limits in its power equipment guidance (see the 2016 white paper cited earlier). |
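A rate-of-change signal can be computed from timestamped inlet readings over a sliding window. This sketch reports the endpoint-to-endpoint slope in °C per hour over a hypothetical 15-minute window; it does not encode any TC 9.9 change limit, so compare the result against whatever limit your policy adopts from the guidance. It is also sensitive to sensor noise, so a production version might smooth or fit a trend instead.

```python
from collections import deque
from typing import Optional


class RateOfChange:
    """Sliding-window temperature rate of change, in deg C per hour."""

    def __init__(self, window_s: float = 900.0):
        self.window_s = window_s   # hypothetical 15-minute window
        self._samples = deque()    # (timestamp_s, temp_c), oldest first

    def update(self, t_s: float, temp_c: float) -> Optional[float]:
        """Add a reading; return the slope over the window, or None if undefined."""
        self._samples.append((t_s, temp_c))
        # Drop readings that have fallen out of the window.
        while t_s - self._samples[0][0] > self.window_s:
            self._samples.popleft()
        t0, temp0 = self._samples[0]
        if t_s == t0:
            return None  # only one point in the window: no slope yet
        return (temp_c - temp0) / ((t_s - t0) / 3600.0)


if __name__ == "__main__":
    roc = RateOfChange()
    for t, temp in [(0, 22.0), (300, 22.5), (600, 23.0), (1200, 25.0)]:
        print(t, roc.update(t, temp))
```

Every reading in this example is inside the recommended band, yet the final slope is 10°C per hour, which is the "rapid rise even if still in range" case the table describes.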

Key Takeaway: Alarm governance is as important as the numbers. Without time delays, ownership, and escalation rules, you get alarm fatigue and slower response.

Step 6: Tight stability targets (like ±1°C / ±5%RH): when they’re real, and when they’re self-inflicted

Many teams ask for very tight stability because it “feels safer.” Sometimes it is justified (e.g., sensitive adjacent equipment, poor airflow design that amplifies small changes). Often it is a substitute for fixing airflow distribution.

If you are trying to hold tight stability:

Validate that the requirement is at the ITE inlet, not a room sensor.

Fix airflow management first (containment, blanking panels, sealing bypass).

Use a control deadband that avoids constant hunting.
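The effect of a deadband is easiest to see with a simplified on/off stage. This is an illustrative hysteresis sketch, not how a modulating CRAH/CRAC controller actually works: the stage turns on above setpoint plus deadband, off below setpoint minus deadband, and holds its previous state in between. The setpoint, deadband, and readings are hypothetical.

```python
from typing import List


def deadband_control(temps: List[float], setpoint_c: float, deadband_c: float,
                     initially_on: bool = False) -> List[bool]:
    """Return the on/off state of a hypothetical cooling stage for each reading."""
    on = initially_on
    states = []
    for t in temps:
        if t > setpoint_c + deadband_c:
            on = True
        elif t < setpoint_c - deadband_c:
            on = False
        # Inside the deadband: hold the previous state (hysteresis).
        states.append(on)
    return states


def count_switches(states: List[bool]) -> int:
    """Number of on/off transitions, a proxy for short cycling."""
    return sum(1 for a, b in zip(states, states[1:]) if a != b)


if __name__ == "__main__":
    noisy = [22.1, 21.9, 22.2, 21.8, 22.1, 21.9]  # small jitter around a 22 C setpoint
    print("no deadband:", count_switches(deadband_control(noisy, 22.0, 0.0)))
    print("0.5 C deadband:", count_switches(deadband_control(noisy, 22.0, 0.5)))
```

With no deadband, ±0.2°C of jitter toggles the stage five times in six readings; with a 0.5°C deadband it does not switch at all. The trade-off is that the inlet is allowed to wander within the band, which is why the band has to sit comfortably inside the recommended envelope.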

If your site has frequent excursions, the root cause is often one of:

insufficient sensor placement (you are blind to the real inlet conditions)

poor airflow path (bypass/recirculation)

too-aggressive controls (short cycling)

Step 7: When is humidification required?

Humidification is not “always required” for TC 9.9 alignment. It depends on your climate, economizer strategy, and how low your moisture levels get.

Use the TC 9.9 humidity limits (dew point plus RH) from the ASHRAE reference card as your boundary conditions. If you routinely fall below the minimum moisture requirement, you should treat ESD risk as an engineering problem (flooring, grounding, materials, and humidity control).
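To decide whether you "routinely" fall below the moisture minimum, it helps to measure how often it happens. This sketch computes the share of (temperature, RH) samples whose dew point is below a minimum you supply. It reuses the Magnus approximation (an assumed coefficient pair), and the minimum dew point is a required argument because it should come from your class in the ASHRAE reference card; the 0.0°C used in the example is purely illustrative, not an ASHRAE value.

```python
import math
from typing import Iterable, Tuple


def dew_point_c(temp_c: float, rh_pct: float, b: float = 17.62, c: float = 243.12) -> float:
    """Magnus approximation of dew point (deg C); coefficients are an assumed common choice."""
    gamma = math.log(rh_pct / 100.0) + b * temp_c / (c + temp_c)
    return c * gamma / (b - gamma)


def fraction_below_min_dew_point(samples: Iterable[Tuple[float, float]],
                                 min_dew_point_c: float) -> float:
    """Share of (temp_c, rh_pct) samples whose dew point is below the policy minimum."""
    samples = list(samples)
    if not samples:
        return 0.0
    too_dry = sum(1 for t, rh in samples if dew_point_c(t, rh) < min_dew_point_c)
    return too_dry / len(samples)


if __name__ == "__main__":
    # Hypothetical hourly samples at a 22 C inlet during a dry spell.
    hourly = [(22.0, 50.0), (22.0, 10.0), (22.0, 8.0), (22.0, 45.0)]
    print(fraction_below_min_dew_point(hourly, min_dew_point_c=0.0))
```

Tracked over a season, this fraction gives you an evidence-based answer to "do we need humidification?" instead of a reaction to a single dry afternoon.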

For additional context on why humidity guidance is framed the way it is, see the humidity discussion in the TC 9.9 power equipment guidance: ASHRAE’s “Data Center Power Equipment Thermal Guidelines” (revised 2016).

Common mistakes (and how to avoid them)

Confusing room setpoints with inlet compliance

Room sensors can look “in range” while the top of the rack is not. Monitor where the equipment breathes.

Using RH-only alarms

RH alone can mislead. Build dew point into your monitoring.

Treating “allowable” as the operating target

This is how you end up with chronic alerts, ambiguous risk ownership, and warranty arguments.

FAQ (quick answers)

Do the ASHRAE ranges force a specific cooling design?

No. They define inlet condition envelopes. Cooling design is how you achieve those envelopes with your load, layout, redundancy, and economizer strategy.

Should we target the recommended or allowable envelope?

For normal operation, most sites target the recommended envelope and treat allowable as excursion limits. Use the ASHRAE reference card to keep everyone aligned.

Where should we place sensors?

Start with rack inlet sensors (top/middle/bottom) and add coverage at known hot-spot and airflow-disruption locations. Anchor the “why” in ASHRAE’s inlet-measurement concept.

What alarms should we set?

Use a two-tier approach: warning near recommended boundaries and critical near allowable boundaries, with time delays and escalation rules. Include dew point and rate-of-change signals.

Optional appendix: where cooling choices show up

If you need rack/row-level control to manage hot spots and reduce stratification risk, in-row approaches are one common pattern.

If you are evaluating broader strategies for high-density racks and AI loads, see: AI data center cooling ultimate guide.

Next steps

If you want, we can convert the guidance above into a one-page, audit-friendly document your teams can sign off on (targets, sensor map, alarm bands, owners, and escalation).

Request a commissioning checklist template (monitoring points, alarm bands, and acceptance criteria)