If you manage a small server room (or a telecom comms room), it’s common to inherit an “office-style” air conditioner and assume you’re covered. Then the first hot day arrives and you see the pattern: the wall thermostat reads fine, but servers alarm on inlet temperature, or the unit short-cycles, or you discover there are no meaningful alarms at all.

The root issue is simple: server rooms are dominated by sensible heat and continuous duty. Comfort cooling is optimized for people, occupancy schedules, and moisture removal. Precision cooling is optimized for electronics, predictable airflow, and 24/7 environmental stability.

Key Takeaway: Comfort cooling can sometimes work for very small, low-density rooms, but it often fails on the things that actually protect IT equipment: airflow delivery, humidity strategy, continuous duty, and alarms.

Quick comparison: precision vs comfort cooling

| Criteria | Precision cooling (CRAC/CRAH, InRow, computer-room AC) | Comfort cooling (office HVAC, split/packaged AC) |
|---|---|---|
| Design goal | Protect IT equipment in a controlled envelope | Keep people comfortable in occupied spaces |
| Heat profile match | High sensible heat ratio (SHR) for IT loads | Lower SHR (expects moisture/latent load) |
| Airflow delivery | Higher, more directed airflow where racks need it | Lower, comfort-oriented air distribution |
| Humidity approach | Designed around dew point + RH limits; may add humidification | Primarily dehumidifies when cooling; limited humidity strategy |
| Filtration | Often higher-grade filtration options | Basic filtration for comfort and coil protection |
| Duty cycle | 24/7/365 oriented components and controls | Intermittent/seasonal duty assumptions are common |
| Alarms & monitoring | Built for alerts, trend data, and integration options | Often minimal alarms; limited remote monitoring |

Definitions

What is precision cooling?

“Precision cooling” is purpose-built environmental control for IT spaces.

For a server room, this generally means a system designed around IT heat loads, airflow delivery to racks, and 24/7 duty, rather than the assumptions behind office HVAC.

In practice it includes:

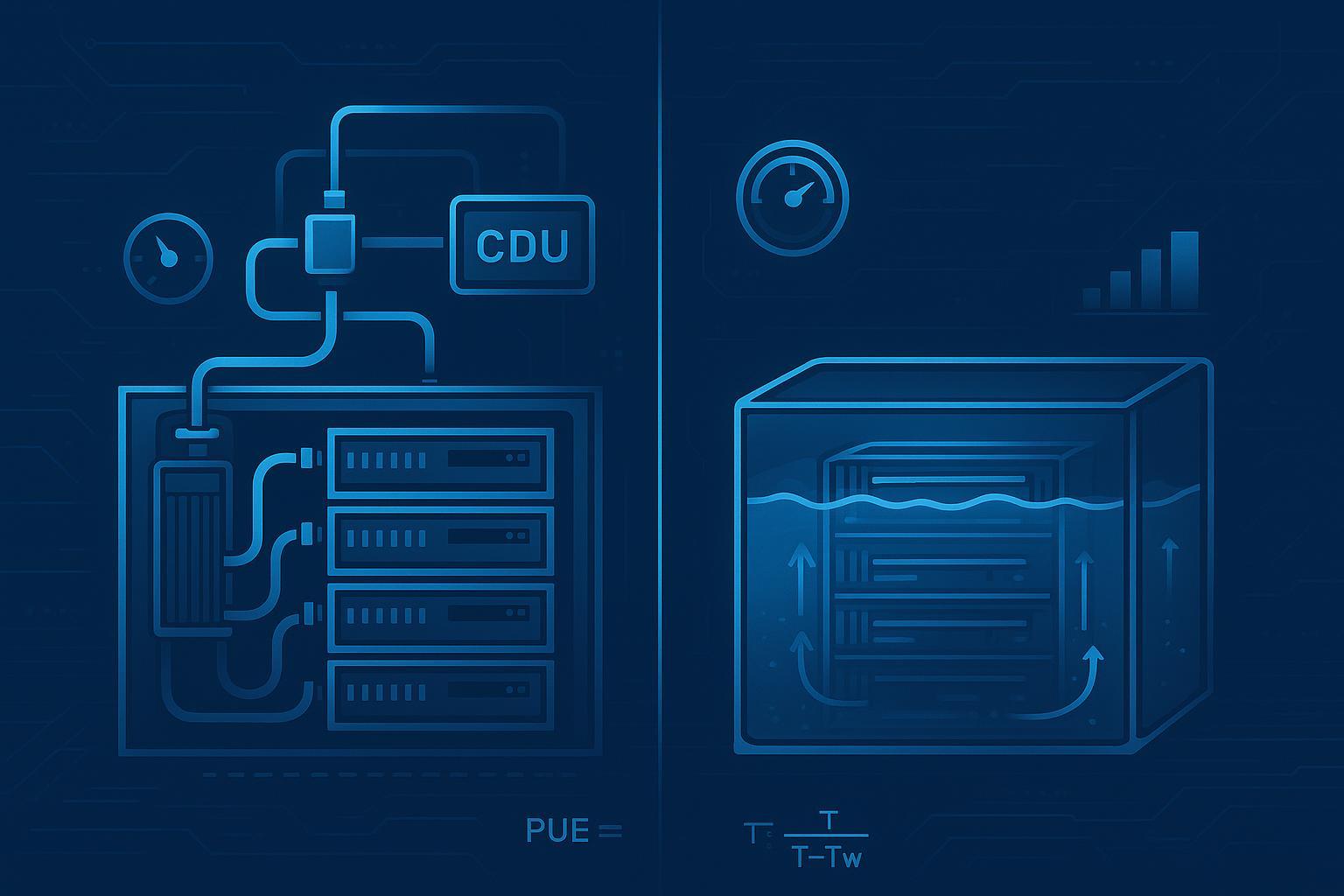

CRAC (Computer Room Air Conditioner): typically DX-based (direct expansion refrigeration) with controls and airflow intended for server rooms.

CRAH (Computer Room Air Handler): typically chilled-water based, also designed for IT environments.

InRow cooling: units placed close to racks (row-level) to shorten the airflow path and reduce mixing.

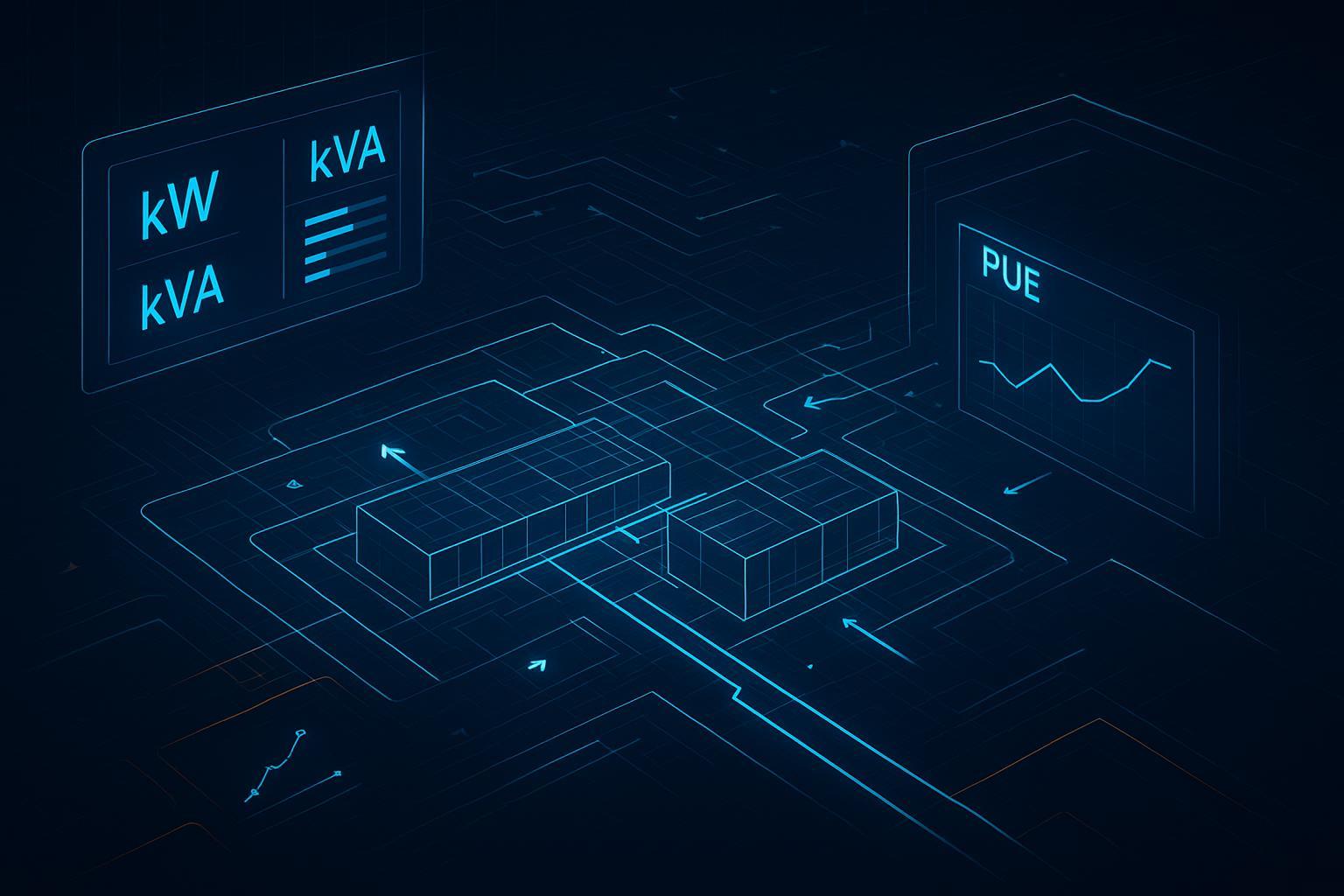

Precision systems prioritize stable inlet conditions at racks, airflow management, humidity strategy (often expressed using dew point limits), and alarms/monitoring.

What is comfort cooling?

“Comfort cooling” is conventional HVAC intended for human-occupied spaces (offices, retail, general building areas). It’s typically optimized for:

mixed sensible + latent loads (people add moisture)

occupancy schedules

broad room mixing for occupant comfort

In a server room, those priorities can be misaligned with what IT equipment needs most.

Precision cooling vs comfort cooling: SHR and capacity match

SHR (Sensible Heat Ratio) is the fraction of total cooling that goes to lowering temperature (sensible) instead of removing moisture (latent).

IT equipment loads are typically mostly sensible heat.

Comfort systems are often selected for spaces where latent load matters (people, ventilation air, infiltration).

So even when a comfort unit’s nameplate (total) capacity looks similar, a larger share of that capacity may be “spent” on latent cooling, leaving less sensible capacity for the IT load, especially under humid conditions.
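As a rough illustration of how the same nameplate capacity can be split differently, here is a minimal Python sketch. The SHR values (0.70 and 0.95) and the 10 kW capacity are illustrative assumptions, not figures from any datasheet; real values depend on the unit and the entering air conditions.

```python
def sensible_capacity_kw(total_capacity_kw: float, shr: float) -> float:
    """Return the share of total cooling capacity that lowers temperature.

    SHR (sensible heat ratio) = sensible capacity / total capacity.
    """
    if total_capacity_kw < 0:
        raise ValueError("total capacity must be non-negative")
    if not 0.0 < shr <= 1.0:
        raise ValueError("SHR must be greater than 0 and at most 1")
    return total_capacity_kw * shr


# Two hypothetical units with the same 10 kW total (nameplate) capacity.
comfort_sensible = sensible_capacity_kw(10.0, shr=0.70)    # assumed comfort-style SHR
precision_sensible = sensible_capacity_kw(10.0, shr=0.95)  # assumed precision-style SHR

print(f"Comfort unit:   {comfort_sensible:.1f} kW sensible")
print(f"Precision unit: {precision_sensible:.1f} kW sensible")
```

When comparing real units, use the sensible capacity published for your expected return-air conditions rather than an assumed ratio.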

Practical implication for small server rooms: when a comfort unit runs, it may drive humidity down (because it dehumidifies while cooling), and when it cycles off, humidity can rebound quickly. Precision systems are more likely to be specified with a coherent humidity strategy for year-round operation.

Airflow: the overlooked reason comfort systems fail

In server rooms, many issues that look like “not enough cooling” are actually not enough airflow in the right place.

Comfort cooling generally aims to keep the room comfortable through mixing. Server rooms need you to deliver adequate airflow to the rack inlets, reduce bypass/recirculation, and maintain predictable return paths.
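To get a feel for how much air the racks actually need, the standard sensible heat relationship (heat = air density × specific heat × volume flow × temperature rise) can be rearranged for airflow. The sketch below assumes air properties near sea level and a hypothetical 5 kW load with an 11 K rise across the servers; treat the result as a first estimate, not a design value.

```python
AIR_DENSITY_KG_M3 = 1.2   # assumption: air near sea level at roughly 20°C
AIR_CP_KJ_KG_K = 1.005    # specific heat of dry air
M3S_TO_CFM = 2118.88      # cubic metres per second to cubic feet per minute


def required_airflow_m3s(it_load_kw: float, delta_t_k: float) -> float:
    """Airflow needed to carry away it_load_kw with a delta_t_k air temperature rise."""
    if it_load_kw < 0:
        raise ValueError("IT load must be non-negative")
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    return it_load_kw / (AIR_DENSITY_KG_M3 * AIR_CP_KJ_KG_K * delta_t_k)


# Hypothetical example: 5 kW of IT load, 11 K rise from rack inlet to exhaust.
flow = required_airflow_m3s(it_load_kw=5.0, delta_t_k=11.0)
print(f"{flow:.2f} m3/s (about {flow * M3S_TO_CFM:.0f} CFM)")
```

If the cooling unit cannot deliver roughly this much air to the rack fronts, servers will pull in recirculated exhaust air no matter how much capacity the unit has on paper.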

What to look for (small rooms)

Supply/return path clarity: where does cold air leave the unit, and how does hot air return?

Short-circuiting risk: can supply air go straight back to return without passing through racks?

Rack inlet focus: are the hottest racks getting the airflow they need?

⚠️ Warning: A wall thermostat can be “happy” while rack inlets overheat. For server rooms, inlet temperature is often the metric that matters.

If you want a deeper sizing-and-layout perspective, you can cross-check typical selection considerations in Coolnetpower’s guide on choosing and configuring precision air conditioning for server rooms.

Server room temperature and humidity: stop thinking “RH only”

Humidity in IT spaces is best understood using both:

dew point (how much moisture is in the air), and

relative humidity (RH) (how close the air is to saturation at a given temperature)
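The relationship between the two can be sketched with the Magnus approximation, which estimates dew point from dry-bulb temperature and RH. The constants below are one commonly used set for saturation over water; the function name and the example readings are illustrative assumptions, and the result is an approximation rather than a calibrated measurement.

```python
import math

# One commonly used set of Magnus constants for saturation over water.
MAGNUS_A = 17.62
MAGNUS_B = 243.12  # °C


def dew_point_c(temp_c: float, rh_percent: float) -> float:
    """Approximate dew point (°C) from air temperature (°C) and relative humidity (%)."""
    if not 0.0 < rh_percent <= 100.0:
        raise ValueError("RH must be greater than 0 and at most 100")
    gamma = math.log(rh_percent / 100.0) + MAGNUS_A * temp_c / (MAGNUS_B + temp_c)
    return MAGNUS_B * gamma / (MAGNUS_A - gamma)


# The same 50% RH reading corresponds to different moisture content
# at different temperatures (hypothetical readings).
for temp in (20.0, 27.0):
    print(f"{temp:.0f}°C at 50% RH -> dew point about {dew_point_c(temp, 50.0):.1f}°C")
```

This is why an RH reading on its own can mislead: the moisture content behind it depends on the temperature at which it was measured.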

ASHRAE TC 9.9 publishes guidance with recommended and allowable envelopes for IT equipment using temperature plus moisture limits (dew point and RH).

Recommended vs allowable: typical ASHRAE ranges

ASHRAE expresses guidance by equipment class and uses temperature plus moisture limits. Two common views you’ll see in TC 9.9 reference materials:

Recommended (typical): 18 to 27°C (64 to 81°F) for many air-cooled IT environments (ASHRAE TC 9.9 guidance).

Allowable (example, Class A1 typical): 15 to 32°C (59 to 90°F), with moisture limits defined using dew point and RH boundaries.

See ASHRAE’s TC 9.9 thermal guidelines reference materials and the Thermal Guidelines reference card (5th edition) for the current framing and class details.

For small server rooms, it’s often wise to operate near recommended and use allowable as a boundary condition (especially during abnormal events), while still verifying the exact requirements for your specific IT equipment.

Pro Tip: When writing requirements, specify that setpoints should be maintained within ASHRAE “recommended” during normal operation, with alarms and response procedures when drifting toward “allowable.”
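A requirement like this can be expressed as a simple classification rule. The sketch below uses only the temperature ranges quoted above (recommended 18 to 27°C, Class A1 allowable 15 to 32°C); the function name and status labels are hypothetical, and the dew point and RH limits are deliberately left out, so confirm the full envelope for your equipment class before relying on it.

```python
RECOMMENDED_C = (18.0, 27.0)   # recommended range quoted above
ALLOWABLE_A1_C = (15.0, 32.0)  # Class A1 allowable range quoted above


def classify_inlet(temp_c: float) -> str:
    """Classify a rack inlet temperature as 'ok', 'warning', or 'critical'."""
    if RECOMMENDED_C[0] <= temp_c <= RECOMMENDED_C[1]:
        return "ok"        # within recommended: normal operation
    if ALLOWABLE_A1_C[0] <= temp_c <= ALLOWABLE_A1_C[1]:
        return "warning"   # outside recommended but within allowable: alarm and respond
    return "critical"      # outside allowable: escalate


# Hypothetical inlet readings from three racks.
for reading in (24.5, 29.0, 33.5):
    print(f"{reading:.1f}°C -> {classify_inlet(reading)}")
```

In practice the "warning" band is where the response procedure should start, so that staff act before the allowable boundary is reached.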

Filtration and cleanliness: not just a comfort issue

Dust and particulates can build up on coils, reduce heat transfer, and contribute to hot spots and maintenance events. Comfort systems often use filtration intended mainly to protect the unit and provide basic indoor air quality for occupants.

For server rooms, specify filtration as part of the reliability plan (and treat filter changes as a maintenance item with logs).

24/7 duty and reliability: what changes in equipment selection

A small server room might be physically small, but the cooling requirement is often nonstop.

Precision cooling is typically specified with:

controls designed for continuous operation

serviceability expectations aligned with critical environments

options for redundancy planning (even if the room starts with N, you can plan for N+1 pathways)

Comfort cooling can be reliable, but it’s often installed and maintained as a general building asset. That difference shows up in how failures are detected, how fast response happens, and whether the system is treated as mission-critical.

Alarms and monitoring: the difference between “failure” and “incident”

In many real outages, the cooling fault itself was not the whole story: the unit failed without any actionable notification, so a recoverable failure turned into an incident.

A precision-cooling approach typically expects:

temperature and humidity alarms

sensor placement that reflects rack inlets (not only room averages)

remote notifications (so someone knows before IT equipment is impacted)

integration pathways into site monitoring (often via DCIM/BMS or equivalent)

If monitoring is part of your roadmap, the data and alerting checklist in Data Requirements for AI Thermal Optimization is a useful way to think about “what to measure” even if you’re not using AI.

Where comfort cooling commonly breaks down

Short cycling: the thermostat reaches setpoint quickly, the unit cycles, and racks see unstable inlet conditions.

Poor airflow distribution: cold air doesn’t consistently reach the hottest rack inlets.

No humidification strategy: winter/dry seasons can create overly dry conditions; summer operation can create swings.

Limited alarms: problems are discovered by IT alarms (too late), not by the cooling system.

Maintenance blind spots: comfort equipment may not be maintained on a critical-environment schedule.
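Short cycling in particular is easy to check for if you can export compressor start times from the unit or a monitoring system. The sketch below counts consecutive starts that are closer together than a minimum interval; the 10-minute threshold, the function name, and the sample timestamps are all assumptions for illustration, so use the limit given by your equipment manufacturer.

```python
from datetime import datetime, timedelta


def count_short_cycles(starts: list[datetime],
                       min_interval: timedelta = timedelta(minutes=10)) -> int:
    """Count consecutive compressor starts closer together than min_interval."""
    ordered = sorted(starts)
    return sum(
        1
        for previous, current in zip(ordered, ordered[1:])
        if current - previous < min_interval
    )


# Hypothetical compressor start log: starts at 0, 4, 9, and 30 minutes.
base = datetime(2024, 7, 1, 14, 0)
sample_starts = [base + timedelta(minutes=m) for m in (0, 4, 9, 30)]

short_cycles = count_short_cycles(sample_starts)
print(f"Short cycles detected: {short_cycles}")
```

A nonzero count over a typical day is a prompt to look at thermostat placement, unit oversizing, and airflow short-circuiting before blaming capacity.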

A practical checklist: minimum requirements for a small server room

Use this as an RFP or retrofit checklist for rooms in the 1–10 rack range.

Define control points at rack inlets, not only “room temperature.”

Specify an operating envelope aligned with ASHRAE TC 9.9 recommended, with alarm thresholds as you approach allowable.

Confirm airflow path: supply delivery to rack fronts and a clear hot-air return.

Confirm the SHR/latent behavior is appropriate for an IT-dominated load (avoid designs that unintentionally over-dry the space).

Require actionable alarms (temperature, humidity/dew point, unit fault) and a notification path.

Document maintenance: filters, coils, condensate management, sensor calibration.

Plan for resilience: at minimum, a response plan for cooling failure; ideally, a redundancy strategy.

So… which should you use?

For small, low-density rooms with low criticality, comfort cooling can be a pragmatic starting point if you can validate airflow, monitoring, and humidity behavior.

For any room where uptime matters, or where you see recurring hot spots, humidity swings, or “surprise failures,” precision cooling is typically the safer engineering approach because it is designed around IT load characteristics and operational expectations.

How Coolnetpower helps

Coolnetpower supports server-room projects by helping teams:

translate IT load and layout into cooling requirements and airflow assumptions

define monitoring/alarm points and integration expectations (DCIM/BMS)

evaluate room-based vs row-based approaches for small rooms

If you’re updating a comms room or small server room, start with the selection basics in how to choose precision AC for server rooms and, for integration considerations, review DCIM/BMS integration guidance.

Next step (low-friction): Request a server-room cooling requirements checklist (setpoints, sensors, alarms, and airflow assumptions) or book a short technical fit call to sanity-check your current setup.