Edge and micro-modular data centers don’t fail because the power or cooling hardware is “bad.” They fail because the operational stack is fragmented: one dashboard for IT, one for facilities, one for physical security—and no shared event model.

This guide is a decision-stage playbook for integrating edge modules into an existing DCIM + BMS + security ecosystem using open protocols, a unified alarm schema, and zero-trust remote access patterns. If you’re evaluating vendors, treat this as an acceptance-testable integration scope—not a marketing checklist.

Key takeaways

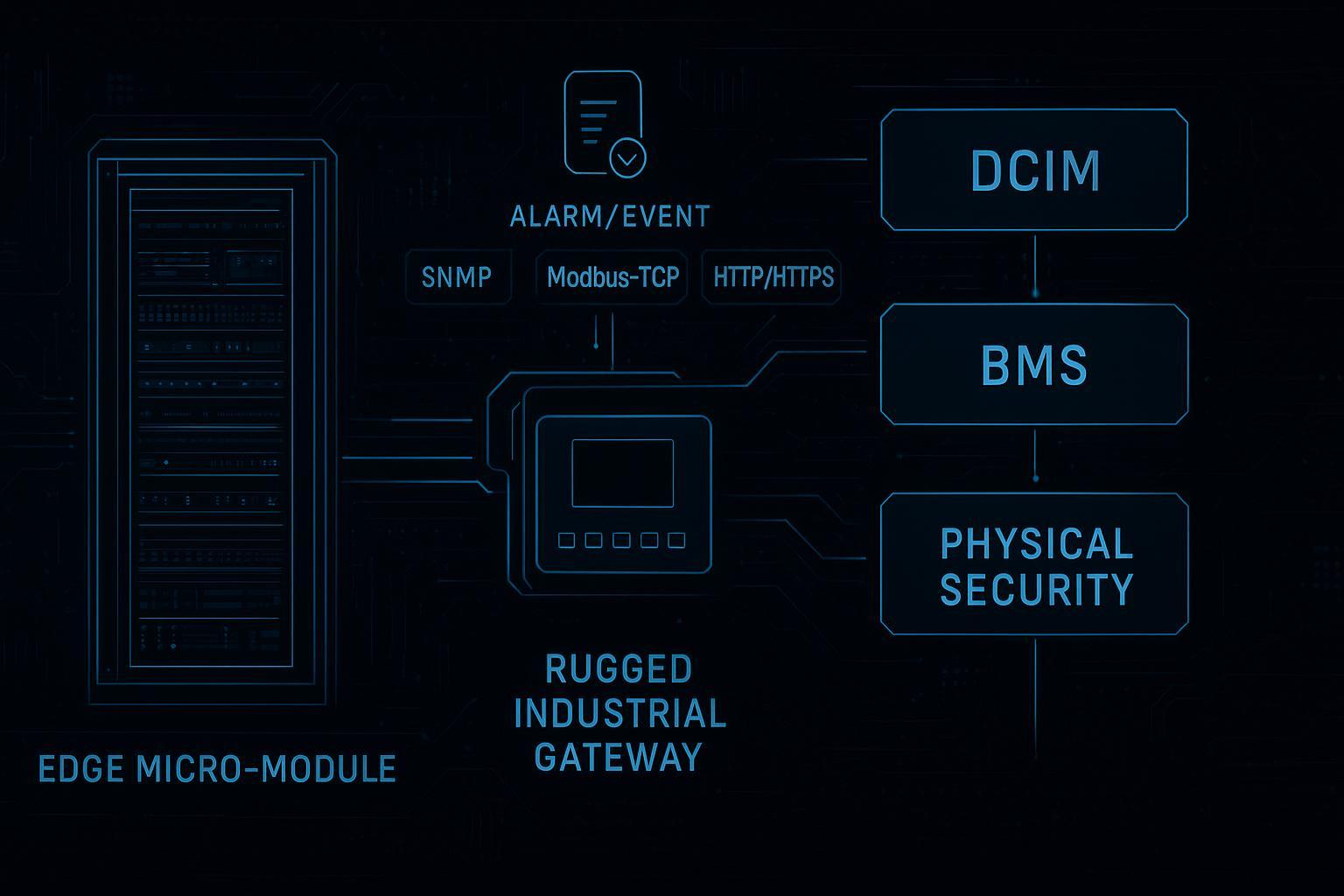

A clean integration starts with a reference architecture that separates southbound device collection from northbound enterprise integration.

Use protocols by domain: SNMP is common for IT monitoring, Modbus for power equipment, and BACnet for BMS/HVAC—then normalize data before alarming.

Alarm floods are a design problem. Standardize a taxonomy, map severities, and deduplicate/correlate before sending tickets.

Zero-trust is not “add MFA to a VPN.” It’s granular access, strong identity, segmentation, and audit logging.

Northbound gateway interfaces (SNMP/HTTP/Modbus) are enough to support most edge data center monitoring integration workflows—if your mapping is explicit and testable.

Prerequisites (what you should have before you start)

A clear system boundary for the edge module (what is “inside the module” vs what is “site” vs what is “enterprise”).

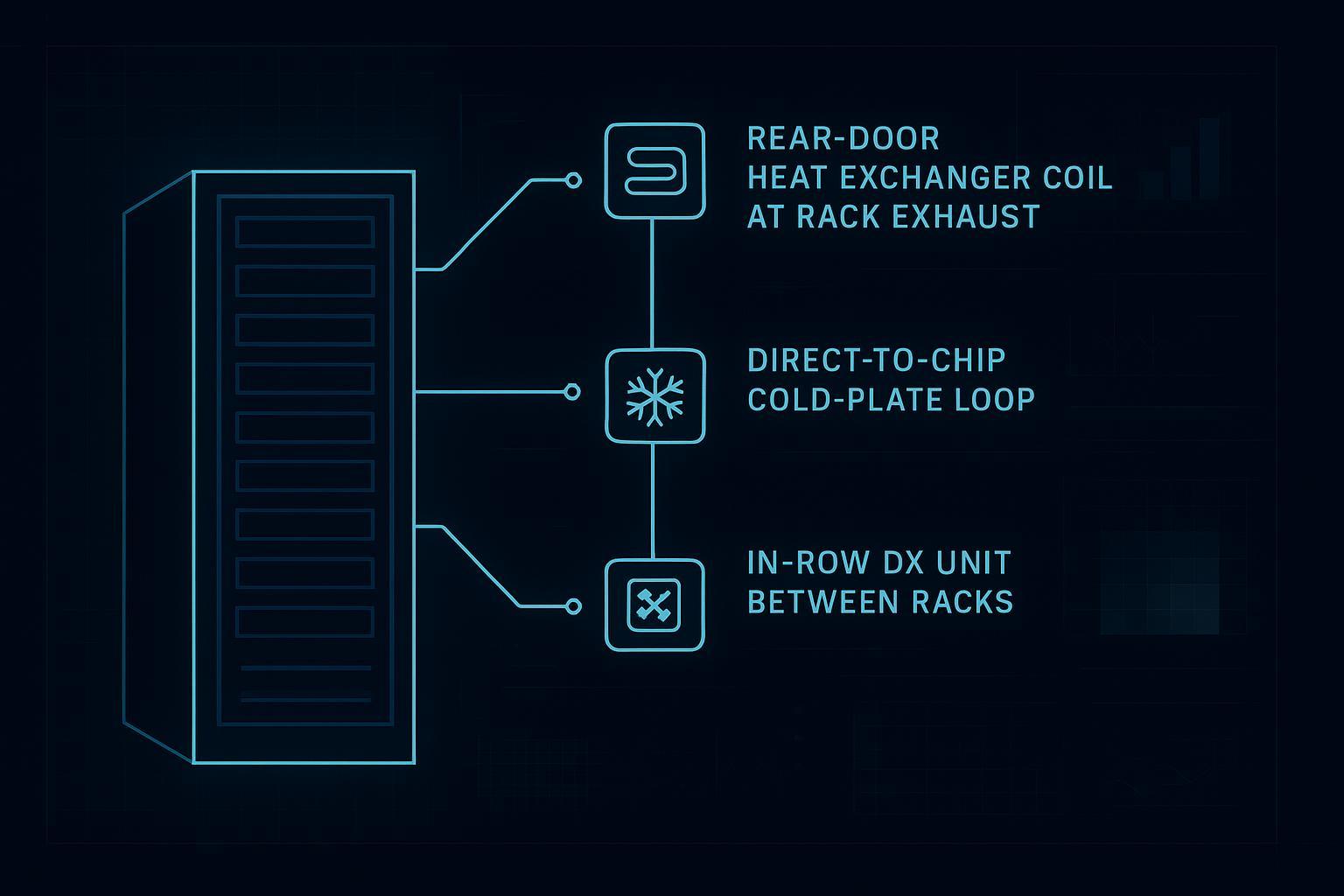

Asset inventory: power chain components (UPS/PDU/branch circuits), cooling units, sensors, access points, cameras.

Network segmentation plan: at minimum, separate management/OT from corporate IT; document allowed northbound flows.

A target ticketing/work-order destination (ITSM or NOC tooling) and ownership model.

Pro Tip: Treat DCIM BMS integration as a deliverable with acceptance criteria (data completeness, alarm fidelity, security controls)—not as an “extra task” during commissioning.

Step 1 — Define your DCIM BMS integration reference architecture (southbound vs northbound)

Input: edge module BOM, network diagram, and monitoring objectives.

Action: document the integration in two planes:

Southbound (device → gateway/DCIM collector): how the module’s devices expose telemetry and control signals.

Northbound (gateway/DCIM → enterprise): how alarms, metrics, and events feed DCIM, BMS, security platforms, and ticketing.

Output: a one-page “integration contract” that lists sources, destinations, and the exact data exchanged.

Done when: every device class has an identified protocol and owner (IT, facilities, security), and every northbound integration has a named endpoint.
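One way to make the "done when" check mechanical is to keep the integration contract as data and list its gaps. The sketch below is illustrative only: the `Source` and `Destination` types, the sample device classes, and the `itsm.example.invalid` endpoint are assumptions, not part of any vendor product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    device_class: str  # e.g. "UPS"
    protocol: str      # southbound protocol; "" if not yet decided
    owner: str         # "IT", "facilities", or "security"; "" if unassigned


@dataclass(frozen=True)
class Destination:
    name: str      # e.g. "ITSM"
    endpoint: str  # named northbound endpoint; "" if not yet decided


def contract_gaps(sources, destinations):
    """Return the gaps that block the Step 1 'done when' condition."""
    gaps = []
    for s in sources:
        if not s.protocol:
            gaps.append(f"{s.device_class}: no protocol")
        if not s.owner:
            gaps.append(f"{s.device_class}: no owner")
    for d in destinations:
        if not d.endpoint:
            gaps.append(f"{d.name}: no endpoint")
    return gaps


# Hypothetical contract for one edge module.
sources = [
    Source("UPS", "Modbus TCP", "facilities"),
    Source("rack PDU", "SNMPv3", "IT"),
    Source("door contact", "", "security"),
]
destinations = [
    Destination("ITSM", "https://itsm.example.invalid/api/events"),
    Destination("BMS", ""),
]
gaps = contract_gaps(sources, destinations)
for gap in gaps:
    print(gap)
```

An empty gap list is a reasonable gate for moving on to protocol selection.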

Step 2 — Choose open protocols based on the system you’re integrating

Don’t pick a “universal protocol.” Pick the simplest protocol that matches the domain, then normalize.

| Domain | Common systems | Typical protocol(s) | Notes |
|---|---|---|---|
| IT monitoring | network gear, intelligent rack PDUs | SNMP (prefer SNMPv3) | Good for polling + traps; align MIBs and object names |
| Power equipment | UPS, meters, ATS, switchgear, environmental sensors | Modbus RTU/TCP | Simple and common; design segmentation and compensating controls |
| BMS/HVAC | CRAC/CRAH, chillers, building controllers | BACnet | Use secure patterns where available; consider BACnet/SC for TLS + cert auth |
| Northbound integration | DCIM → NOC/ITSM/SIEM | HTTP/HTTPS APIs, SNMP, message buses | Favor explicit schemas and idempotent event delivery |

For BMS security hardening, BACnet has a modernization path: Veridify summarizes BACnet Secure Connect (BACnet/SC) as using TLS encryption and certificate-based device authentication (Veridify BACnet/SC overview).

Done when: every protocol selection has a reason (ecosystem fit, ownership, security), and you can defend it in a vendor review.

Step 3 — Create a minimum viable telemetry model (naming, units, timestamps)

Before you design alarms, define the telemetry contract.

Minimum fields per point:

site_id, module_id, asset_id

point_name (consistent naming convention)

value, unit, quality

timestamp (and time source)

Normalization principle: normalize telemetry as early as possible in the pipeline. Modius argues that the fewer steps before normalization occurs, the lower the risk of inconsistent raw data bleeding into alarms and reports (Modius “Normalizing Normalization”).

Done when: power is in consistent units (for example kW), temperatures are consistent (°C or °F), and you can reconcile timestamps across devices.
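The telemetry contract and early normalization can be sketched as a small record type plus a conversion table. This is a minimal illustration under assumptions: the field names follow the list above, but the unit labels (`"W"`, `"kW"`, `"degF"`, `"degC"`), the choice of kW and °C as canonical units, and the quality values are placeholders you would replace with your own standard.

```python
from dataclasses import dataclass, replace
from datetime import datetime, timezone


@dataclass(frozen=True)
class TelemetryPoint:
    site_id: str
    module_id: str
    asset_id: str
    point_name: str
    value: float
    unit: str
    quality: str         # assumed vocabulary: "good", "stale", "bad"
    timestamp: datetime  # must be timezone-aware
    time_source: str     # e.g. "ntp" or "device-clock"


# raw unit -> (canonical unit, conversion); canonical units are an assumption
_CONVERSIONS = {
    "W": ("kW", lambda v: v / 1000.0),
    "kW": ("kW", lambda v: v),
    "degF": ("degC", lambda v: (v - 32.0) * 5.0 / 9.0),
    "degC": ("degC", lambda v: v),
}


def normalize(point: TelemetryPoint) -> TelemetryPoint:
    """Convert to canonical units and UTC; reject anything ambiguous."""
    if point.unit not in _CONVERSIONS:
        raise ValueError(f"unknown unit {point.unit!r} for {point.point_name}")
    if point.timestamp.tzinfo is None:
        raise ValueError(f"naive timestamp for {point.point_name}")
    unit, convert = _CONVERSIONS[point.unit]
    return replace(
        point,
        value=convert(point.value),
        unit=unit,
        timestamp=point.timestamp.astimezone(timezone.utc),
    )


raw = TelemetryPoint(
    "site-a", "mod-1", "ups-1", "ups.output.active_power",
    2500.0, "W", "good",
    datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc), "ntp",
)
print(normalize(raw))
```

Rejecting unknown units and naive timestamps at collection time, rather than guessing, keeps inconsistent raw data out of alarms and reports.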

Step 4 — Build an alarm schema (and ticket mapping) before you connect ITSM

If you integrate alarms first and think about tickets later, you’ll end up with alert fatigue and SLA noise.

4.1 Start with a shared alarm taxonomy

At minimum, use these alarm classes:

Power (UPS on battery, overload, breaker trip)

Thermal/Environment (high inlet temperature, leak, humidity out of range)

Availability/Comms (device offline, polling failures)

Physical security (door forced, unauthorized access, motion after hours)

Safety (smoke/fire linkage, emergency shutdown)

4.2 Map severities to actions, not feelings

A workable severity scale is one where each level has:

a response time

an owner

a runbook pointer

Example:

| Severity | Meaning | Example | Ticketing action |
|---|---|---|---|
| SEV1 | Immediate service risk | cooling failed + rising inlet temp | page on-call + open incident |
| SEV2 | Degraded redundancy | one UPS module failed | open incident + schedule field dispatch |
| SEV3 | Needs attention | sensor drift, recurring comms errors | create work order |
| SEV4 | Informational | maintenance reminder | log only |
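A severity matrix like this is easier to keep honest when it lives as data with a completeness check. In the sketch below, the ticketing actions come from the table, while the response times, owner names, and runbook paths are placeholders invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SeverityPolicy:
    response_minutes: int  # placeholder values, not recommendations
    owner: str             # hypothetical team names
    runbook: str           # hypothetical runbook pointers
    ticket_action: str     # taken from the severity table


SEVERITY_POLICIES = {
    "SEV1": SeverityPolicy(15, "on-call engineer", "runbooks/sev1",
                           "page on-call + open incident"),
    "SEV2": SeverityPolicy(240, "facilities lead", "runbooks/sev2",
                           "open incident + schedule field dispatch"),
    "SEV3": SeverityPolicy(4320, "site maintenance", "runbooks/sev3",
                           "create work order"),
    "SEV4": SeverityPolicy(10080, "site maintenance", "runbooks/sev4",
                           "log only"),
}


def policy_errors(policies):
    """List severity levels missing a response time, owner, or runbook."""
    errors = []
    for level, p in sorted(policies.items()):
        if p.response_minutes <= 0:
            errors.append(f"{level}: no response time")
        if not p.owner:
            errors.append(f"{level}: no owner")
        if not p.runbook:
            errors.append(f"{level}: no runbook")
    return errors


print(policy_errors(SEVERITY_POLICIES))
print(SEVERITY_POLICIES["SEV1"].ticket_action)
```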

4.3 Deduplicate and correlate before you page humans

Edge sites are noisy. If a single upstream event triggers 30 downstream alerts, the integration is wrong—not the operators.

Implement:

deduplication window (same asset + same alarm type within N minutes)

root-cause correlation (if upstream power is lost, suppress dependent “device offline” alarms)

stateful alarms (require clear/return-to-normal events)

Done when: you can simulate a power-chain failure and see a small, actionable set of alarms—not a flood.

This is the core of the alarm normalization and ticketing that a DCIM should support: a consistent event model that maps to your ITSM categories, priorities, and owners.
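The three mechanisms in 4.3 (deduplication window, root-cause correlation, stateful alarms) can be combined in one small filter. This is a simplified sketch, not a production correlator: the alarm type names `power_lost` and `device_offline`, the asset IDs, and the 300-second window are assumptions, and flapping inside the window is simply suppressed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlarmEvent:
    asset_id: str
    alarm_type: str  # hypothetical names: "power_lost", "device_offline"
    state: str       # "raise" or "clear"
    ts: float        # epoch seconds


class AlarmFilter:
    def __init__(self, dedup_window_s, upstream_of):
        self.dedup_window_s = dedup_window_s
        self.upstream_of = upstream_of  # asset_id -> upstream power asset_id
        self.last_forwarded = {}        # (asset, type) -> ts of last forwarded raise
        self.active = set()             # (asset, type) pairs currently raised

    def accept(self, ev):
        """Return True if the event should be forwarded to ticketing."""
        key = (ev.asset_id, ev.alarm_type)
        if ev.state == "clear":
            # Stateful: only forward a clear for an alarm we actually raised.
            was_active = key in self.active
            self.active.discard(key)
            return was_active
        # Correlation: drop "device offline" while upstream power is lost.
        upstream = self.upstream_of.get(ev.asset_id)
        if (ev.alarm_type == "device_offline" and upstream is not None
                and (upstream, "power_lost") in self.active):
            return False
        # Deduplication: same asset + same alarm type within the window.
        last = self.last_forwarded.get(key)
        if last is not None and ev.ts - last < self.dedup_window_s:
            return False
        self.last_forwarded[key] = ev.ts
        self.active.add(key)
        return True


# Simulated power-chain failure: one UPS feeding two rack PDUs.
flt = AlarmFilter(300, {"pdu-1": "ups-1", "pdu-2": "ups-1"})
events = [
    AlarmEvent("ups-1", "power_lost", "raise", 0),
    AlarmEvent("pdu-1", "device_offline", "raise", 1),
    AlarmEvent("pdu-2", "device_offline", "raise", 2),
    AlarmEvent("ups-1", "power_lost", "raise", 30),
    AlarmEvent("pdu-1", "device_offline", "clear", 500),
    AlarmEvent("ups-1", "power_lost", "clear", 600),
]
forwarded = [ev for ev in events if flt.accept(ev)]
for ev in forwarded:
    print(ev)
```

In this simulation, six raw events reduce to one raise and one clear for the UPS, which is the kind of result the "done when" test is looking for.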

Step 5 — Implement zero-trust remote access (including vendors)

This step is the operational security layer: zero-trust remote access for OT monitoring at distributed edge sites.

Your integration design must assume remote access will happen—especially for edge modules with limited on-site staff.

A pragmatic zero-trust control set:

Identity-first access: SSO + MFA for all operators and vendors

Least privilege (RBAC): role-based policies for “view telemetry,” “acknowledge alarms,” “change thresholds,” “remote control”

Segmentation: separate OT management networks; only allow explicit northbound flows

Just-in-time access: time-bounded vendor access windows

Audit logging: session logs and change logs for configuration actions

For remote access, many organizations are moving from broad network-level VPN access to ZTNA patterns that provide granular access with continuous verification (see Cato Networks on ZTNA vs VPN and Secomea on zero-trust remote access for industrial networks).

Done when: you can show an auditor “who accessed what, when, and what changed,” and a compromised vendor account can’t move laterally across the module.
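The control set above can be expressed as a single authorization decision that is always logged. The sketch below assumes hypothetical role names, a hypothetical `Grant` record for time-bounded access, and an MFA result supplied by your identity provider; it illustrates the policy shape and is not a replacement for a ZTNA product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Role names are hypothetical; the permissions mirror the RBAC examples above.
ROLE_PERMISSIONS = {
    "viewer": {"view_telemetry"},
    "operator": {"view_telemetry", "acknowledge_alarms"},
    "engineer": {"view_telemetry", "acknowledge_alarms", "change_thresholds"},
    "vendor_service": {"view_telemetry", "remote_control"},
}


@dataclass(frozen=True)
class Grant:
    user: str
    role: str
    module_id: str        # segmentation: a grant covers one module only
    not_before: datetime  # just-in-time window start
    not_after: datetime   # just-in-time window end


def authorize(grants, audit, user, action, module_id, now, mfa_verified):
    """Decide one request and append the decision to the audit trail."""
    allowed = mfa_verified and any(
        g.user == user
        and g.module_id == module_id
        and g.not_before <= now < g.not_after
        and action in ROLE_PERMISSIONS.get(g.role, set())
        for g in grants
    )
    audit.append({
        "user": user, "action": action, "module_id": module_id,
        "at": now.isoformat(), "allowed": allowed,
    })
    return allowed


t0 = datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc)
grants = [Grant("vendor-a", "vendor_service", "mod-1", t0, t0 + timedelta(hours=2))]
audit = []
inside = authorize(grants, audit, "vendor-a", "remote_control", "mod-1",
                   t0 + timedelta(hours=1), True)
expired = authorize(grants, audit, "vendor-a", "remote_control", "mod-1",
                    t0 + timedelta(hours=3), True)
print(inside, expired, len(audit))
```

Denied requests are logged as well as allowed ones, so the audit trail can answer "who tried what" in addition to "who did what."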

Step 6 — API/protocol mapping example (Coolnetpower gateway → enterprise stack)

This section shows how to write an integration mapping that is explicit enough to test.

Coolnetpower’s product page for CoolnetDCIM describes Modbus (RTU/TCP) and SNMP support, plus HTTP/HTTPS network interfaces and alarm reporting upstream.

6.1 Example: northbound SNMP (traps or polling) for NOC visibility

Use when: your NOC tooling expects SNMP traps or you need broad compatibility.

Mapping concept:

Asset class: UPS / PDU / sensors

SNMP objects: device status, load, temperature, door contact

Event: trap on threshold breach → NMS correlates → ticket created

Implementation notes (procurement-friendly):

Require SNMPv3 where possible (auth + encryption)

Require MIB documentation and a test plan for traps vs polling intervals

This is the heart of an SNMP/Modbus integration gateway design: define exactly which metrics and events cross the northbound boundary, and how they map to tickets.

6.2 Example: northbound Modbus-TCP to upstream BMS/EPMS gateways

Use when: the facility environment is standardized on Modbus registers.

Mapping concept:

Register map: power metrics (kW, kWh), environmental points (temp/humidity), digital inputs (door status)

Data quality: define scaling and units in the register map

Implementation notes:

Put Modbus on a segmented network; document allowed masters and polling rates

Validate scaling during commissioning (common source of “false alarms”)
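Scaling errors are easier to catch when the register map is explicit data rather than tribal knowledge. The sketch below decodes raw 16-bit register words that some Modbus client has already read; the addresses, scale factors, point names, and the high-word-first ordering for 32-bit values are all assumptions for illustration and must come from the vendor's actual register documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegisterDef:
    address: int   # hypothetical holding-register address
    count: int     # 1 = 16-bit value, 2 = 32-bit value (high word first, assumed)
    signed: bool
    scale: float   # engineering value = raw * scale
    unit: str


# Hypothetical register map; real maps come from vendor documentation.
REGISTER_MAP = {
    "active_power": RegisterDef(100, 2, False, 0.001, "kW"),  # raw value in W
    "inlet_temp": RegisterDef(200, 1, True, 0.1, "degC"),     # raw in 0.1 degC
    "door_open": RegisterDef(300, 1, False, 1.0, "bool"),
}


def decode(name, words):
    """Turn raw register words into (scaled value, unit) for one point."""
    d = REGISTER_MAP[name]
    if len(words) != d.count:
        raise ValueError(f"{name}: expected {d.count} words, got {len(words)}")
    raw = 0
    for w in words:
        if not 0 <= w <= 0xFFFF:
            raise ValueError(f"{name}: word {w} is not a 16-bit value")
        raw = (raw << 16) | w
    bits = 16 * d.count
    if d.signed and raw >= 1 << (bits - 1):
        raw -= 1 << bits  # two's-complement sign correction
    return raw * d.scale, d.unit


print(decode("inlet_temp", [235]))
print(decode("inlet_temp", [0xFFF6]))
print(decode("active_power", [0x0000, 0x09C4]))
```

During commissioning, comparing decoded values against a handheld meter or the device's local display is a quick way to confirm scale, sign, and word order before any thresholds are armed.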

6.3 Example: northbound HTTP/HTTPS for dashboards and event export

Use when: you need a web portal for operations and an API-style export path.

Mapping concept:

Endpoint categories: assets, telemetry, alarms, users

Export model: “new alarm,” “alarm cleared,” “threshold updated” events

Implementation notes:

Ensure time sync and idempotent event handling (no duplicate tickets)

Enforce IP filtering + strong authentication; log all config changes
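Idempotent event handling usually comes down to a stable key per event, so that retries and replays do not open second tickets. The sketch below derives that key from fields of the event itself; the field names, the in-memory `TicketSink`, and the sample payload are assumptions, and a real receiver would persist the seen keys.

```python
import hashlib


def event_key(event):
    """Stable idempotency key built from the event's identifying fields."""
    parts = [
        event["site_id"], event["asset_id"], event["alarm_type"],
        event["event"], event["occurred_at"],
    ]
    return hashlib.sha256("|".join(parts).encode("utf-8")).hexdigest()


class TicketSink:
    """Stand-in for an ITSM receiver; keeps seen keys in memory only."""

    def __init__(self):
        self.seen = set()
        self.tickets = []

    def deliver(self, event):
        key = event_key(event)
        if key in self.seen:
            return False  # duplicate delivery: no second ticket
        self.seen.add(key)
        self.tickets.append(event)
        return True


# Hypothetical "new alarm" event exported by the gateway.
new_alarm = {
    "site_id": "site-a",
    "asset_id": "crac-2",
    "alarm_type": "high_inlet_temp",
    "event": "new alarm",
    "occurred_at": "2024-01-01T12:00:00Z",
}
sink = TicketSink()
first = sink.deliver(new_alarm)
retry = sink.deliver(dict(new_alarm))  # e.g. the sender retried after a timeout
print(first, retry, len(sink.tickets))
```

Because the key includes `occurred_at`, it is only stable if device clocks are synchronized, which is why time sync and idempotency appear together in the implementation notes.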

⚠️ Warning: Do not commit to protocol support (BACnet, OPC UA, ONVIF, etc.) for a specific gateway unless it is explicitly documented by the vendor. Use generic protocol guidance for architecture, and vendor sources for product claims.

Step 7 — Commissioning and ongoing operations: acceptance tests you can run

Use a test plan that matches real failure modes.

Acceptance tests (minimum set)

| Test | What you simulate | Pass criteria |
|---|---|---|
| Telemetry completeness | disconnect a sensor class | missing points are detected and surfaced |
| Alarm fidelity | threshold breach + clear | one actionable alarm + clean clear event |
| Alarm flood control | upstream outage | correlated suppression prevents NOC flood |
| Remote access audit | vendor JIT session | full session + change logs recorded |
| DR/Failover drill | WAN loss to edge site | local buffering and safe degraded mode |

Done when: the module can operate safely if upstream connectivity is lost, and your team can prove it with logs.
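The telemetry completeness test can be automated by comparing the points you expect against the points you last received. This is a minimal sketch: the point names, the 120-second staleness limit, and the `last_seen` mapping (point name to epoch seconds of the last sample) are assumptions about how your collector exposes its state.

```python
def completeness_report(expected, last_seen, now, max_age_s):
    """Split expected points into missing (never seen) and stale (too old)."""
    missing = sorted(p for p in expected if p not in last_seen)
    stale = sorted(
        p for p in expected
        if p in last_seen and now - last_seen[p] > max_age_s
    )
    return {
        "missing": missing,
        "stale": stale,
        "complete": not missing and not stale,
    }


# Hypothetical drill: the humidity sensor class has been disconnected.
expected = {"ups.load_kw", "rack1.inlet_temp", "rack1.humidity", "door.front"}
last_seen = {
    "ups.load_kw": 1000.0,
    "rack1.inlet_temp": 995.0,
    "door.front": 700.0,  # last sample is older than the limit
}
report = completeness_report(expected, last_seen, now=1000.0, max_age_s=120.0)
print(report)
```

Running the same report before and after the drill gives you the log evidence the "done when" condition asks for.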

Common failure modes (and how to prevent them)

Naming drift across systems → enforce a point naming standard and a CMDB/asset map.

Unit mismatch (kW vs W; °C vs °F) → normalize at collection and validate during commissioning.

Ticket storms → correlation rules and dedup windows; align severities to actions.

Security bolt-on → build segmentation and identity policies before enabling remote access.

Next steps

If you want a procurement-ready package for an edge rollout, start with:

a one-page reference architecture

a protocol mapping table (by asset class)

a minimum viable alarm schema + severity matrix

a commissioning test checklist

For a product-backed monitoring layer, see Coolnetpower’s DCIM portfolio under Coolnet DCIM solutions.

To see how a micro-module can ship with integrated monitoring, look at concrete module examples such as Coolnetpower’s MetaRack micro data center cabinet solution and MetaRow modular data center solution; reference architectures are often easiest to explain against real products.