If you’re trying to bring 200 kW–1 MW of capacity online in 4–6 months, the “best” option isn’t the one with the lowest theoretical build time—it’s the one with the least variance on the critical path.

Before comparing options, define the constraint clearly:

Go-live definition (used in this post): time to first IT load / first production racks energized and in service.

Assumptions: some high-density racks (≥20 kW/rack) are in scope; compliance evidence (e.g., certifications and test documentation) matters; the retrofit path assumes you must reuse an existing building.

Scorecard summary: modular vs colocation vs retrofit (4–6 months to first IT load)

The table below is intentionally blunt: it’s meant to help you decide what to diligence first.

| Criterion | Modular / prefabricated (owner-operated) | Colocation (retail/wholesale) | Retrofit (reuse an existing building) |
|---|---|---|---|
| Lead time to first IT load | Medium (can be 4–6 months if site prep/permitting runs in parallel and long-lead power gear is available) | Fastest if capacity is available; the risk shifts to connectivity and migration execution | Slowest / variable; can be quick for limited upgrades, but high risk of overruns for power/cooling modernization |
| Schedule risk | Medium (site readiness + logistics + commissioning gates) | Medium (provider capacity certainty + carrier lead times + contractual boundaries) | High (unknown conditions + sequencing + commissioning + downtime constraints) |
| Density for ≥20 kW/rack | Good if engineered for it (often requires row-based or liquid cooling) | Depends on the facility design and the contract for high-density support | Often limited by legacy power/cooling distribution unless you invest heavily |
| Scalability | Good for incremental growth if the architecture is modular and standardized | Good for scaling within a metro if inventory exists; multi-site consistency varies by provider | Limited by the building’s constraints; portfolio-scale repeatability is hard |
| Lifecycle cost (directionally) | CapEx heavy, but predictable once standardized; ongoing ops are yours | OpEx heavy; avoids CapEx but costs show up in recurring fees + cross-connects + services | Can look cheaper upfront, but overruns and operational disruption can dominate |

Key Takeaway: For a 4–6 month target, the decision is less about “modular vs colo vs retrofit” and more about what will be your first bottleneck: power train, permits, commissioning windows, or network circuits.

Lead time: what actually fits in 4–6 months

A 4–6 month plan usually succeeds when you can parallelize workstreams and avoid long-lead surprises.

Colocation: fastest when inventory and circuits line up

Colocation can be the shortest path to first IT load because the building, MEP infrastructure, and security model already exist. But enterprise teams routinely miss one timeline item: carrier circuit provisioning.

Data Center Knowledge notes that carrier circuits can take ~90 days—often longer than cabinet installation, power strip energization, and security access, which may be completed in under a month (see Data Center Knowledge’s “Four Common Mistakes New Colocation Data Center Users Make”).

Practical implication: if your go-live depends on new circuits, don’t treat connectivity as a parallel nice-to-have. It can become the critical path.
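
As a minimal sketch of that point, assume every colocation workstream starts on the same day and runs in parallel, so go-live is set by the longest one. Only the ~90-day carrier figure follows the Data Center Knowledge estimate cited above; the other durations are illustrative placeholders chosen to sit under the "under a month" bound, not measured data.

```python
# Parallel colocation workstreams, durations in days.
# Only the ~90-day carrier figure comes from the cited article;
# the other values are hypothetical placeholders (each under a month).
workstreams = {
    "cabinet_installation": 21,
    "power_energization": 14,
    "security_access": 7,
    "carrier_circuits": 90,
}


def critical_workstream(durations):
    """Return (name, days) of the longest workstream, assuming all start on day 0."""
    name = max(durations, key=durations.get)
    return name, durations[name]


name, days = critical_workstream(workstreams)
print(f"Go-live is gated by {name}: {days} days")
```

With these assumed numbers, shortening any of the facility tasks changes nothing; only ordering circuits earlier moves the date.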

Modular / prefabricated: schedule compression comes from parallel work

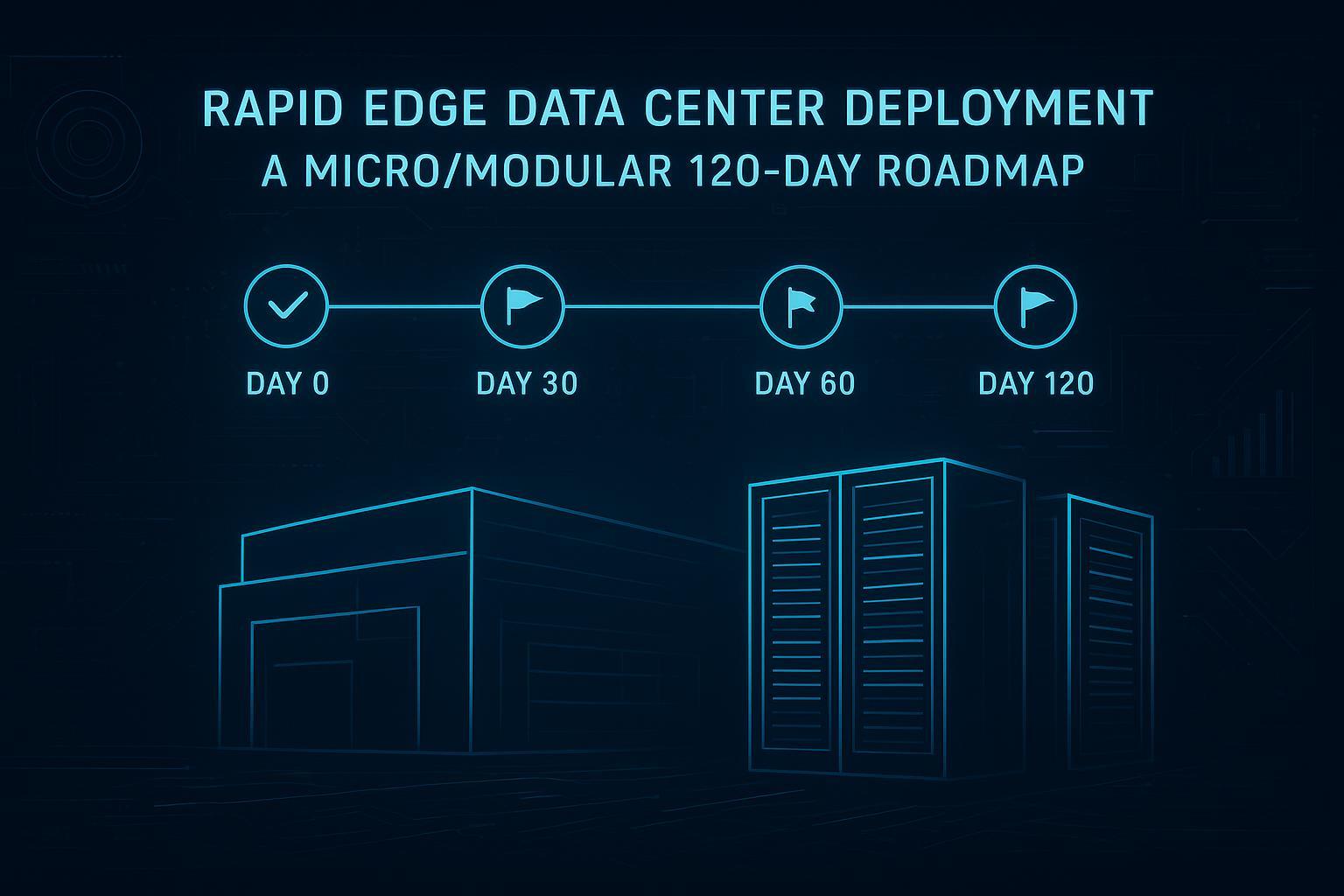

Modular timelines can compress when site preparation and permitting run in parallel with factory build and integration. AWS’s modular data center deployment guidance shows a staged model: order → site assessment/site prep → build → delivery → installation and commissioning. On-site installation and commissioning is often described as taking 1–2 weeks once delivery begins, provided site readiness is met (AWS Public Sector: “Deploying AWS Modular Data Center… delivery and installation”).

Practical implication: modular schedules are won or lost before the modules arrive. If the pad, utility terminations, and access plan aren’t ready, delivery doesn’t equal progress.
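
The staged model above can be sketched as a small dependency graph whose longest path is the critical path. This is an illustration under stated assumptions, not a vendor schedule: the task names loosely follow the stages described above, `permitting` is added as a separate site-side task, and every duration is hypothetical except `install_commissioning`, which is set to the upper end of the 1–2 week range cited.

```python
from functools import lru_cache

# task: (duration_days, prerequisites). All durations are assumed values.
tasks = {
    "order":                 (0,  []),
    "site_assessment":       (14, ["order"]),
    "permitting":            (60, ["site_assessment"]),
    "site_prep":             (45, ["site_assessment"]),
    "factory_build":         (90, ["order"]),
    "delivery":              (10, ["factory_build"]),
    "install_commissioning": (14, ["delivery", "site_prep", "permitting"]),
}


@lru_cache(maxsize=None)
def earliest_finish(task):
    """Earliest finish day: own duration plus the latest-finishing prerequisite."""
    duration, deps = tasks[task]
    return duration + max((earliest_finish(d) for d in deps), default=0)


def critical_path(task):
    """Walk back from a task through whichever prerequisite finishes last."""
    path = [task]
    while tasks[path[-1]][1]:
        path.append(max(tasks[path[-1]][1], key=earliest_finish))
    return list(reversed(path))


print(earliest_finish("install_commissioning"))
print(critical_path("install_commissioning"))
```

In this sketch the factory build sets the pace, but raising the assumed permitting duration past 86 days moves the critical path to the site side, which is the scenario the practical implication warns about.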

Retrofit: the timeline is usually driven by unknowns and sequencing

Retrofitting an existing building can be attractive when land is constrained or you need to reuse an owned asset. The downside is that retrofit schedules have high variance—you’re integrating new power/cooling and controls into an environment with constraints you often only discover midstream.

Alice Technologies describes how data centre upgrade delays can drive significant cost impact and emphasizes the need for planning and flexible scheduling to manage uncertainty (Alice Technologies: “Why data centre upgrade delays are costing millions”).

Practical implication: if you need 4–6 months with high confidence, retrofit is usually the “hard mode” option unless the scope is tightly bounded (e.g., limited electrical redistribution rather than plant-level upgrades).

Risk: where projects slip (and how to de-risk)

For a 4–6 month window, you don’t have time for “we’ll solve it later.” You need to identify the few risks that commonly blow up schedules.

Modular risk checklist

Site readiness: pad dimensions, weight, clearances, utility tie-ins, and local permitting must be complete before delivery.

Long-lead power gear: transformers, switchgear, generators, and UPS capacity can set the pace regardless of modularity.

Commissioning gates: define what “first IT load” requires (e.g., N+1 power path validated, cooling performance under load).

Logistics: crane windows, access routes, and weather constraints are easy to underestimate.

Colocation risk checklist

Inventory reality vs sales promises: verify committed kW, delivery date, and density constraints in writing.

Carrier lead time: treat it as a first-class schedule driver.

SLA boundaries: confirm what the provider is “on the hook” for (power, cooling, temperature/humidity ranges) and how planned maintenance is handled.

Data Center Knowledge specifically flags that new colo customers often underestimate the move-in timeline and misunderstand SLA scope and hidden costs. These issues can surface late if you skip early diligence (Data Center Knowledge: “Four Common Mistakes New Colocation Data Center Users Make”).

Retrofit risk checklist

Unknown conditions: electrical one-lines that don’t match reality, structural constraints, cable pathways, chilled water limitations.

Sequencing with live operations: change windows and downtime constraints can force serial execution.

Code and permitting triggers: modernization may trigger broader compliance work.

Commissioning scope creep: issues discovered during integrated testing can cascade.

⚠️ Warning: In retrofit-heavy projects, the risk isn’t only technical—it’s that your schedule becomes a chain of dependencies you can’t parallelize.
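
To make the warning concrete, the sketch below compares the odds of hitting a 180-day target when the same three uncertain tasks run as a serial chain versus as independent parallel workstreams. It is a toy Monte Carlo under loud assumptions: the triangular (low, mode, high) ranges are invented for illustration and do not describe any real project.

```python
import random


def simulate(n_trials=10_000, deadline_days=180, seed=0):
    """Compare on-time odds when the same three tasks run serially vs in parallel.

    Durations are drawn from triangular distributions whose (low, mode, high)
    values are hypothetical, not measured data.
    """
    rng = random.Random(seed)
    tasks = [(30, 45, 90), (40, 60, 120), (20, 30, 75)]  # assumed day ranges
    serial_hits = parallel_hits = 0
    for _ in range(n_trials):
        # random.triangular takes (low, high, mode) in that order.
        durations = [rng.triangular(low, high, mode) for low, mode, high in tasks]
        serial_hits += sum(durations) <= deadline_days    # dependency chain
        parallel_hits += max(durations) <= deadline_days  # independent workstreams
    return serial_hits / n_trials, parallel_hits / n_trials


p_serial, p_parallel = simulate()
print(f"P(on time) serial: {p_serial:.0%}, parallel: {p_parallel:.0%}")
```

Because a serial chain adds up every task's overrun while parallel work only waits for the slowest task, the serial case can never do better in this model; how much worse it does depends entirely on the assumed ranges.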

Density: can you really support ≥20 kW/rack?

High-density racks are no longer exotic, but they’re still not “free.” The question is less whether 20 kW/rack is possible and more where the constraints show up.

Colocation density realities

Many facilities can support higher densities, but support is rarely uniform across every cage and every row. Treat these as diligence items:

Per-cabinet and per-row kW limits (and whether burst or diversity is allowed)

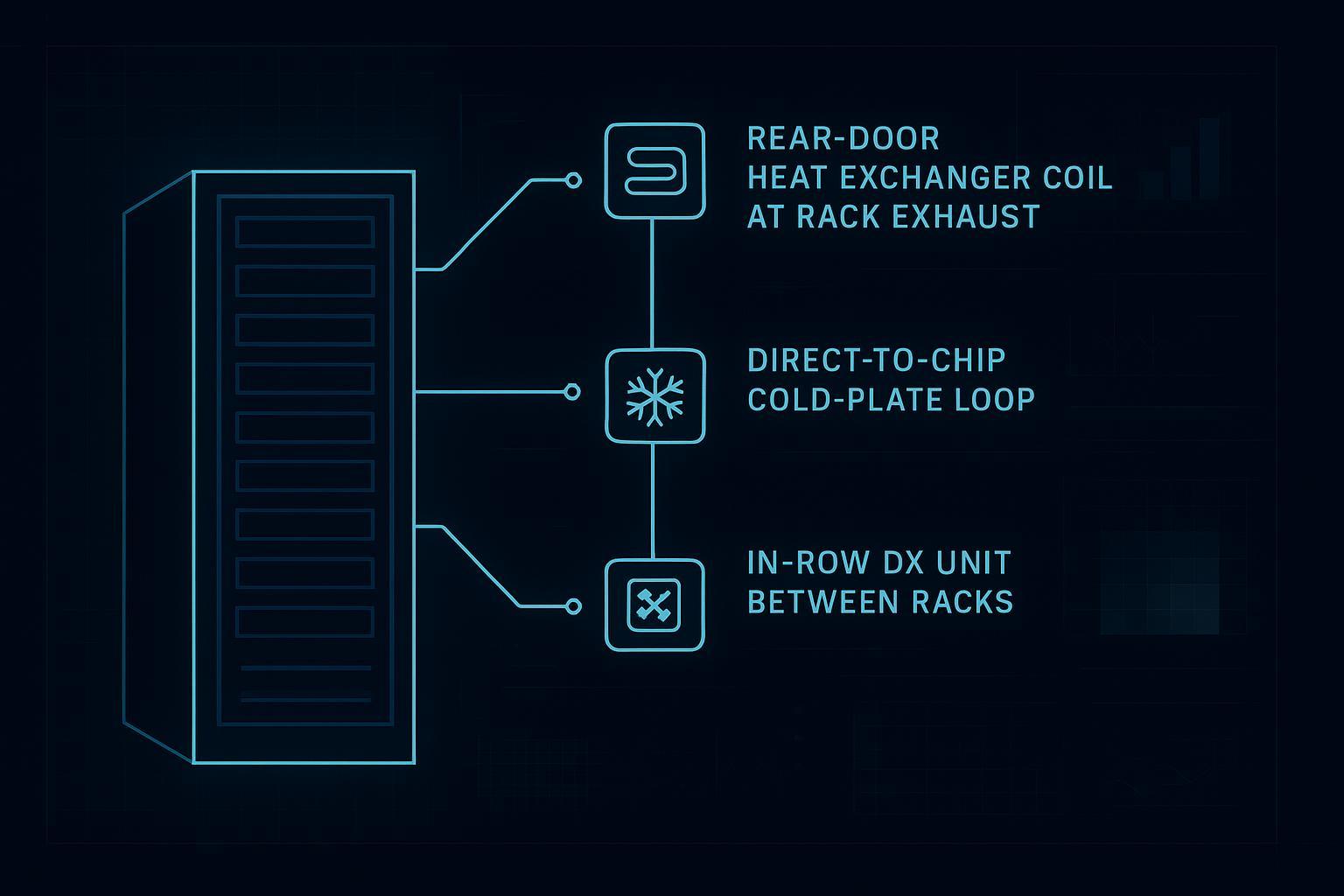

Cooling method available for your space (air, rear-door HX, in-row, liquid options)

Who owns the design for high-density deployment (provider vs tenant)

A neutral framing to keep in mind: colocation generally trades some customization for speed and professional operations, while requiring you to design within the provider’s limits (see Umbrex’s “Types of Data Centers—Industry Taxonomy”).
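
The kW limits in the diligence items above can be checked mechanically once the provider states them in writing. The following is a minimal sketch, assuming limits are expressed per cabinet and per row; the `Rack` type, the function name, and the limit values are all hypothetical and not taken from any provider's contract.

```python
from dataclasses import dataclass


@dataclass
class Rack:
    row: str
    kw: float


def density_violations(racks, per_cabinet_kw, per_row_kw):
    """Return human-readable violations of per-cabinet and per-row kW limits."""
    problems = []
    row_totals = {}
    for i, rack in enumerate(racks):
        if rack.kw > per_cabinet_kw:
            problems.append(
                f"rack {i} in row {rack.row}: {rack.kw} kW > {per_cabinet_kw} kW cabinet limit"
            )
        row_totals[rack.row] = row_totals.get(rack.row, 0.0) + rack.kw
    for row, total in row_totals.items():
        if total > per_row_kw:
            problems.append(f"row {row}: {total} kW > {per_row_kw} kW row limit")
    return problems


# Hypothetical plan: ten 20 kW racks (200 kW) split over two rows,
# checked against assumed limits of 20 kW per cabinet and 80 kW per row.
plan = [Rack("A", 20.0)] * 5 + [Rack("B", 20.0)] * 5
for problem in density_violations(plan, per_cabinet_kw=20.0, per_row_kw=80.0):
    print(problem)
```

In this example every rack passes the cabinet limit, yet both rows exceed the row limit, which is the kind of non-uniform constraint worth surfacing before signing.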

Modular density realities

Modular architectures can be designed around higher-density blocks—especially if you standardize rack types, containment, distribution, and cooling assumptions. The risk is that “high density” becomes a late requirement; if it’s known upfront, it’s easier to engineer the module design around it.

Retrofit density realities

Retrofits often struggle at 20 kW/rack because the legacy constraints are coupled:

Power distribution may not support per-row or per-rack loads without major rework

Cooling plant capacity may exist, but distribution (CRAH placement, airflow, hot spots, containment) may not

Structural and space constraints limit adding new cooling and distribution equipment

If high density is mandatory, retrofit may still work, but it tends to be the most engineering-intensive path.

Scalability: what happens after the first 200 kW?

The 4–6 month push is usually just the first milestone. Your choice should account for the next 12–24 months.

Colocation scales well when the provider can deliver incremental kW in the same campus/metro. The risk is inconsistency across sites if you expand into multiple metros with different facility designs and contract terms.

Modular scales well when you standardize your block design (repeatable electrical/cooling/monitoring pattern). The risk is that site work becomes the recurring bottleneck at every new location.

Retrofit scales worst across a portfolio because each building is its own set of constraints.

Lifecycle cost: the categories that actually move the needle

Avoid false precision here. For most enterprise teams, lifecycle cost is driven by a small number of categories that differ by path:

Colocation lifecycle cost drivers

Recurring fees for space and power (and any demand-based pricing)

Cross-connect and connectivity charges

Remote hands and special services

Contract terms for expansion, early exit, and SLA remedies

Modular lifecycle cost drivers

Capital costs for the block (modules + power/cooling distribution + controls)

Operational staffing model and spares

Maintenance contracts and refresh cycles

Energy efficiency outcomes that depend on your design and operations maturity

Retrofit lifecycle cost drivers

Scope creep from unknown conditions

Temporary measures to keep operations stable during upgrades

Efficiency and reliability improvements vs the cost and risk of disruption
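
In keeping with the caution about false precision, the sketch below shows only the structure of a CapEx-heavy versus OpEx-heavy comparison: a one-time cost plus a recurring cost, and the month at which the two cumulative totals cross. Every figure is a placeholder in arbitrary currency units, chosen purely so the arithmetic is visible; none of it is market data, and the retrofit path is left out because its dominant driver (scope creep) does not reduce to two numbers.

```python
import math


def cumulative_cost(upfront, monthly, months):
    """Total spend after a number of months: one-time cost plus recurring cost."""
    return upfront + monthly * months


def breakeven_month(capex_path, opex_path):
    """First whole month at which the CapEx-heavy path is no more expensive.

    Each path is an (upfront, monthly) tuple. Returns None if the CapEx-heavy
    path never catches up because its monthly cost is not lower.
    """
    (up_a, mo_a), (up_b, mo_b) = capex_path, opex_path
    if mo_a >= mo_b:
        return None
    return max(0, math.ceil((up_a - up_b) / (mo_b - mo_a)))


# Placeholder figures in arbitrary currency units; NOT market data.
modular = (5_000_000, 60_000)  # assumed CapEx, assumed monthly operations
colo = (250_000, 160_000)      # assumed setup fees, assumed recurring fees

print(breakeven_month(modular, colo))
```

Swapping in your own quotes for the two tuples is the point of the exercise; the crossover month is highly sensitive to the recurring-fee assumption.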

Who should choose which (for a 4–6 month target)

Use this section as a quick self-selection guide.

Choose colocation when…

You can secure committed capacity quickly and your connectivity plan is feasible within the window.

Your priority is speed and operational maturity (security, uptime processes), and you can accept provider constraints.

Choose modular / prefabricated when…

You need more control over design (including higher density assumptions) than colo can reliably offer.

You can run site prep, permitting, and procurement in parallel and you’re ready to manage commissioning gates.

Choose retrofit when…

You must reuse an existing building and the upgrade scope is well-bounded (or you accept higher schedule variance).

You have operational constraints that make migration to colo difficult, and you have strong facilities/commissioning capability.

Diligence checklist: how to de-risk a 4–6 month plan

Treat this as the “procurement-ready” section—questions you can assign to owners.

Colocation diligence (contract + operations)

On what date will the committed kW be available, and what happens if delivery slips?

What are the per-cabinet and per-row density limits for the specific space you’re contracting?

What’s the realistic lead time for carrier circuits and cross-connects for your carriers?

How do SLAs treat planned maintenance vs unplanned outages, and what are the remedies?

What services are included vs billed (remote hands, install support, after-hours access)?

Modular diligence (schedule + interfaces)

What’s the site readiness checklist (pad, utilities, clearances), and who owns each item?

What are the long-lead components (transformers/switchgear/genset/UPS), and what are the confirmed lead times?

What are the commissioning gates for “first IT load,” and what is the test evidence package?

What compliance documentation is available (certifications, test reports, QA records)?

Retrofit diligence (scope control + commissioning)

What is known vs unknown (as-built accuracy, electrical one-lines, cooling distribution constraints)?

What work must occur in change windows, and what’s the fallback plan if windows are missed?

What permits and code triggers apply, and what are the likely approval lead times?

What is the integrated commissioning plan (and who signs off)?

Next step

If you want a tighter answer for your environment, the most effective next step is a one-page “go-live critical path” built from your constraints (power availability, density targets, and connectivity requirements).

Request a commissioning & go-live checklist for a 4–6 month plan.

Appendix: Coolnetpower modular reference pages (neutral)

The following pages are provided as product reference links for readers who want an example of modular/micro/containerized solution categories; they are not intended as a claim of universal fit.