Key takeaways

“120 days” is mostly a parallelization and standardization problem—not a single construction sprint.

Your schedule usually lives or dies on the same critical path: power availability + long-lead electrical gear + commissioning readiness.

Use gating milestones (design → factory testing → install verification → startup → functional testing → integrated testing → handover) to prevent late surprises.

Build a standardization kit so deployment #2 is meaningfully faster than deployment #1.

What this roadmap covers (and what it doesn’t)

This guide is a timeline-driven playbook for deploying a small edge data center of roughly 0.5–2 MW, built from micro data centers (integrated cabinets) and/or modular or containerized infrastructure. The steps and gates below are designed to be reused from site to site.

It assumes you’re trying to reach “ready for production workloads,” including monitoring and an operations handover.

What it doesn’t cover: grid-upgrade projects, greenfield substations, and highly bespoke one-off designs. Those can be great projects, but they rarely fit inside a 120-day window.

Quick definitions

Micro data center: an integrated rack/cabinet that bundles power, cooling, and monitoring.

Modular/prefabricated data center: factory-built modules integrated on-site.

Containerized data center: an ISO-container style integrated module.

Commissioning gates: staged verification with clear “go/no-go” evidence.

The 120-day readiness test (Week 0 gate)

Before you publish or commit to a “120-day” date, run a short feasibility gate. It’s better to reset expectations on Day 5 than to explain a slip on Day 95.

Gate: Is 120 days realistic for this site?

Inputs

Target IT load range and rack density (now, and in 12–24 months).

Redundancy target (N, N+1, 2N) and availability expectations.

Power path: who owns it, what’s already available, and what must be delivered.

Site constraints: footprint, access, crane/rigging path, transport restrictions.

Actions

Identify your critical path (usually power + long-lead equipment + commissioning readiness).

Choose a repeatable “standard block” (reference design) rather than custom engineering for each site.

Outputs

Go/No-Go: proceed with a 120-day plan, or move to a phased plan with defined pre-work.

Risk register v0 with named owners.

Done when…

Each gate has a named owner and an evidence list.

Baseline schedule is approved and change control rules are set.
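Identifying the critical path is a longest-path calculation over task dependencies. Below is a minimal Python sketch (3.9+, using the standard-library `graphlib` module) over a hypothetical task network. The task names and durations are illustrative placeholders, not vendor lead times. It runs a forward pass for earliest finish dates, then walks back through the predecessor that drove each start.

```python
from graphlib import TopologicalSorter

# Hypothetical task network: name -> (duration in days, predecessor tasks).
# Durations are illustrative placeholders, not vendor lead times.
TASKS = {
    "freeze_requirements": (10, []),
    "utility_agreement": (20, ["freeze_requirements"]),
    "switchgear_lead_time": (60, ["freeze_requirements"]),
    "factory_build_fat": (45, ["freeze_requirements"]),
    "site_prep": (35, ["utility_agreement"]),
    "install_verification": (15, ["switchgear_lead_time", "factory_build_fat", "site_prep"]),
    "startup_functional": (20, ["install_verification"]),
    "ist_handover": (15, ["startup_functional"]),
}


def critical_path(tasks):
    """Forward pass for earliest finish, then walk back the driving predecessors."""
    graph = {name: preds for name, (_, preds) in tasks.items()}
    finish, driver = {}, {}
    for name in TopologicalSorter(graph).static_order():
        duration, preds = tasks[name]
        start, driver[name] = 0, None
        for p in preds:
            if finish[p] > start:  # the latest-finishing predecessor drives the start
                start, driver[name] = finish[p], p
        finish[name] = start + duration
    node = max(finish, key=finish.get)  # task that finishes last
    total = finish[node]
    path = []
    while node is not None:
        path.append(node)
        node = driver[node]
    return total, path[::-1]
```

With these placeholder numbers, `critical_path(TASKS)` returns a 120-day total driven by `freeze_requirements`, `switchgear_lead_time`, `install_verification`, `startup_functional`, and `ist_handover`: the switchgear lead time, not the 45-day factory build, sets the finish date. A full scheduling tool would also compute float with a backward pass; this sketch only reports the driving chain.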

120-day feasibility quick screen

| Constraint | Green | Yellow | Red (likely breaks 120 days) | Mitigation example |
|---|---|---|---|---|
| Utility power | Existing capacity confirmed | Capacity uncertain | Upgrade/interconnect queue | Phase load, alternate site, temporary power |
| Long-lead gear | Allocated/available | Order pending | Lead time exceeds window | Framework agreements, approved alternates |
| Permitting/AHJ | Known path | Partial clarity | Novel jurisdiction / opposition | Standardized packages, early AHJ reviews |
| Logistics | Clear access | Some constraints | Major transport/crane limits | Smaller modules, route survey, staged delivery |
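The quick screen can be rolled up mechanically. The sketch below assumes a simple rule that the table implies but does not spell out: any Red constraint moves the site to a phased plan, and every Red or Yellow constraint becomes a risk register v0 entry that needs a named owner. `Rating` and `quick_screen` are hypothetical names.

```python
from enum import Enum


class Rating(Enum):
    GREEN = "green"
    YELLOW = "yellow"
    RED = "red"


def quick_screen(ratings):
    """Roll the Week 0 quick screen up into a decision plus risk register v0 entries.

    Assumed rule: any Red constraint moves the site to a phased plan;
    every Red or Yellow constraint becomes a risk that needs a named owner.
    """
    reds = sorted(c for c, r in ratings.items() if r is Rating.RED)
    yellows = sorted(c for c, r in ratings.items() if r is Rating.YELLOW)
    decision = "phased plan with defined pre-work" if reds else "proceed with 120-day plan"
    return decision, reds + yellows
```

Reds are listed ahead of Yellows in the returned risk list so the constraints that break the 120-day window get owners first.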

The 120-day roadmap at a glance (timeline + gates)

This plan works best when you run four parallel tracks:

Site + permits

Utility + electrical

Factory build

IT/ops readiness + commissioning

Milestone map (illustrative)

| Day range | Primary objective | Gate | Primary artifacts |
|---|---|---|---|
| 0–10 | Freeze requirements + reference design | Gate A (planning complete) | Owner requirements, commissioning plan, single points of failure review |
| 10–30 | Site prep + permits in motion; procurement locked | Gate B (procurement release) | IFC package, permit submissions, long-lead POs |
| 20–70 | Factory build + factory acceptance testing | Gate C (factory tested) | FAT procedures + reports, QA/QC pack |
| 45–85 | Delivery + install verification | Gate D (installed and verified) | Installation checklists, as-builts, punch list |
| 70–100 | Startup/energization + functional tests | Gate E (functional) | Startup logs, functional test results |
| 95–115 | Integrated systems testing | Gate F (integrated) | IST scripts + results, failover evidence, issue closure |
| 110–120 | Closeout + handover | Gate G (handover) | O&M manuals, training records, acceptance certificate |
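The milestone map only fits in 120 days because its windows overlap. A short calculation over the table's own day ranges makes that explicit. The function names are illustrative.

```python
# Day ranges copied from the illustrative milestone map: gate -> (start day, end day).
PHASES = {
    "A": (0, 10),
    "B": (10, 30),
    "C": (20, 70),
    "D": (45, 85),
    "E": (70, 100),
    "F": (95, 115),
    "G": (110, 120),
}


def schedule_summary(phases):
    """Compare back-to-back (sequential) duration with the overlapped calendar span."""
    sequential = sum(end - start for start, end in phases.values())
    calendar = max(e for _, e in phases.values()) - min(s for s, _ in phases.values())
    return sequential, calendar


def adjacent_overlaps(phases):
    """Days each phase overlaps the next one (phases sorted by start day)."""
    ordered = sorted(phases.items(), key=lambda kv: kv[1][0])
    result = []
    for (a, (_, a_end)), (b, (b_start, _)) in zip(ordered, ordered[1:]):
        if b_start < a_end:
            result.append((a, b, a_end - b_start))
    return result
```

`schedule_summary(PHASES)` returns `(180, 120)`: laid end to end, the seven windows need 180 days, and the largest adjacent overlap is the 25 days between factory testing (C) and install verification (D). Removing all overlap would stretch the same work to 180 days.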

One practical way to structure these gates is the Level 0–6 commissioning sequence, as described in Construct & Commission’s overview of data center commissioning levels.

Step-by-step: Day 0 to Day 120 (with gates, risks, and mitigations)

Step 1 (Day 0–10): Freeze the “standard site” requirements

Inputs: load profile, density targets, redundancy, environmental range, security/compliance constraints.

Actions

Convert requirements into a repeatable reference design (even if you’ll allow controlled variants later).

Decide what’s standardized vs. site-variable (for example: voltage, seismic, fire code details).

Outputs: requirements pack, reference architecture v1.

Done when… requirements are approved by IT, facilities, security, and procurement.

Common delay risk: late density changes.

Mitigation: define pre-approved variants (A/B/C) with explicit change control.
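Pre-approved variants work best when the selection rule is explicit. A minimal sketch, assuming each variant is defined only by a maximum rack density (the kW figures are hypothetical placeholders): the smallest variant that covers the request wins, and anything outside every envelope goes to change control.

```python
# Hypothetical pre-approved variants: name -> maximum design density in kW per rack.
# The values are placeholders; use the envelopes from your own reference design.
VARIANTS = {"A": 10, "B": 20, "C": 35}


def select_variant(density_kw_per_rack):
    """Return the smallest pre-approved variant that covers the requested density.

    None means the request is outside every approved envelope and must go
    through formal change control instead of being absorbed silently.
    """
    for name, max_kw in sorted(VARIANTS.items(), key=lambda kv: kv[1]):
        if density_kw_per_rack <= max_kw:
            return name
    return None
```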

Step 2 (Day 0–10): Commissioning Level 0 — plan verification early

This is where many edge data center commissioning programs succeed or fail: define the gates and their evidence before equipment arrives.

Commissioning is not a post-construction formality. It’s how you keep a compressed schedule from turning into a late-stage rework loop.

Inputs: design intent, acceptance criteria.

Actions

Define commissioning responsibilities and evidence requirements for each gate.

Decide what must be proven in factory tests vs. on-site.

Outputs: commissioning plan, test matrix.

Done when… every later gate has an owner, an artifact list, and a sign-off rule.
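Level 0 amounts to checking that each gate definition is complete before any evidence exists. A minimal sketch of one way to model that in Python; the `Gate` class, its fields, and the "every listed role must sign" rule are assumptions for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Gate:
    name: str
    owner: str
    artifacts: list          # evidence required before sign-off
    signoff_roles: list      # hypothetical sign-off rule: every listed role must sign
    delivered: set = field(default_factory=set)
    signed_by: set = field(default_factory=set)

    def plan_gaps(self):
        """Level 0 check: what is still undefined for this gate?"""
        checks = {"owner": self.owner, "artifacts": self.artifacts, "sign-off rule": self.signoff_roles}
        return [label for label, value in checks.items() if not value]

    def can_pass(self):
        """Gate passes only when all evidence is in and every required role has signed."""
        return set(self.artifacts) <= self.delivered and set(self.signoff_roles) <= self.signed_by
```

For example, a Gate C defined with owner "Cx lead", artifacts `["FAT report", "QA/QC pack"]`, and sign-off roles `["Cx lead", "owner rep"]` has no plan gaps, but `can_pass()` stays false until both reports are delivered and both roles have signed.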

Step 3 (Day 5–30): Lock the power path (often the true critical path)

Inputs: utility requirements, single-line diagram, transformer/switchgear/UPS requirements.

Actions

Confirm the energization plan and utility milestones.

Release long-lead procurement early (and validate alternates).

Outputs: power plan, utility milestone map, procurement release list.

Done when… the energization date is backed by a credible, written plan—not a hope.

Common delay risk: utility constraints or long lead-time electrical equipment.

Mitigation: approved alternates and phased go-live scenarios (partial load first).
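Validating alternates is easier when the arrival date is checked the same way for every option. A minimal sketch, assuming lead times are quoted in days from PO release and options are listed in preference order; supplier labels and numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SourceOption:
    supplier: str        # hypothetical supplier label
    lead_time_days: int  # quoted lead time from PO release to delivery
    approved: bool       # primary or pre-approved alternate


def pick_source(options, po_day, need_by_day):
    """Return the first approved option (in preference order) that arrives in time.

    None signals that no approved source meets the date, which is the trigger
    to fall back to a phased go-live scenario.
    """
    for opt in options:
        if opt.approved and po_day + opt.lead_time_days <= need_by_day:
            return opt.supplier
    return None
```

With options `primary` (90 days, approved), `alt-1` (50 days, approved), and `alt-2` (30 days, not approved), a PO on day 10 against a day-70 need-by date returns `alt-1`. The same PO on day 30 returns `None`, because the only option fast enough has not been approved.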

Step 4 (Day 10–30): Permitting/AHJ and compliance mapping (without slowing the factory)

Inputs: jurisdiction requirements, fire/life safety approach, security constraints.

Actions

Submit permits early using standardized packages.

Align documentation with acceptance gates (so artifacts don’t arrive late).

Outputs: permit submission set, compliance checklist.

Done when… there are no “unknown approvals” left on the critical path.

Step 5 (Day 20–70): Factory build + Level 1 (FAT)

In a prefabricated data center commissioning approach, the more you can verify in factory conditions (with repeatable scripts), the less schedule risk you carry into on-site integration.

Inputs: approved drawings, BOM, test procedures.

Actions

Execute factory acceptance tests for power, cooling, monitoring, alarms, and control sequences.

Capture evidence in a form that’s reusable for handover and future sites.

Outputs: FAT reports, QA/QC pack, configuration baselines.

Done when… FAT deviations are dispositioned and the ship-ready baseline is frozen.
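Two parts of this gate can be automated: confirming every FAT deviation has a disposition, and fingerprinting the frozen configuration baseline so the site can detect drift later. A minimal sketch using Python's standard `hashlib` and `json` modules; the disposition codes and configuration keys are hypothetical, and hashing a canonical JSON form is one option among several.

```python
import hashlib
import json

# Hypothetical disposition codes for FAT deviations.
DISPOSITIONS = {"corrected", "accepted-as-is", "deferred-to-site"}


def undispositioned(deviations):
    """List deviation IDs that still lack a recognized disposition."""
    return sorted(d for d, disp in deviations.items() if disp not in DISPOSITIONS)


def baseline_fingerprint(config):
    """SHA-256 of a canonical JSON form, so key order does not change the hash."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Recording the fingerprint when the ship-ready baseline is frozen, then recomputing it on site, gives a quick yes/no answer to "did anything change in transit or during install?" before startup.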

Step 6 (Day 25–60): Site preparation in parallel

Inputs: civil drawings, logistics plan.

Actions

Prepare pads/foundations, conduits, grounding, and fiber entry.

Validate crane access and safety plans.

Outputs: “site ready-to-receive” sign-off.

Done when… delivery can happen without rework.

Step 7 (Day 45–85): Delivery + install verification (Level 2)

Inputs: FAT pack, shipping plan, site readiness.

Actions

Place modules and verify installation against drawings and checklists.

Produce as-builts and a controlled punch list.

Outputs: installation verification records, as-builts, punch list.

Done when… the punch list is within an agreed threshold and no open item blocks startup.
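The Gate D rule can be written down so it is not renegotiated at the gate meeting. A minimal sketch, assuming each punch item carries a "blocks startup" flag and the agreed threshold is a simple count of open items (both are assumptions).

```python
from dataclasses import dataclass


@dataclass
class PunchItem:
    ident: str
    blocks_startup: bool  # would this item prevent safe energization?
    closed: bool = False


def gate_d_ready(items, max_open):
    """Gate D check: no open startup blockers and open items within the agreed threshold."""
    open_items = [i for i in items if not i.closed]
    blockers = [i.ident for i in open_items if i.blocks_startup]
    return (not blockers and len(open_items) <= max_open), blockers
```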

Step 8 (Day 70–100): Startup/energization (Level 3)

Inputs: energization plan, safety procedures.

Actions

Energize systems and validate settings and interlocks.

Confirm monitoring baselines.

Outputs: startup logs, settings validation.

Done when… stable energized operation is maintained for a defined duration.
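"A defined duration" is easy to check against logged readings. A minimal sketch, assuming one reading per minute and a hypothetical tolerance band; a gap in the log restarts the clock rather than counting as stable time.

```python
def stable_for(samples, low, high, required_minutes):
    """True if readings stay within [low, high] for required_minutes consecutive minutes.

    samples: (minute, value) pairs in time order, nominally one per minute.
    A missing minute or an out-of-band reading restarts the stability window.
    """
    run_start = None
    prev_minute = None
    for minute, value in samples:
        in_band = low <= value <= high
        contiguous = prev_minute is not None and minute == prev_minute + 1
        if not in_band:
            run_start = None
        elif run_start is None or not contiguous:
            run_start = minute
        prev_minute = minute
        if run_start is not None and minute - run_start + 1 >= required_minutes:
            return True
    return False
```

For example, `stable_for([(0, 400), (1, 401), (2, 399)], 395, 405, 3)` is true, while inserting a single 420 reading in the middle would restart the window.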

Step 9 (Day 75–105): Functional testing (Level 4)

Inputs: functional test scripts, alarm matrix.

Actions

Test operating modes, alarms, sequences.

Train the facilities/ops team on normal operation.

Outputs: functional test reports, training records.

Done when… functional requirements are met and issues are closed or formally accepted.
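An alarm matrix is effectively a lookup from test scenario to the alarms that must appear. A minimal sketch that compares observed alarms with expected ones; the scenario and alarm names are hypothetical.

```python
# Hypothetical alarm matrix: test scenario -> alarms that must be raised.
ALARM_MATRIX = {
    "cooling_unit_fail": {"COOLING_FAIL", "HIGH_INLET_TEMP"},
    "door_open": {"DOOR_OPEN"},
    "ups_on_battery": {"UPS_ON_BATTERY"},
}


def check_alarms(scenario, observed):
    """Compare alarms observed during a functional test with the matrix."""
    expected = ALARM_MATRIX[scenario]
    observed = set(observed)
    return {
        "missing": sorted(expected - observed),
        "unexpected": sorted(observed - expected),
    }
```

Reporting "unexpected" alarms as well as "missing" ones catches nuisance alarms that would otherwise train the ops team to ignore the panel.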

Step 10 (Day 95–115): Integrated Systems Testing (Level 5)

This is where fast builds most often fail: each subsystem works, but the system-of-systems doesn’t behave under stress.

Inputs: IST scripts, load-bank plan.

Actions

Test cross-system events (loss of utility, failover behavior, thermal excursions).

Validate recovery procedures and operational decision thresholds.

Outputs: IST results, resilience evidence, updated runbooks.

Done when… go-live criteria are achieved and signed off.
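Cross-system events need a pass rule agreed before the test, not after. A minimal sketch for a single loss-of-utility run, assuming a hypothetical event log in seconds and an illustrative decision threshold: IT load must never drop, and the generator must be on load within half the UPS runtime measured at the test load. The 50% margin is a placeholder, not a recommendation.

```python
def loss_of_utility_passed(events, ups_runtime_s, margin=0.5):
    """Evaluate one loss-of-utility IST run from a hypothetical event log.

    events maps event names to seconds since test start. Assumed pass rule:
    the IT load never drops, and the generator is on load within
    margin x the UPS runtime measured at the test load.
    """
    if "it_load_dropped" in events:
        return False
    transfer_s = events["generator_on_load"] - events["utility_lost"]
    return transfer_s <= ups_runtime_s * margin
```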

Step 11 (Day 110–120): Closeout + operations handover (Level 6)

Inputs: final documentation, warranty, spares list.

Actions

Deliver the handover package (O&M manuals, as-builts, warranties).

Complete training, walkthrough, and lessons learned.

Outputs: handover pack and acceptance certificate.

Done when… the operations team can run the site without the build team.

Risk register: what usually delays a “fast” deployment

Most schedule slips are predictable if you track them early and assign owners.

| Risk | Where it hits | Early warning signal | Mitigation | Owner |
|---|---|---|---|---|
| Power not ready | Steps 3/8 | Utility milestones slip | Phase load; alternate feeds | PM |
| Long-lead electrical gear delay | Step 3 | Vendor lead times slip | Alternates; pre-buy | Procurement |
| AHJ rework | Step 4 | Repeated comments | Pre-submission reviews | Compliance |
| Integration defects | Steps 9–10 | Repeat failures | Expand FAT; earlier fixes | Cx lead |
| Site logistics disruption | Steps 6–7 | Access restrictions | Route surveys; staged delivery | Site lead |
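The early warning column only helps if someone watches it. A minimal sketch that turns register rows into thresholds on tracked metrics and routes triggered risks to their owners; the metric names and thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    owner: str
    signal: str        # tracked metric that acts as the early warning signal
    threshold: float   # hypothetical trigger level for that metric


REGISTER = [
    Risk("Power not ready", "PM", "utility_milestone_slip_days", 5),
    Risk("Long-lead electrical gear delay", "Procurement", "vendor_lead_time_slip_days", 7),
    Risk("AHJ rework", "Compliance", "ahj_comment_rounds", 1),
]


def triggered(register, metrics):
    """Return (owner, risk, value) for each risk whose signal exceeds its threshold."""
    alerts = []
    for risk in register:
        value = metrics.get(risk.signal)
        if value is not None and value > risk.threshold:
            alerts.append((risk.owner, risk.name, value))
    return sorted(alerts)
```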

The standardization kit (so deployment #2 is faster than deployment #1)

A 120-day roadmap is easier to repeat when the artifacts are repeatable. Think of this as a modular data center standardization kit: a set of controlled templates and test evidence you can carry from site to site.

What to standardize vs. what to localize

Standardize: reference design, module interfaces, test scripts, monitoring baselines.

Localize: voltage, seismic, fire code, noise limits, network carriers.
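The standardize/localize split can be enforced in configuration. A minimal sketch, assuming a flat dictionary for the standard block: site overrides are accepted only for the localizable items listed above, and anything else raises an error that should become a change request. Keys and values are hypothetical placeholders.

```python
# Hypothetical standard block: values are placeholders for your reference design.
STANDARD = {
    "reference_design": "v1",
    "module_interfaces": "rev-A",
    "test_scripts": "cx-pack-1",
    "monitoring_baseline": "mon-1",
}

# Site-variable items, mirroring the "Localize" list.
LOCALIZABLE = {"voltage", "seismic", "fire_code", "noise_limit", "network_carriers"}


def site_config(overrides):
    """Merge site-local values onto the standard; anything else needs change control."""
    illegal = sorted(set(overrides) - LOCALIZABLE)
    if illegal:
        raise ValueError(f"change request required for: {illegal}")
    return {**STANDARD, **overrides}
```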

Standardization kit checklist

Reference architecture pack

single-line diagrams

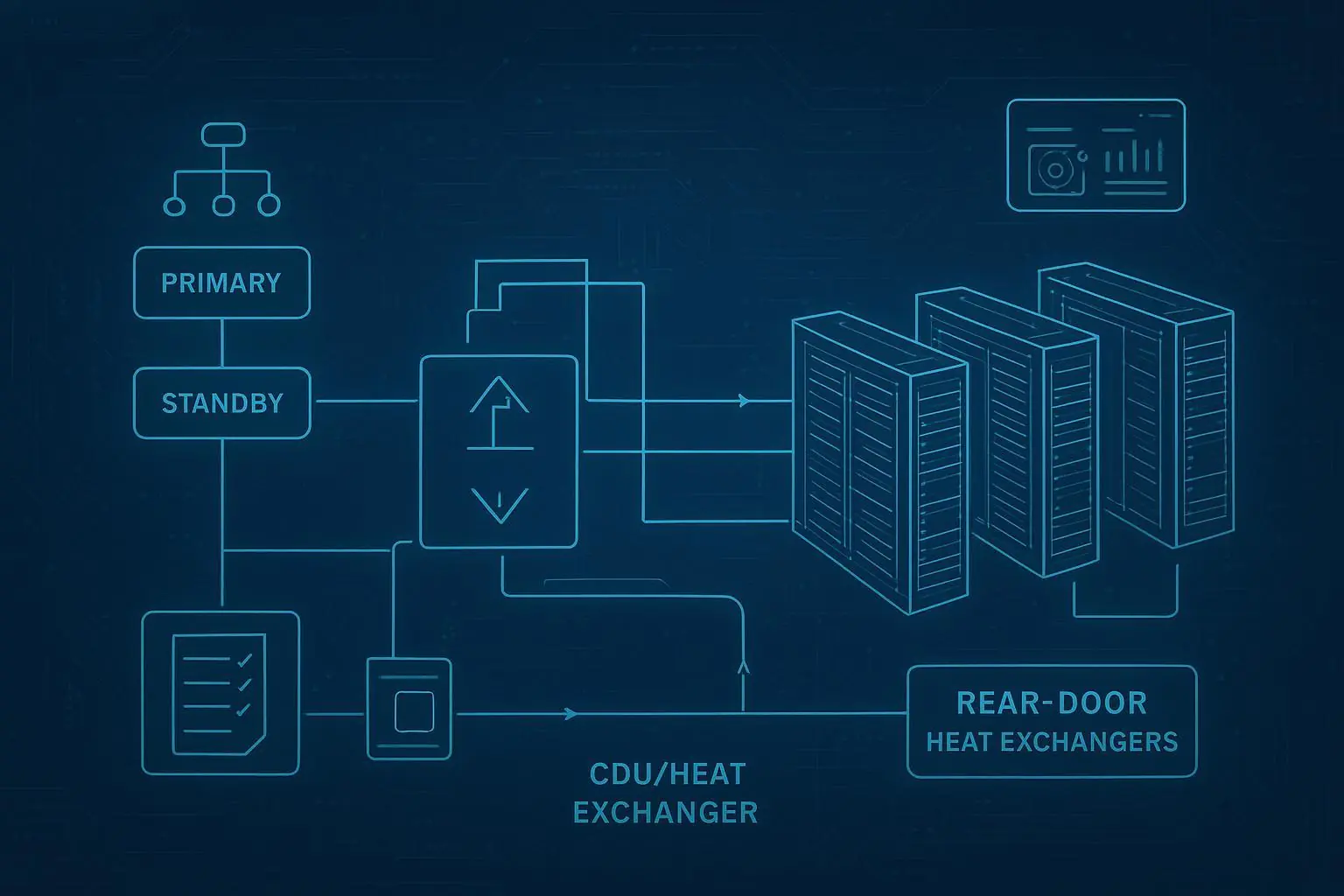

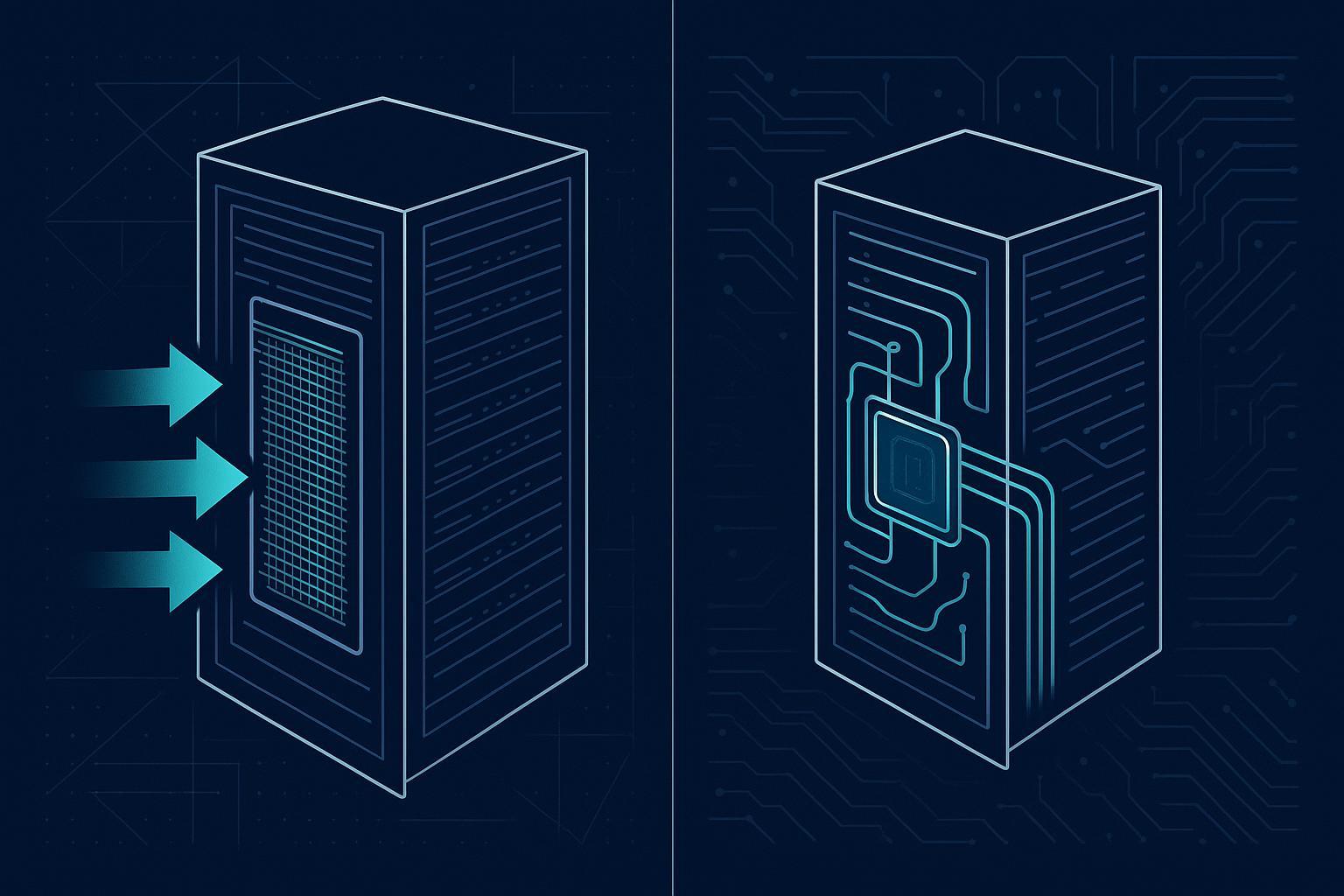

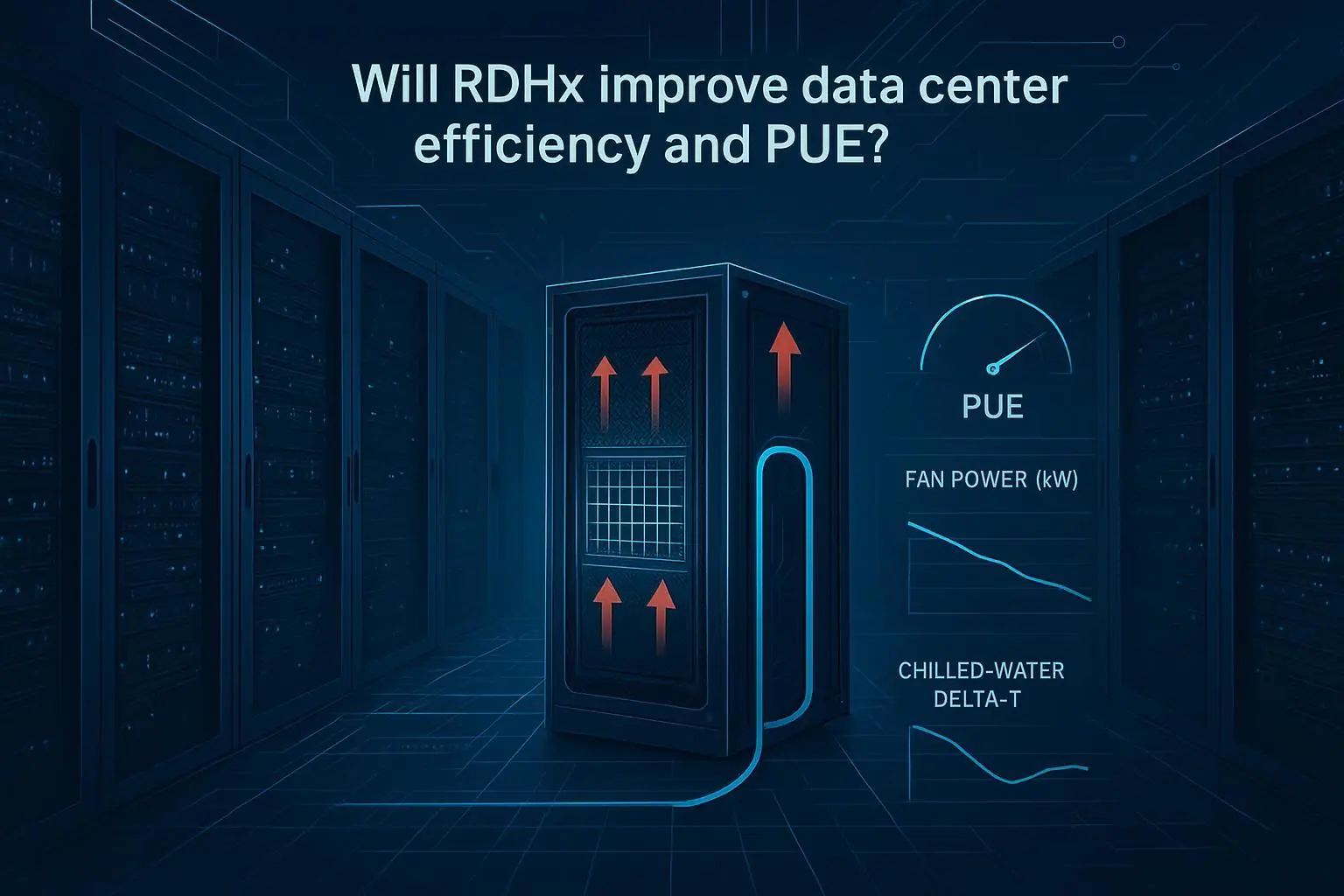

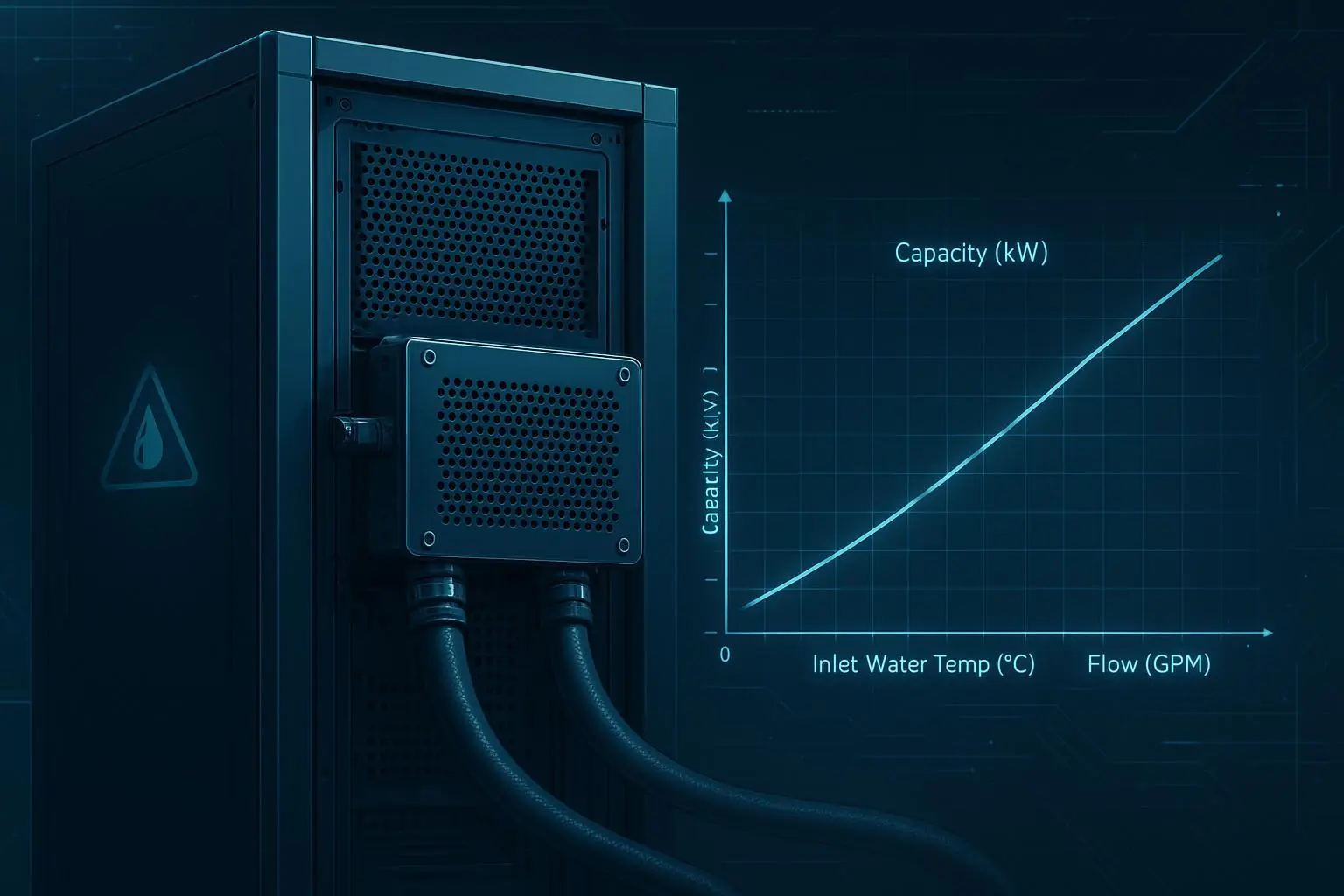

cooling topology options

monitoring/alarm baseline

interface definitions between modules (electrical/mechanical/network)

BOM & procurement pack

standard BOM template

approved alternates

long-lead tracker

Permitting/AHJ pack

standard drawing set

compliance mapping

submittal checklists

Commissioning pack

gate checklist aligned to Levels 0–6

FAT/SAT/functional/IST scripts + acceptance criteria

issue log template + closure rules

Operations pack

monitoring thresholds

SOPs for common alarms

spares list and maintenance calendar

training checklist + handover sign-offs

Program management pack

schedule template with decision gates

risk register template

change control template

Neutral sidebar: Example provider (Coolnetpower)

Example provider (neutral): Some teams accelerate delivery by working with an integrated provider that can supply modular/micro/containerized blocks plus power, cooling, and monitoring—reducing multi-vendor integration overhead.

Coolnetpower is one example of this type of provider. For reference, its integrated solution lines include MetaRow modular data centers, MetaRack micro data center cabinets, and MetaCuber containerized data centers.

If you’re building your own documentation baseline, pages such as the “utility hookups and permitting checklist” and “modular compliance and resilience best practices” guides can be useful references for structuring risk controls and gate evidence.

Next steps

If you want a practical starting point, use this roadmap to build two artifacts first:

A one-page “Week 0 readiness test” checklist.

A standardization kit folder (BOM template, commissioning scripts, and acceptance criteria).

If you’d like, we can also turn this into a site-ready package: a gate checklist, a risk register, and a vendor RFP section you can reuse across locations.