If you’re a distributor or SI building client-ready packages for AI and high-density deployments, “AI-driven thermal optimization” is only useful if it can be integrated, audited, and operated safely.

This review is written for procurement and supply-chain leads who need to shortlist vendors without relying on vague outcome claims. We’ll focus on what Coolnetpower’s stack covers (thermal optimization + liquid cooling + DCIM/monitoring), how it integrates into real facilities, what evidence you should request (when public metrics and named references aren’t available), and how to de-risk the decision with a structured on-site PoC.

Verdict (one sentence)

Coolnetpower is a strong shortlist candidate when you need an integrated cooling + monitoring/DCIM foundation and a supervisory optimization layer that can be verified through commissioning artifacts (point lists, logs, and acceptance criteria) rather than marketing promises.

Who this is for (and who it isn’t)

Shortlist Coolnetpower if you’re…

packaging repeatable high-density / AI retrofit programs for multiple client sites

operating mixed environments (air + liquid, phased upgrades) and can’t afford a rip-and-replace approach

willing to run a PoC with clear constraints, change control, and measurable acceptance checks

Be cautious if you’re…

expecting public, pre-published performance guarantees (those require site-specific measurement programs and contractual terms)

unable to instrument, tag, and validate telemetry (no data readiness = no credible optimization)

unwilling to define “who owns what” across BMS/DCIM/controls teams

Key takeaways

Coolnetpower’s offering is best understood as an integrated stack: cooling + optional liquid cooling + DCIM/monitoring + an AI-driven supervisory optimization layer.

For distributors/SIs, the practical differentiator is delivery readiness: point lists, alarm ownership, rollback behavior, commissioning artifacts, and operator training.

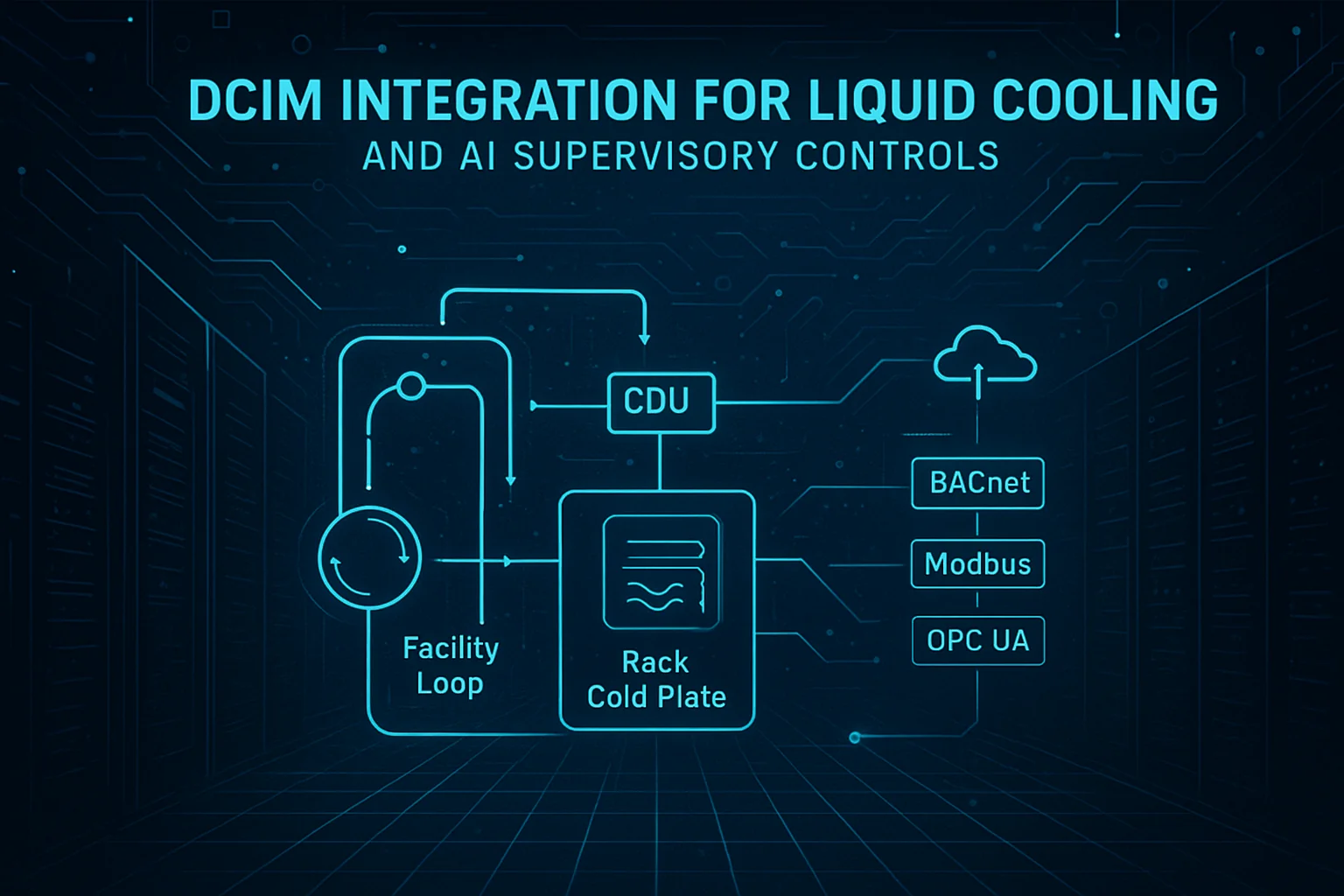

Integration openness matters most at the interfaces you’ll touch: BACnet at the BMS layer and Modbus across power and many plant components.

You can validate value without hype by demanding a PoC acceptance criteria pack (safety envelopes, control conflict checks, audit logs, and rollback tests).

Lock-in risk is usually visible early: if you can’t export trends/events and can’t trace decisions back to inputs, you’re buying a black box.

AI-driven thermal optimization platform evaluation criteria (procurement checklist)

Use this as a shortlist filter when you’re comparing vendors or packaging an SI offering for multiple end clients. It’s structured around what creates real project risk: integration scope, controls safety, documentation, and handover.

| Evaluation dimension | What to ask | What good evidence looks like | What to watch for |
|---|---|---|---|
| Scope clarity | Which assets/loops are in scope (air + liquid + power + DCIM)? | A written boundary diagram + point list | ‘End-to-end’ claims with no boundary model |
| Openness & interfaces | Exactly which interfaces use BACnet/Modbus? | Protocol map + sample register/point list | ‘Supports X’ with no point definitions |
| Read vs. write governance | What is read-only vs. closed-loop control? | A staged plan with a write-enable gate | Immediate closed-loop without baseline |
| Safety constraints | What constraints are enforced (ASHRAE envelopes, rate limits, deadbands)? | Documented constraint set + rollback test | ‘AI will keep it safe’ without constraints |
| Auditability | Can we trace decisions back to inputs? | Change logs + decision traces + exports | No audit trail; black-box outputs |
| Data readiness | What telemetry is mandatory vs. optional? | Data QA checklist + tagging schema | ‘We can work with any data’ |
| Commissioning | How is the PoC commissioned and verified? | Test scripts + acceptance criteria tables | ‘We’ll tune it onsite’ with no plan |
| Ops handover | What training and SOP/MOP updates are included? | Training plan + handover pack | No operator enablement |
| Support model | Who supports after go-live (remote/on-site, response times)? | Support plan + escalation model | Undefined responsibilities post-handover |

For a practical view of which data and tagging qualities matter most before optimization, see Coolnetpower’s data-readiness checklist (referenced under Next steps at the end of this article).

What “AI-driven thermal optimization” means in practice (and what it shouldn’t do)

Most buyers don’t need a machine-learning explainer. They need crisp answers to:

Where does this sit in the control stack?

What prevents it from breaking things when conditions change?

Supervisory optimization vs. controller replacement

In mature deployments, AI-driven thermal optimization is a supervisory layer that uses live telemetry, analytics, and constraints to recommend (or apply) bounded adjustments. It should not replace your local safety logic.

A practical model:

Local controllers (PLC/DDC/VFD logic, CDU controllers, CRAH controls, etc.) keep core safety and loop stability.

Supervisory optimization correlates signals across systems and proposes adjustments that reduce waste (for example, avoid overcooling or conflicting setpoints).

Procurement implication: a vendor that can’t describe boundaries (read vs. write points, override behavior, rollback, and alarm ownership) is a vendor that will create integration and commissioning risk.
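To make “bounded adjustments” concrete, here is a minimal Python sketch of how a supervisory layer might limit a proposed setpoint before anything is written. The class name, function name, and all limit values are hypothetical illustrations, not Coolnetpower’s implementation; the point is that each constraint is explicit and therefore testable.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SetpointConstraints:
    """Hypothetical constraint set for one writable setpoint (values in °C)."""
    min_value: float  # lower bound of the allowed band
    max_value: float  # upper bound of the allowed band
    max_step: float   # rate limit: largest change allowed per control cycle
    deadband: float   # proposals closer than this to the current value are ignored


def bound_adjustment(current: float, proposed: float, c: SetpointConstraints) -> float:
    """Return the setpoint that may actually be written this cycle."""
    delta = proposed - current
    # Deadband: treat very small proposals as "no change" to avoid chattering.
    if abs(delta) < c.deadband:
        delta = 0.0
    # Rate limit: cap how far the setpoint may move in one cycle.
    delta = max(-c.max_step, min(c.max_step, delta))
    # Absolute bounds: never leave the agreed band.
    return max(c.min_value, min(c.max_value, current + delta))
```

A vendor’s real constraint set will be richer than this, but a proposal should be able to name the equivalent of each field and show where it is enforced.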

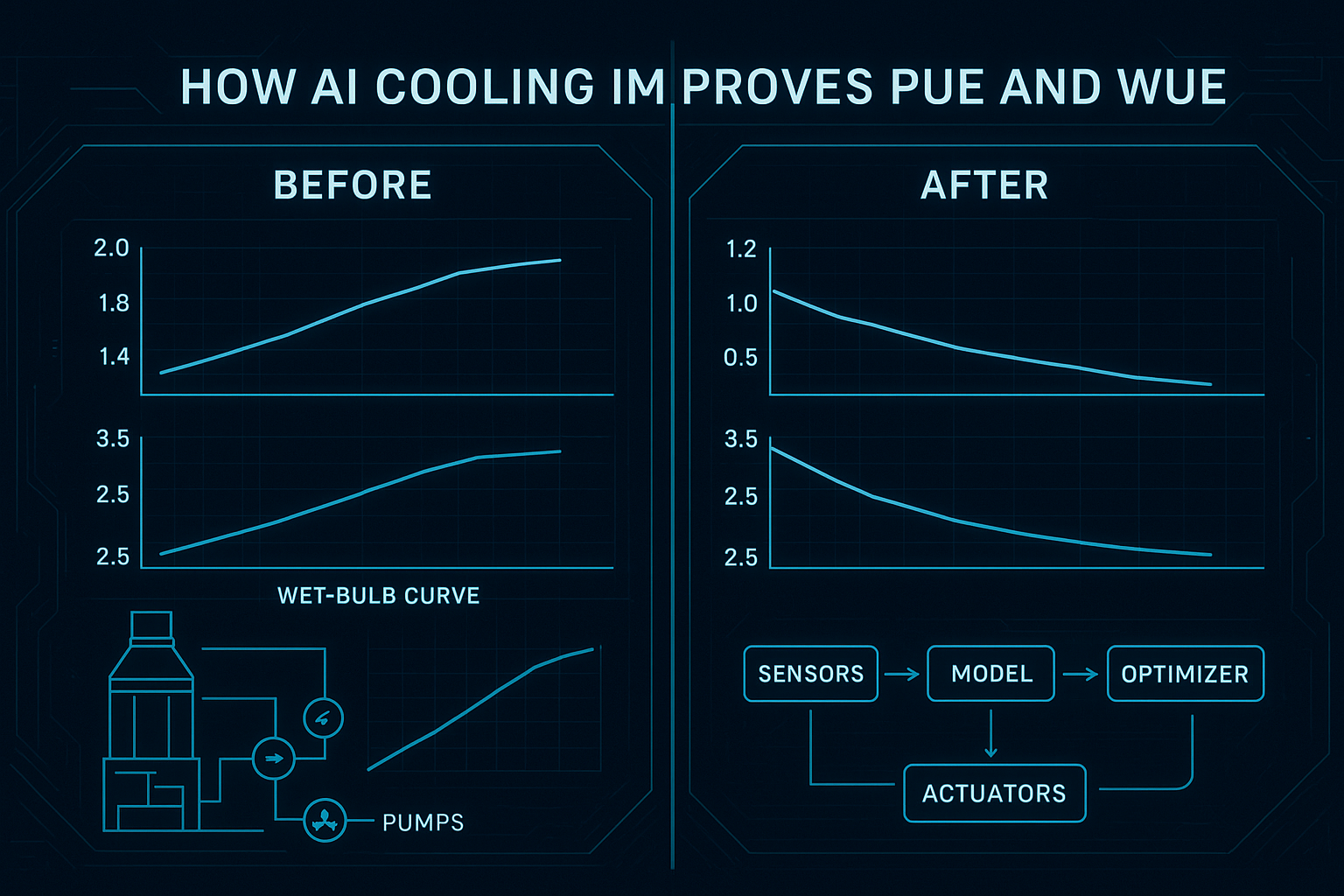

The metrics buyers care about: PUE and WUE (definitions, not promises)

Two metrics show up in almost every modern data center thermal optimization RFP:

PUE (Power Usage Effectiveness): total facility energy divided by IT equipment energy, as defined by The Green Grid’s “PUE: A Comprehensive Examination of the Metric”.

WUE (Water Usage Effectiveness): a standardized KPI specified in ISO’s listing for ISO/IEC 30134-9 (WUE).

This article does not publish site outcome numbers. Instead, it shows you how to verify whether a platform can influence the drivers behind PUE/WUE without compromising reliability.
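As a worked illustration of the two ratios, the sketch below computes PUE as defined above and WUE in its commonly used form (site water use in litres divided by IT equipment energy in kWh). The function names are hypothetical, and you should confirm measurement boundaries against ISO/IEC 30134-9 before using the WUE figure in a contract.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def wue(site_water_litres: float, it_equipment_kwh: float) -> float:
    """Water Usage Effectiveness in litres per kWh of IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return site_water_litres / it_equipment_kwh
```

Both ratios must be computed over the same measurement period and the same IT energy boundary, which is why the PoC should fix metering points before any baseline is taken.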

Safety guardrails: the ASHRAE thermal envelope

Any optimization story that ignores environmental envelopes is incomplete. As a baseline, require alignment to the recommended/allowable classes and limits summarized in the ASHRAE TC 9.9 “Thermal Guidelines” reference card (5th ed.).

Pro Tip: In your PoC, write the envelope constraints into acceptance criteria. “We operate safely” is not a test.
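One way to turn the envelope into a test is to scan the trend export for readings outside the agreed limits. The sketch below is a minimal Python example; the `Envelope` class, the reading layout, and any limit values you pass in are assumptions for illustration. Take the actual limits from the ASHRAE class agreed for the site.

```python
from dataclasses import dataclass
from typing import List, Tuple

# (ISO timestamp, sensor id, inlet temperature in °C); a hypothetical export layout
Reading = Tuple[str, str, float]


@dataclass(frozen=True)
class Envelope:
    """Agreed inlet temperature limits for the PoC zone (site-specific)."""
    min_temp_c: float
    max_temp_c: float


def find_excursions(readings: List[Reading], envelope: Envelope) -> List[Reading]:
    """Return every reading whose temperature falls outside the envelope."""
    return [
        r for r in readings
        if not (envelope.min_temp_c <= r[2] <= envelope.max_temp_c)
    ]
```

An empty result over the whole PoC window, or a list in which every entry maps to an approved exception, is something an auditor can check. Humidity limits can be handled the same way with a second pair of bounds.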

Coolnetpower capability snapshot (what you’re actually buying)

For procurement-grade evaluation, separate capabilities from proof artifacts.

Monitoring + DCIM foundation

Coolnetpower’s DCIM/monitoring layer is positioned as an operational foundation: asset views, environmental and power monitoring, alarms/events, and remote management.


What you should ask to see in a demo (because it impacts deployment scope and cost):

alarm taxonomy and routing model (who gets paged, and when?)

asset naming conventions and tag model

trend retention, export formats, and audit log visibility

role-based access and approval workflows

AI thermal optimization functions (capability-level)

At a capability level, an AI-driven thermal optimization platform should support:

hotspot detection and risk prediction (early warning before excursions become incidents)

setpoint optimization under constraints (reduce unnecessary margin; avoid oscillation)

cross-domain correlation (connect IT load changes to thermal response)

Coolnetpower’s own framing of the closed-loop architecture is described in AI-driven thermal optimization for green data centers.

Operations enablement (often the real differentiator)

SIs win deals when delivery is repeatable. For procurement, “repeatable” means the vendor can provide:

commissioning workflow and test scripts

change control model (approval gates, rollback, versioning)

training plan (operator, controls engineer, commissioning)

handover artifacts (as-built maps, point lists, SOP/MOP updates)

If these are vague, downstream costs won’t be.

DCIM integration for thermal optimization (what to insist on)

Many “AI data center cooling” proposals fail at the same point: integration details.

Your goal is to eliminate two common failure modes:

Point ambiguity: unclear read/write point definitions and tagging.

Ownership ambiguity: unclear responsibility when alarms trigger or controls conflict.

Where Modbus typically shows up

In many data centers, Modbus is common across:

power meters and some UPS/PDU telemetry

plant equipment interfaces (varies by vendor/deployment)

some cooling subsystems and skids

Where BACnet typically shows up

BACnet is widely used at the BMS layer for:

air handling and room cooling equipment interfaces

schedules and supervisory setpoints

status/command points across HVAC components

The exact architecture differs by site, but procurement can still demand one consistent deliverable: a point list and boundary model.

Integration deliverables to request (RFP-ready)

Require a mapping table as an attachment to any proposal:

System / layer | Protocol | Read signals (examples) | Write points (examples) | Ownership boundary | Fail-safe / rollback |

|---|---|---|---|---|---|

BMS / HVAC supervisory | BACnet | temps, humidity, fan status, valve positions | bounded setpoint adjustments (if allowed) | BMS retains safeties | revert to baseline schedules |

Power chain monitoring | Modbus | kW, kWh, breaker states, UPS status | typically none | power systems owner | read-only in early PoC |

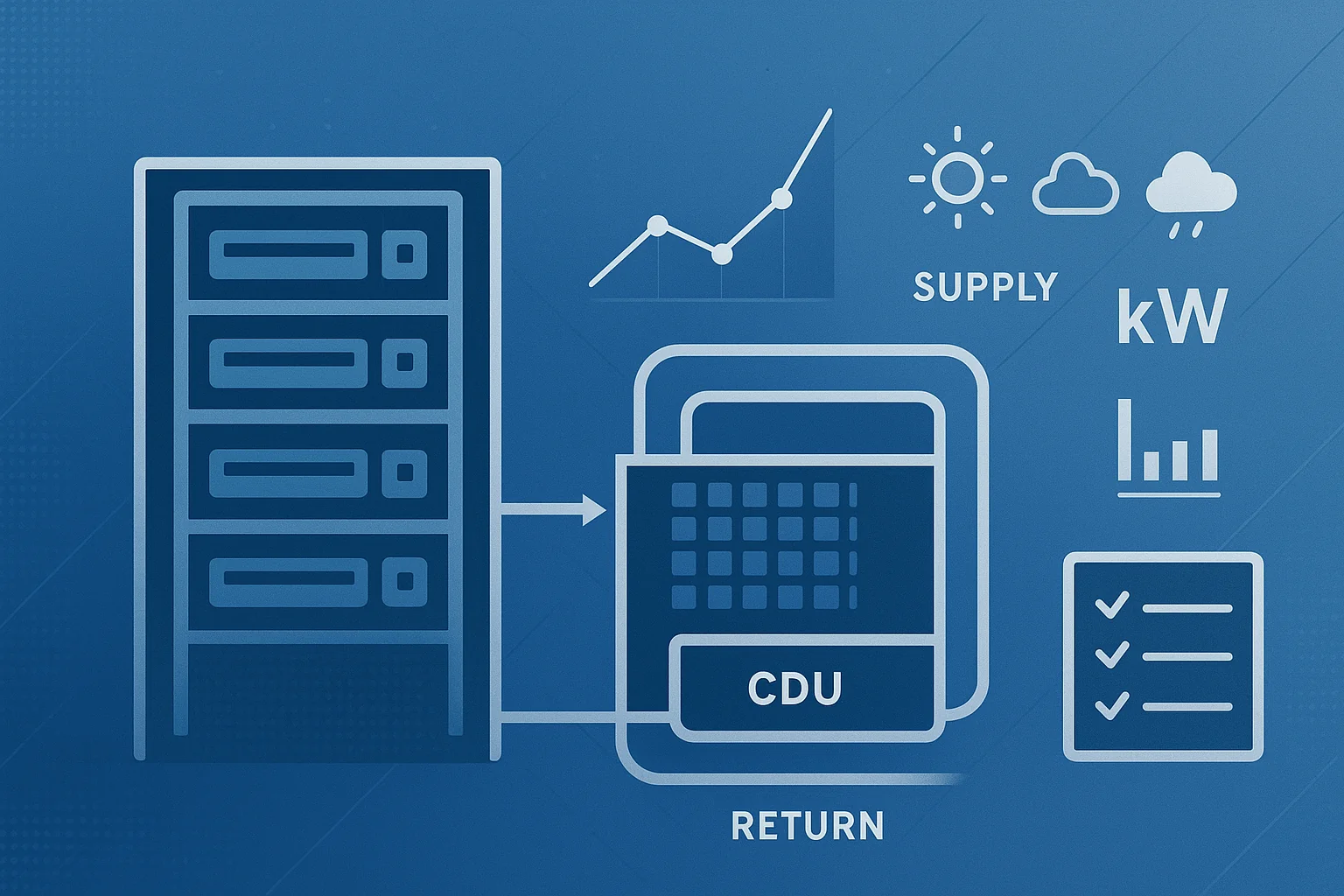

Liquid loop / CDU | vendor-dependent | supply/return temps, pump state, alarms | bounded controls (if integrated) | CDU owns loop safety | local control precedence |

DCIM / monitoring | protocol/API mix | alarms, events, trends | notifications, integrations | DCIM admin | export + audit logs |

Key Takeaway: If the vendor can’t provide this table early, you can’t price risk.
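If you collect this table from several vendors, it helps to hold it in a machine-readable form so gaps can be flagged consistently. The following Python sketch assumes a hypothetical `InterfaceRow` record with the same columns as the table; the gap rules are illustrative and should be adapted to your own RFP.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class InterfaceRow:
    """One row of the integration mapping table (hypothetical structure)."""
    layer: str
    protocol: str
    read_signals: List[str]
    write_points: List[str]
    owner: str
    fail_safe: str


def row_gaps(row: InterfaceRow) -> List[str]:
    """List what is missing from a vendor-supplied row."""
    gaps = []
    if not row.read_signals and not row.write_points:
        gaps.append("no points listed")
    if not row.owner:
        gaps.append("no ownership boundary")
    # Any writable point needs a documented way back to baseline.
    if row.write_points and not row.fail_safe:
        gaps.append("write points without fail-safe / rollback")
    return gaps
```

Rows that return an empty list are not automatically acceptable, but rows that return gaps are the ones to raise before pricing.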

Liquid cooling integration (where a lot of risk lives)

High-density compute changes the thermal equation. For many sites, liquid cooling arrives before operational practices are ready for it.

From a partner delivery perspective, the best thermal optimization platform story is one that doesn’t ignore liquid loops.

What “liquid cooling monitoring and control” should mean (practically)

Even if you keep control loops local, procurement should expect the monitoring/optimization layer to support:

supply/return temperature observation

flow indicators (where instrumented)

pump/valve state monitoring

alarms signaling risk (pressure excursions, leak detection systems, etc.—site dependent)

The key isn’t naming every sensor. It’s making gaps explicit and testable.

Hybrid reality: air + liquid will coexist

Most sites run hybrid environments for years: some rooms or rows remain air-cooled while AI zones adopt direct-to-chip or other liquid solutions.

Coolnetpower’s liquid cooling framing can be used as a terminology reference for stakeholders and procurement documentation:

Coordination points to clarify before commissioning

SIs should push for explicit agreement on:

alarm ownership and escalation paths

change windows for optimization actions

rollback sequencing and “safe mode” behavior

If the answer is “we’ll figure it out onsite,” the cost will show up in commissioning hours.

Proof without published metrics: what to request (and what “good” looks like)

If you can’t publish numeric outcomes and can’t name customers, you can still run a procurement-grade evaluation by requiring a consistent evidence package.

Evidence pack checklist

Use this checklist to standardize vendor responses:

Trend package: timestamped trends for temperatures, key setpoints, and cooling states

Change log: what changed, when, why, and who approved it

Alarm/event logs: before/after during relevant change windows

As-built integration map: points, protocols, ownership, network zones

Rollback test record: documented revert-to-baseline procedure and results

Operations pack: SOP/MOP updates plus training completion records

⚠️ Warning: A platform that can’t provide audit logs and change traces is hard to defend in a multi-stakeholder procurement process.

How to evaluate PUE/WUE impact without publishing numbers

Even if you never publish the number, you can validate whether the platform affects PUE/WUE drivers by checking:

whether thermal safety margins are reduced without increasing excursions

whether control conflicts are reduced (fewer oscillations, fewer competing setpoints)

whether cooling is better aligned to actual IT load patterns
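The “fewer oscillations” check can be made measurable by counting direction reversals in a setpoint or valve-position trend before and after optimization is enabled. The Python sketch below is one simple way to do that; the function name and the `min_step` noise threshold are assumptions, not a standard metric.

```python
from typing import Sequence


def count_reversals(values: Sequence[float], min_step: float = 0.1) -> int:
    """Count how often a trend changes direction, ignoring steps below min_step."""
    reversals = 0
    last_direction = 0  # +1 rising, -1 falling, 0 not yet known
    for prev, curr in zip(values, values[1:]):
        step = curr - prev
        if abs(step) < min_step:
            continue  # treat small moves as noise
        direction = 1 if step > 0 else -1
        if last_direction and direction != last_direction:
            reversals += 1
        last_direction = direction
    return reversals
```

Comparing the count over equal-length windows (for example, one week of observation mode against one week of constrained optimization) gives a like-for-like indicator without publishing any energy numbers.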

Coolnetpower’s internal explanation of the mechanism is a useful cross-reference for the engineering team:

Integration test questions to include in your PoC (make it measurable)

If you want procurement-grade confidence, write the integration tests into the PoC—not as “nice to haves,” but as pass/fail checks. Below are tests that are simple to run and hard to fake.

Point mapping and tagging tests

Test: Spot-check 30–50 critical points (temps, setpoints, device states) end-to-end from source system → monitoring/analytics layer.

Pass condition: point names match the agreed dictionary; timestamps are time-synced; units are consistent; missing values are explicitly flagged.
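The pass condition above translates almost directly into a per-point check. This Python sketch assumes a hypothetical sample layout (`name`, `unit`, `value`, `timestamp`, `missing_flag`) and a tag dictionary keyed by point name; the five-second skew limit is a placeholder for whatever the PoC agrees.

```python
from datetime import datetime, timedelta
from typing import Dict, List


def check_point(
    sample: dict,
    dictionary: Dict[str, dict],
    now: datetime,
    max_skew: timedelta = timedelta(seconds=5),
) -> List[str]:
    """Return the list of issues found for one sampled point (empty = pass)."""
    issues = []
    expected = dictionary.get(sample["name"])
    if expected is None:
        issues.append("name not in dictionary")
    elif sample.get("unit") != expected["unit"]:
        issues.append("unit mismatch")
    # A missing value is acceptable only when it is explicitly flagged.
    if sample.get("value") is None and not sample.get("missing_flag", False):
        issues.append("missing value not flagged")
    if abs(now - sample["timestamp"]) > max_skew:
        issues.append("timestamp skew")
    return issues
```

Running this over the 30–50 spot-check points and attaching the output to the PoC report gives a repeatable record of the tagging test.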

Read/write boundary tests

Test: Demonstrate a “read-only” mode with no write capability enabled, then show the formal gate to enable writes.

Pass condition: write-enable requires explicit approval; every write generates an audit log entry with who/when/why.
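A minimal sketch of what a write-enable gate with audit logging could look like is shown below. `WriteGate` and its fields are hypothetical and stand in for whatever the vendor’s platform provides; the sketch only records the write and does not talk to any real controller.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class WriteGate:
    """Read-only by default; writes require an explicit, logged approval."""
    approved_by: Optional[str] = None
    audit_log: List[dict] = field(default_factory=list)

    def enable_writes(self, approver: str) -> None:
        self.approved_by = approver
        self._log("write-enable", who=approver, why="formal gate approval")

    def write(self, point: str, value: float, who: str, why: str) -> None:
        if self.approved_by is None:
            raise PermissionError("read-only mode: writes are not enabled")
        # A real integration would send the value to the BMS/controller here.
        self._log("write", who=who, why=why, point=point, value=value)

    def _log(self, action: str, **details) -> None:
        entry = {"action": action, "when": datetime.now(timezone.utc).isoformat()}
        entry.update(details)
        self.audit_log.append(entry)
```

In a demo, ask the vendor to show the equivalent of both paths: a rejected write while in read-only mode, and an approved write with its who/when/why entry.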

Control conflict detection

Test: Create a scenario where two systems could fight (for example, a BMS schedule change while optimization is active).

Pass condition: the platform detects the conflict and defaults to the agreed owner (usually BMS/local controls), with alarms and traceability.
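One simple detection rule, sketched here in Python under stated assumptions, is to compare the setpoint the optimizer last wrote with the value now observed on the controller: if they differ by more than a tolerance, something else changed it and the agreed owner takes precedence. The names, the tolerance, and the default owner are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConflictDecision:
    conflict: bool
    owner: str   # who controls the point after the check
    alarm: str   # message to raise; empty when there is no conflict


def check_conflict(
    last_written: float,
    observed: float,
    tolerance: float = 0.05,
    default_owner: str = "bms",
) -> ConflictDecision:
    """Yield to the agreed owner if the setpoint was changed externally."""
    if abs(observed - last_written) > tolerance:
        message = (
            f"setpoint changed externally: wrote {last_written}, observed {observed}"
        )
        return ConflictDecision(True, default_owner, message)
    return ConflictDecision(False, "optimizer", "")
```

Real platforms may use richer signals (priority arrays, override flags, schedule events), so treat this as the minimum behavior to ask for, not a description of any product.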

Rollback / safe-mode drills

Test: Trigger a rollback drill during a controlled window.

Pass condition: the site returns to baseline controls within the agreed time, and the rollback procedure is repeatable.

A simple on-site PoC plan (timeline + acceptance criteria)

Below is a procurement-friendly PoC that an SI can quote and deliver.

Timeline (example: 6–10 weeks)

| Phase | Typical duration | What happens | Deliverables you can audit |
|---|---|---|---|
| Scope + data readiness | 1–2 weeks | define zone, confirm signals, define constraints | point list draft, data QA checklist |
| Integration + tagging | 1–2 weeks | connect sources, normalize names, validate time sync | as-built integration map, tag dictionary |
| Observation mode | 2–3 weeks | read-only monitoring, establish baseline patterns | baseline trend pack, anomaly list |
| Constrained optimization | 2–3 weeks | bounded changes under change control | change logs, rollback test results |
| Reporting + handover | 1 week | summarize evidence and operational impacts | PoC report, SOP/MOP updates, training sign-off |

Acceptance criteria (example)

| Criterion | How measured | Pass / fail rule | Evidence artifact |
|---|---|---|---|
| Safety envelope compliance | inlet temps/humidity vs. defined limits | no unapproved violations | trends + alarms |
| Control conflict avoidance | oscillation checks, competing setpoints | no persistent conflict patterns | control logs |
| Rollback works | simulated override scenario | revert to baseline within agreed time | rollback test record |
| Alarm ownership works | alarm routing test | correct team receives and acknowledges | ticket/alert logs |
| Operator readiness | training completion | required roles complete training | training records |

If you want a one-page “PoC acceptance criteria pack” as a downloadable asset, this table is already the backbone.
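For teams that want to score the PoC mechanically, the acceptance rules can be written as explicit pass/fail checks. The Python sketch below uses hypothetical result keys that an SI would fill in from the evidence artifacts; the rollback time limit is whatever the parties agreed.

```python
from typing import Dict


def evaluate_poc(results: Dict[str, float], rollback_limit_s: float) -> Dict[str, bool]:
    """Map measured PoC results to one pass/fail flag per acceptance criterion."""
    return {
        "safety_envelope": results["unapproved_excursions"] == 0,
        "control_conflicts": results["persistent_conflicts"] == 0,
        "rollback": results["rollback_seconds"] <= rollback_limit_s,
        "alarm_ownership": results["alarms_misrouted"] == 0,
        "operator_readiness": results["roles_trained"] >= results["roles_required"],
    }


def poc_passed(checks: Dict[str, bool]) -> bool:
    """The PoC passes only if every criterion passes."""
    return all(checks.values())
```

Keeping the rules this explicit makes it harder for a borderline result to be argued into a pass after the fact.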

Compliance and documentation for procurement (certifications + approval pack)

Procurement teams don’t buy “trust.” They buy documentation.

Certifications/standards to verify

Treat the following as claims to verify against the compliance pack, not as givens:

ISO 9001 / ISO 14001 / ISO 27001 — available in the compliance pack upon request

CE / UL / RoHS — available in the compliance pack upon request

Compliance pack checklist (what to request)

certificates and scope statements (what entities/sites are covered)

product compliance declarations relevant to your region

test reports where applicable

supplier quality process summary and change notification policy

Documentation that accelerates approvals

Ask for a standardized delivery pack:

datasheets (cooling, liquid cooling components, monitoring)

wiring and network diagrams

protocol maps and point lists (BACnet/Modbus)

commissioning checklist and test scripts

spares/parts plan and response SLAs

training plan and handover documents

Openness, standards, and avoiding vendor lock-in

“Open” is only meaningful if it’s testable.

What openness should mean (practical definition)

documented point lists for BACnet/Modbus integration

exportable trends and event logs (for reporting and audits)

clear separation of responsibilities (BMS vs supervisory optimization)

Vendor lock-in red flags

proprietary-only gateways with no documented point model

control actions you can’t trace back to inputs (no audit trail)

inability to export historical data in usable formats

If you see these early, it’s usually cheaper to stop than to “fix it later.”

Pros, cons, and fit guidance (detailed)

This is the section most partner/procurement readers use to make a shortlist decision.

Pros (why Coolnetpower can be a strong SI/distributor fit)

Integrated stack reduces coordination overhead

A single vendor covering cooling, optional liquid cooling components, and monitoring/DCIM reduces interface risk and “vendor A vs vendor B” deadlocks.

For partners, that often translates into fewer subcontractor boundaries and fewer late-stage integration surprises.

A supervisory optimization posture fits real facilities

Most sites can’t afford disruptive control migrations.

A supervisory layer that respects existing controllers is easier to deploy, govern, and audit.

Evidence-based procurement is achievable without public outcome numbers

The required artifacts (point lists, logs, rollback tests, acceptance criteria) let you verify operational impact in your own site context.

This is often a better procurement posture than relying on marketing benchmarks that may not match your climate, load profile, or constraints.

Clear integration standards focus: DCIM integration via BACnet/Modbus boundaries

Predictable scoping is a procurement advantage.

If you can standardize your point model and ownership boundaries across projects, you reduce delivery variance.

Partner delivery readiness (what to look for in practice)

repeatable PoC plan

documented change governance and rollback

training and handover assets aligned to ops realities

Cons / trade-offs (what could slow results or increase hidden cost)

Data readiness is the gate—and it’s not optional

If sensor coverage is incomplete, timestamps drift, or tagging is inconsistent, optimization becomes guesswork.

The practical solution is to treat data readiness as a contractual scope item with pass/fail checks.

Integration effort varies widely by “what you already have”

Two sites can both “have BACnet,” but differ in: naming standards, segmentation rules, permission models, and change governance.

Expect real effort in mapping, validating, and documenting the interface—not just “connecting.”

Closed-loop optimization requires change control maturity

If your client cannot define who approves changes, when changes are allowed, and how rollbacks are executed, you’ll be stuck in observation mode.

That’s not a vendor failure; it’s an operational readiness gap that must be addressed.

Liquid cooling increases stakeholder count and commissioning complexity

The moment you touch CDUs and liquid loops, you add owners (mechanical, facilities, IT, and sometimes OEMs).

Procurement should price for additional integration workshops, test windows, and acceptance checks.

Lock-in risk is mostly about auditability, not protocol logos

A vendor can support Modbus/BACnet and still behave like a black box if the decision trace isn’t visible.

Make exportability and traceability explicit requirements.

Best fit / not best fit

Best fit:

distributors/SIs delivering repeatable AI-density upgrade packages

sites planning hybrid (air + liquid) thermal architectures

organizations able to run a structured PoC with documented acceptance criteria

Not the best fit (or needs extra planning):

sites unwilling to instrument/validate data and define control boundaries

procurement processes that require hard public guarantees up front without allowing a PoC

Training and handover: what good looks like for ops teams

Procurement teams often underestimate this: thermal optimization only sticks if operators trust it. In practice, that requires training that is specific to your control boundaries and your alarms—not generic product training.

Minimum training tracks (partner-ready)

Operator track (day-to-day): dashboards, alarms, what actions are automated vs manual, and what to do during excursions.

Controls/commissioning track: point mapping, constraint configuration, change windows, and rollback drills.

Operations documentation track: how SOP/MOP documents are updated, approved, and versioned.

Handover artifacts to require

As-built point list + tag dictionary

Alarm taxonomy and escalation matrix

Change control and rollback runbook

PoC final report with acceptance criteria results

If a vendor can’t deliver these artifacts cleanly, the SI ends up owning the ambiguity—and the cost.

Next steps

Two low-friction actions reduce procurement risk fastest:

Request a BACnet/Modbus integration point list template + evidence pack checklist.

Run a 6–10 week on-site PoC using the acceptance criteria above, then decide based on artifacts—not adjectives.

For teams who want an internal evaluation framework before engaging vendors, start with: Data requirements for AI thermal optimization: a practical checklist.

FAQ (procurement questions that come up every time)

Can Coolnetpower integrate with our existing liquid cooling?

Yes—if you treat it as an integration scope item. The practical question isn’t “can it,” but which signals are available from your CDUs/loops, via which interface, and who owns safeties. In procurement terms, require the point list, boundary model, and rollback drill in the PoC.

What PUE/WUE improvements has Coolnetpower delivered?

This article intentionally does not publish site outcome numbers. The procurement-safe way to evaluate impact is to run a constrained PoC and review the evidence pack: trends, change logs, conflict checks, and envelope compliance. If a vendor can’t provide auditable artifacts, the numbers won’t be defensible anyway.

Can we run a risk-controlled on-site PoC?

Yes. Use the 6–10 week plan and acceptance criteria tables above. The most important risk control is a staged rollout (read-only → constrained optimization) and a tested rollback procedure.

What training should we require for our ops team?

At minimum: operator workflow training, controls/commissioning training, and documented SOP/MOP updates—plus an escalation matrix. If these are missing, adoption usually stalls after the PoC.

How do we avoid vendor lock-in?

Make openness testable: insist on exportable trends/events, a documented point model, an auditable change log, and a clear boundary statement that keeps local safeties local. Protocol logos alone don’t prevent lock-in.